Building a Free GitHub Copilot Alternative with Continue + GPUStack

Seal


Continue is an open-source AI coding assistant and an alternative to GitHub Copilot. It lets you connect various large language models (LLMs) within VS Code and JetBrains to build custom code autocompletion and chat capabilities. It supports:

- Code parsing
- Code autocompletion
- Code optimization suggestions
- Code refactoring
- Questions about code implementation
- Online documentation search
- Terminal error parsing

and more. It helps developers write code and work more efficiently.

In this tutorial, we will use Continue + GPUStack to build a free, locally hosted GitHub Copilot alternative that gives developers an AI pair-programming experience.

Running Models with GPUStack

First, we will deploy the models on GPUStack. Continue recommends three model types:

- Chat model: select llama3.1, the latest open-source model trained by Meta.
- Autocompletion model: select starcoder2:3b, a highly advanced autocompletion model trained by Hugging Face.
- Embedding model: select nomic-embed-text, which supports a context length of 8192 tokens and outperforms OpenAI's ada-002 and text-embedding-3-small models on both short- and long-context tasks.

After deploying the models, you also need to create an API key in the API Keys section. Continue uses this key to authenticate when it accesses the models deployed on GPUStack.
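Before wiring up the editor, it can help to confirm that the key and endpoint work. The sketch below builds an OpenAI-style chat completion request against the GPUStack address used later in this article. It assumes the endpoint follows the usual OpenAI conventions (a `/chat/completions` path under the API base and a `Bearer` token in the `Authorization` header); the function names `build_chat_request` and `send` are made up for illustration.

```python
import json
import urllib.request


def build_chat_request(api_base: str, api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request.

    Assumption: the server exposes /chat/completions under api_base and
    accepts the API key as a Bearer token, as OpenAI-compatible APIs usually do.
    """
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=api_base.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> dict:
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request, timeout=60) as response:
        return json.load(response)
```

With a reachable server, something like `send(build_chat_request("http://192.168.50.4/v1-openai", "YOUR_GPUSTACK_API_KEY", "llama3.1", "Say hello"))` should return a JSON object. An authentication error at this point most likely means the key was copied incorrectly.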

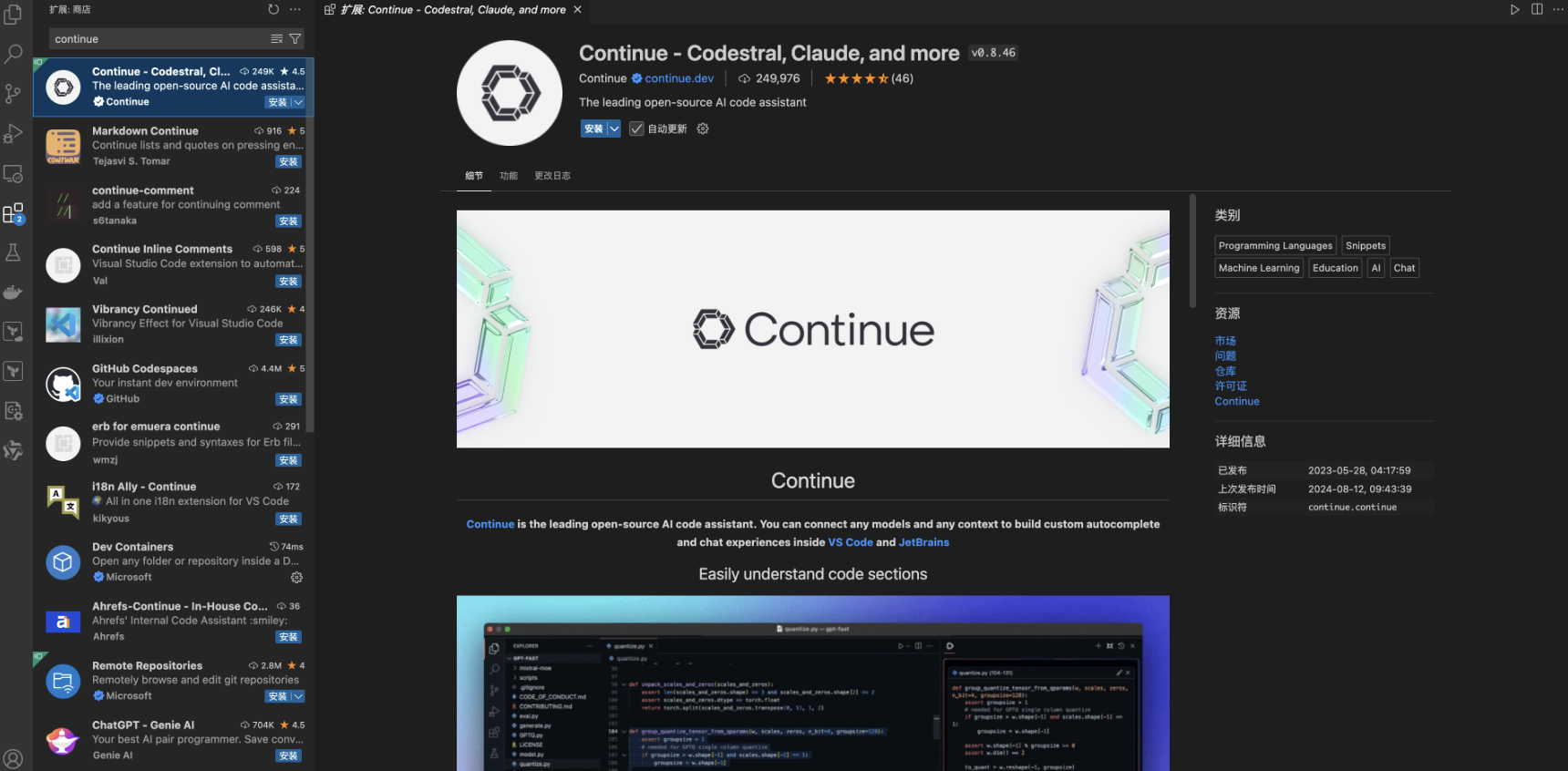

Installing and Configuring Continue

Continue provides extensions for both VS Code and JetBrains. In this article, we will use VS Code as an example. Install Continue from the VS Code extension store.

Once installed, drag the Continue extension to the right panel so that it does not conflict with the file explorer.

Then, select the settings button in the bottom-right corner to edit Continue's configuration and connect to the models deployed on GPUStack. Replace the "models", "tabAutocompleteModel", and "embeddingsProvider" sections with the following, substituting your own GPUStack address for "apiBase" and your own GPUStack-generated API key for "apiKey":

    {
      "models": [
        {
          "title": "Llama 3.1",
          "provider": "openai",
          "model": "llama3.1",
          "apiBase": "http://192.168.50.4/v1-openai",
          "apiKey": "gpustack_f58451c1c04d8f14_c7e8fb2213af93062b4e87fa3c319005"
        }
      ],
      "tabAutocompleteModel": {
        "title": "Starcoder 2 3b",
        "provider": "openai",
        "model": "starcoder2",
        "apiBase": "http://192.168.50.4/v1-openai",
        "apiKey": "gpustack_f58451c1c04d8f14_c7e8fb2213af93062b4e87fa3c319005"
      },
      "embeddingsProvider": {
        "provider": "openai",
        "model": "nomic-embed-text",
        "apiBase": "http://192.168.50.4/v1-openai",
        "apiKey": "gpustack_f58451c1c04d8f14_c7e8fb2213af93062b4e87fa3c319005"
      }
    }
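All three sections share the same "apiBase" and "apiKey", so it is easy to update one and forget the others. As a rough sketch, the Python helper below generates the same three sections from a single address and key. The helper name `build_continue_config` is hypothetical, and the model names simply mirror the configuration above; adjust them to match the names you used when deploying on GPUStack.

```python
import json
from typing import Optional


def build_continue_config(api_base: str, api_key: str) -> dict:
    """Return the three Continue config sections, all pointing at one GPUStack server."""

    def entry(model: str, title: Optional[str] = None) -> dict:
        # Every section uses the "openai" provider because GPUStack
        # is accessed through its OpenAI-compatible API.
        item = {
            "provider": "openai",
            "model": model,
            "apiBase": api_base,
            "apiKey": api_key,
        }
        if title is not None:
            item = {"title": title, **item}
        return item

    return {
        "models": [entry("llama3.1", "Llama 3.1")],
        "tabAutocompleteModel": entry("starcoder2", "Starcoder 2 3b"),
        "embeddingsProvider": entry("nomic-embed-text"),
    }


if __name__ == "__main__":
    # Placeholder values: substitute your own server address and API key.
    config = build_continue_config("http://192.168.50.4/v1-openai", "YOUR_GPUSTACK_API_KEY")
    print(json.dumps(config, indent=2))
```

Running the script prints JSON that can be pasted over the corresponding sections of Continue's configuration file.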

Using Continue

After configuring Continue to connect to the GPUStack-deployed models, go to the top-right corner of the Continue plugin interface and select the Llama 3.1 model. You can now use the features mentioned at the beginning of this tutorial:

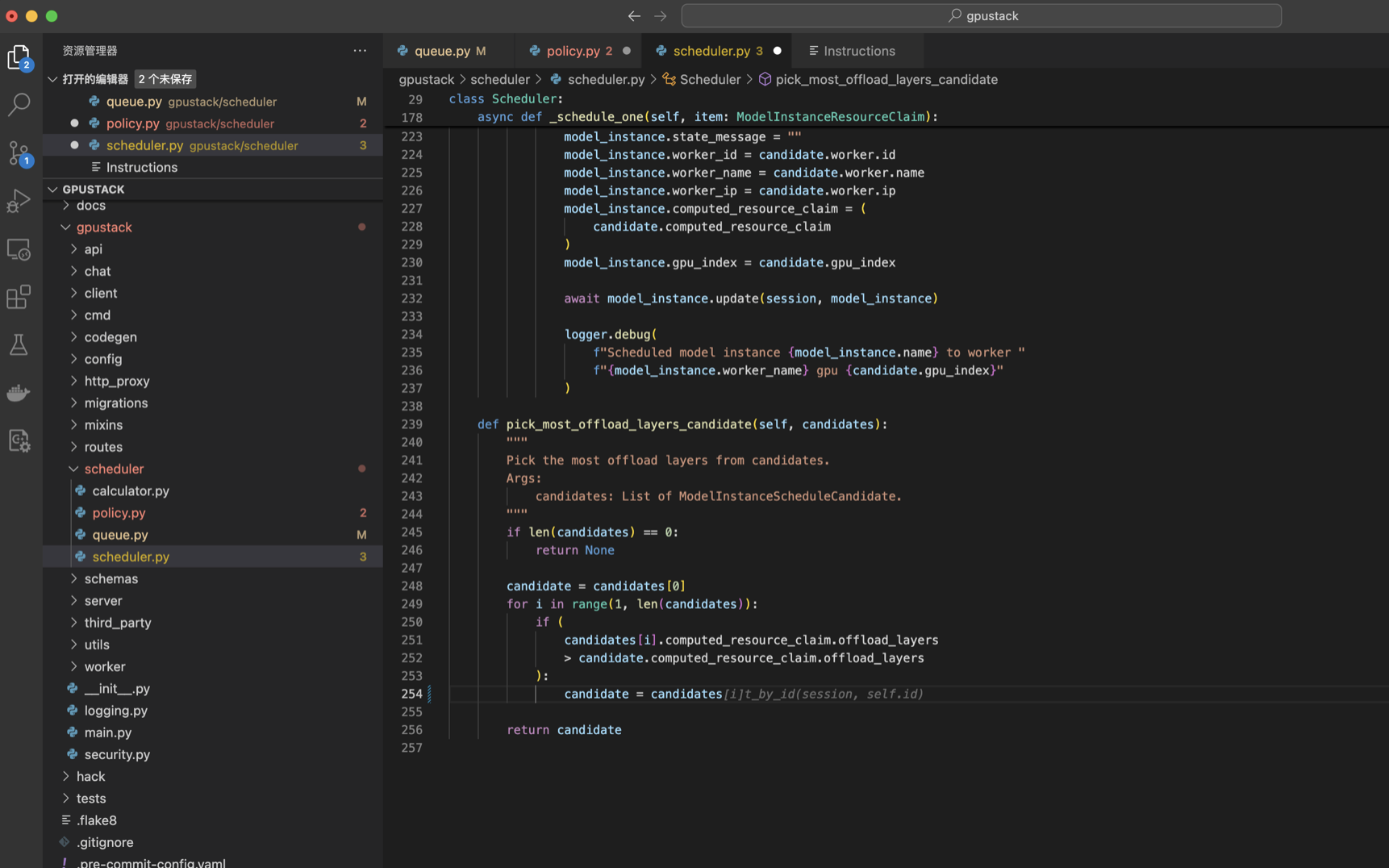

- Code Parsing: Select the code, press Cmd/Ctrl + L, and enter a prompt to let the local LLM parse the code:

- Code Autocompletion: While coding, press Tab to let the local LLM attempt to autocomplete the code:

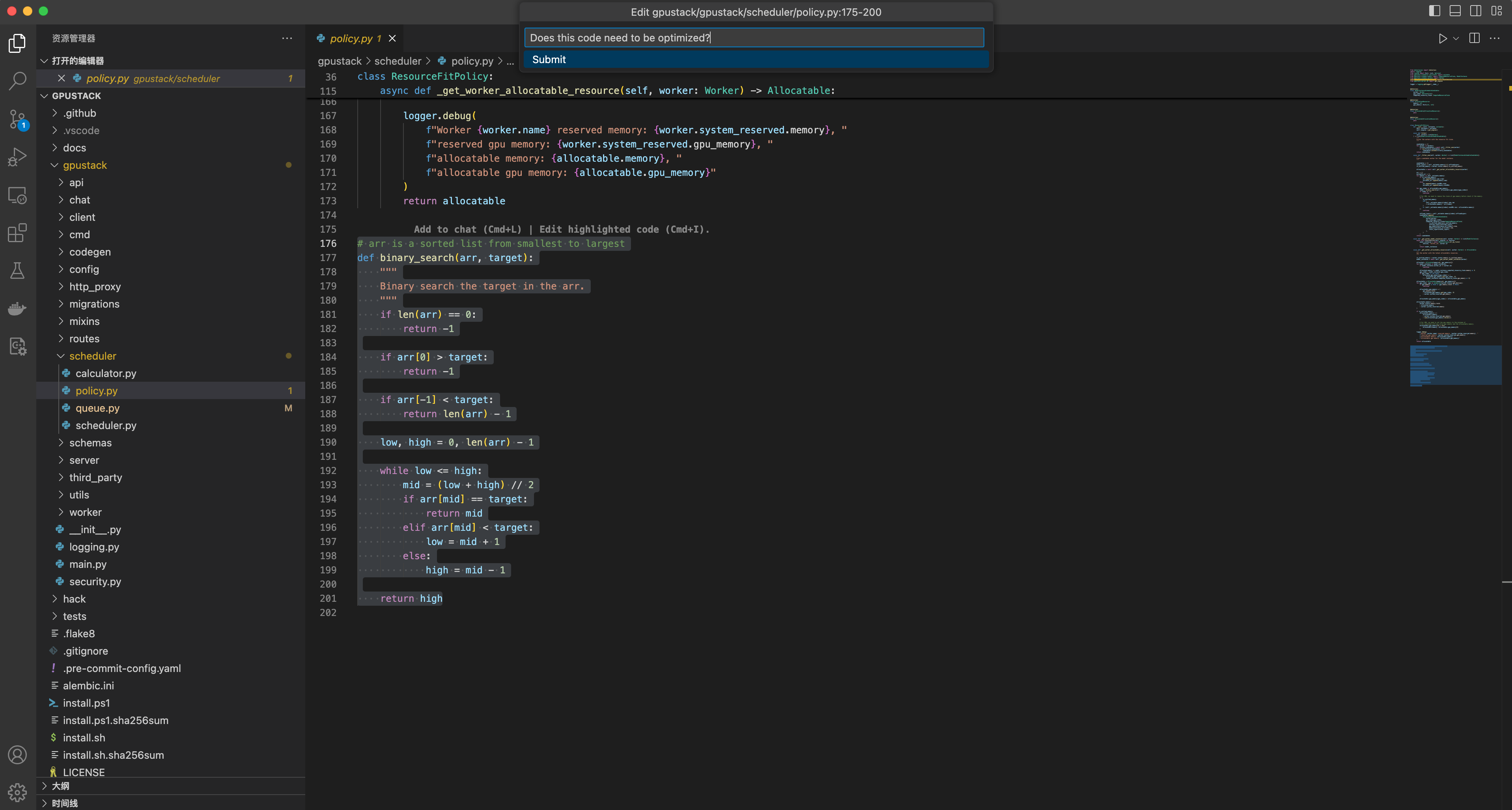

- Code Refactoring: Select the code, press

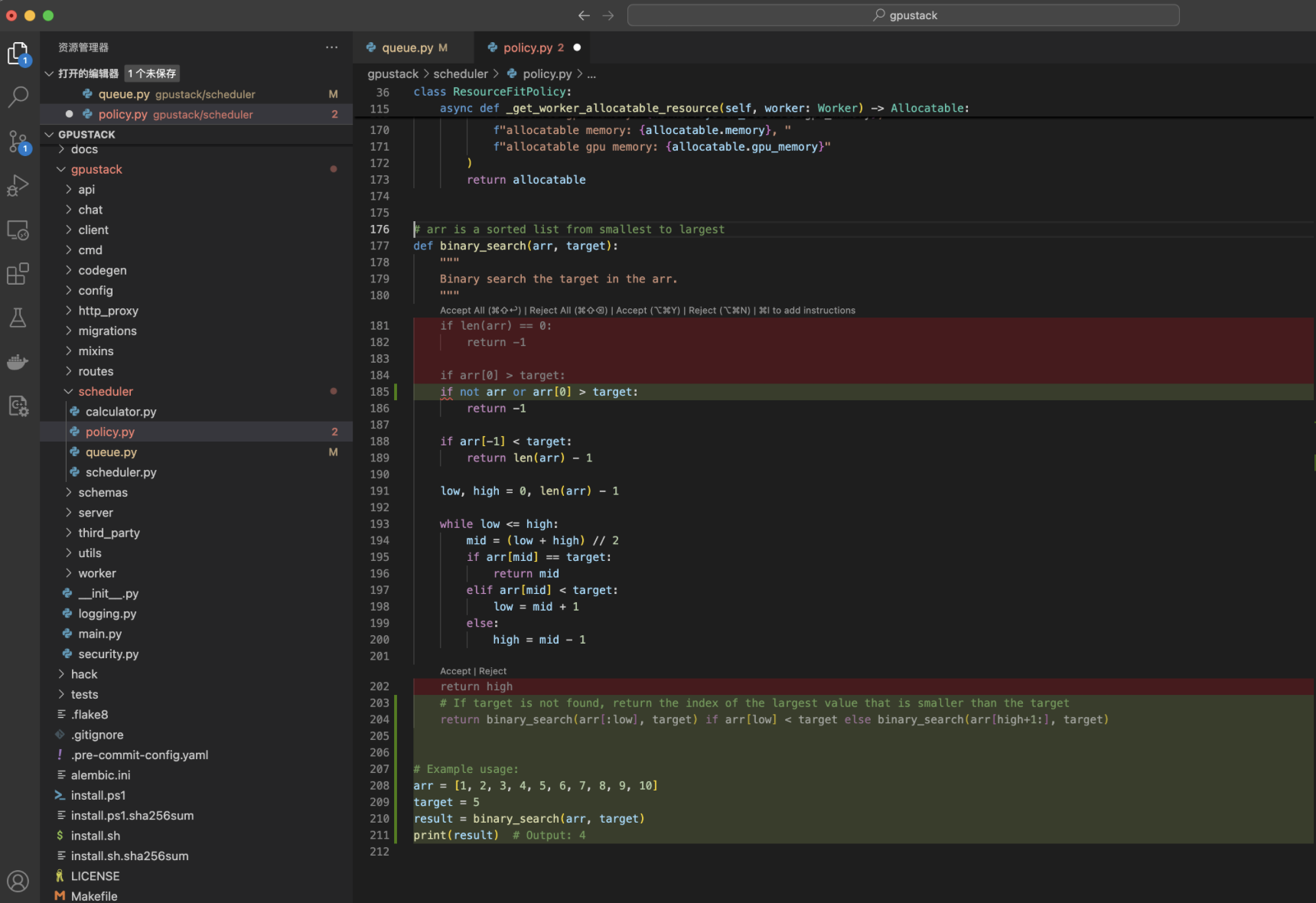

Cmd/Ctrl + I, and enter a prompt to let the local LLM attempt to optimize the code:

The LLM will provide suggestions, and you can decide whether to accept or reject them:

- Inquire About Code Implementation: You can use @Codebase to ask questions about the codebase, such as how a certain feature is implemented:

- Documentation Search: Use @Docs, select the documentation site you want to search, and ask your question to find the results you need:
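Codebase and documentation questions are where the embedding model configured earlier comes into play. This article does not describe Continue's actual indexing and retrieval pipeline, so the sketch below only illustrates the general idea of embedding-based retrieval: each snippet is represented as a vector, and the snippets whose vectors are closest to the question's vector are passed to the chat model. The file names and the tiny two-dimensional vectors are invented for illustration; real embeddings from a model such as nomic-embed-text have far more dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_snippets(query_vector, snippet_vectors):
    """Return snippet names ordered from most to least similar to the query."""
    scored = [
        (cosine_similarity(query_vector, vector), name)
        for name, vector in snippet_vectors.items()
    ]
    return [name for _, name in sorted(scored, reverse=True)]


if __name__ == "__main__":
    # Hypothetical toy embeddings, not output from a real model.
    snippets = {
        "auth.py": [1.0, 0.0],
        "db.py": [0.0, 1.0],
        "login.py": [0.9, 0.1],
    }
    question = [1.0, 0.0]  # pretend embedding of "how does login work?"
    print(rank_snippets(question, snippets))
```

In this toy example the two authentication-related files rank above the database file because their vectors point in nearly the same direction as the question's vector.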

For more information, please read the official Continue documentation: https://docs.continue.dev/how-to-use-continue

Conclusion

In this tutorial, we have shown how to use Continue + GPUStack to build a free, local GitHub Copilot alternative that gives developers AI pair-programming capabilities at no cost.

GPUStack provides a standard OpenAI-compatible API, which can be quickly and smoothly integrated with various LLM ecosystem components. Wanna give it a go? Try integrating your tools, frameworks, or software with GPUStack now and share the results with us!

If you encounter any issues while integrating GPUStack with third-party tools, feel free to join the GPUStack Discord community and get support from our engineers.
