Performance Analysis of JSON, Buffer / Custom Binary Protocol, Protobuf, and MessagePack for Websockets

hjkl

This article examines and compares four data serialization formats for WebSocket messages: JSON, Buffers (a custom binary protocol), Protobuf, and MessagePack, and shows how to implement each. A performance benchmark follows at the end.

JSON

JSON is the most common way to send messages: you encode the data as a string so it can be passed through a WebSocket message, then parse it back on the receiving side.

ws.send(JSON.stringify({ greeting: "Hello World" }));

ws.on("message", (message) => {
  // parse the received JSON text back into an object
  const data = JSON.parse(message);
  console.log(data);
});
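Incoming payloads are not always well-formed JSON, and depending on the WebSocket library they may arrive as a string or as binary data. The following is a minimal sketch of a defensive parser; `parseJsonMessage` is a hypothetical helper name, not part of any library, and the result shape (`ok`, `data`, `error`) is an assumption made for illustration.

```javascript
const decoder = new TextDecoder();

// Hypothetical helper: normalizes a WebSocket payload to text, then parses it.
function parseJsonMessage(raw) {
  let text;
  if (typeof raw === "string") {
    text = raw;
  } else if (raw instanceof ArrayBuffer || ArrayBuffer.isView(raw)) {
    // Binary payloads (ArrayBuffer, Uint8Array, Node Buffer) are decoded as UTF-8.
    text = decoder.decode(raw);
  } else {
    return { ok: false, error: new TypeError("unsupported payload type") };
  }
  try {
    return { ok: true, data: JSON.parse(text) };
  } catch (error) {
    // Malformed JSON is reported instead of crashing the message handler.
    return { ok: false, error };
  }
}

const payload = JSON.stringify({ greeting: "Hello World" });
console.log(parseJsonMessage(payload).data.greeting);
console.log(parseJsonMessage(new TextEncoder().encode(payload)).ok);
console.log(parseJsonMessage("{not json").ok);
```

Returning a result object rather than throwing keeps one bad message from taking down the handler; whether that fits depends on how your app treats invalid input.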

Custom Binary Protocol

A custom binary protocol is a lightweight, hand-written scheme for serializing and deserializing data. It is commonly used when speed and low latency are crucial, for example in online multiplayer games, or when you simply want to optimize your app. Building one means working directly with buffers and binary data, which can be hard to implement at first but should pose no problem if you are familiar with them.

const encoder = new TextEncoder();
const decoder = new TextDecoder();

function binary(text, num) {
  // array buffers cannot store strings, so you must first encode
  // the text into an array of byte values
  // e.g. "Hello World!" -> [72, 101, 108, 108, 111, 32, 87, 111, 114, 108, 100, 33]
  const messageBytes = encoder.encode(text);

  // when creating an array buffer, you must predetermine its size:
  // 1 byte for the number plus the encoded text
  const buffer = new ArrayBuffer(1 + messageBytes.length);

  // create a view to interact with the buffer
  const view = new DataView(buffer);
  // byte 0 holds the number (it must fit in 0-255)
  view.setUint8(0, num);

  // the remaining bytes hold the encoded text
  const byteArray = new Uint8Array(buffer);
  byteArray.set(messageBytes, 1);

  return buffer;
}

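ws.on("open", () => {
  const msg = binary("Hello World!", 123);
  ws.send(msg);
});

ws.on("message", (message) => {
  // assuming message arrives as a Node Buffer: it may be a slice of a larger
  // pooled ArrayBuffer, so respect its byteOffset and byteLength
  const view = new DataView(message.buffer, message.byteOffset, message.byteLength);
  const num = view.getUint8(0);

  const textLength = message.byteLength - 1;
  const textBytes = new Uint8Array(message.buffer, message.byteOffset + 1, textLength);
  const text = decoder.decode(textBytes);

  console.log(text, num);
});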

The binary() function serializes two properties, a text string and a number, into an ArrayBuffer.
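To check the layout without a network connection, the encoder and decoder can be written as a matched pair and run as a round trip. This is a minimal sketch under the same assumed layout as above (one unsigned byte for the number, followed by the UTF-8 text); `encodeMessage` and `decodeMessage` are hypothetical names chosen for illustration.

```javascript
const encoder = new TextEncoder();
const decoder = new TextDecoder();

// Assumed layout: [num: uint8][text: UTF-8 bytes]
function encodeMessage(text, num) {
  if (!Number.isInteger(num) || num < 0 || num > 255) {
    throw new RangeError("num must fit in one unsigned byte (0-255)");
  }
  const textBytes = encoder.encode(text);
  const bytes = new Uint8Array(1 + textBytes.length);
  bytes[0] = num;
  bytes.set(textBytes, 1);
  return bytes;
}

function decodeMessage(bytes) {
  // Accept either an ArrayBuffer or a typed-array view (such as a Node Buffer),
  // and honor the view's own offset and length within its backing buffer.
  const view = bytes instanceof ArrayBuffer
    ? new Uint8Array(bytes)
    : new Uint8Array(bytes.buffer, bytes.byteOffset, bytes.byteLength);
  return { num: view[0], text: decoder.decode(view.subarray(1)) };
}

const encoded = encodeMessage("Hello World!", 123);
console.log(encoded.byteLength); // 13
console.log(decodeMessage(encoded));
```

Because this layout has no length prefix, it only works while the text is the last field. A message with several strings would need a length (or a terminator) in front of each one.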

Protobuf

In this example I use protobuf.js, a JavaScript implementation of Protocol Buffers, and use reflection to generate the protobuf code at runtime. You can also generate the code statically. According to the protobuf.js wiki, static code loads faster but does not change encoding or decoding performance, so it makes no difference when sending WebSocket messages.

syntax = "proto3";

message TestMessage {

string text = 1;

uint32 num = 2;

}

import protobuf from "protobufjs";
import WebSocket from "ws"; // assumption: the ws package, since the handlers below use ws.on()

const ws = new WebSocket("ws://localhost:3000");
ws.binaryType = "arraybuffer";

protobuf.load("testmessage.proto", function (err, root) {
  if (err) throw err;
  if (root === undefined) return;

  const TestMessage = root.lookupType("TestMessage");

  ws.on("open", () => {
    const message = TestMessage.create({ text: "Hello World!", num: 12345 });
    // encode() returns a writer; finish() produces the encoded bytes
    const buffer = TestMessage.encode(message).finish();
    ws.send(buffer);
  });

  ws.on("message", (msg) => {
    // with binaryType "arraybuffer" the payload is an ArrayBuffer, so wrap it for decode()
    const buffer = new Uint8Array(msg);
    const data = TestMessage.decode(buffer);
    console.log(data.text);
    console.log(data.num);
  });
});
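To see what ends up on the wire, it can help to encode the same TestMessage by hand. The following is a rough sketch of the protobuf wire format for this one schema only, not a replacement for protobuf.js; `encodeVarint` and `encodeTestMessage` are hypothetical names. Unlike a real proto3 encoder, it always writes both fields, even when they hold default values.

```javascript
// Encodes an unsigned 32-bit integer as a varint:
// 7 bits per byte, least significant group first, high bit set on all but the last byte.
function encodeVarint(value) {
  const bytes = [];
  let v = value >>> 0;
  while (v > 0x7f) {
    bytes.push((v & 0x7f) | 0x80);
    v >>>= 7;
  }
  bytes.push(v);
  return bytes;
}

// Hand-rolled encoder for: message TestMessage { string text = 1; uint32 num = 2; }
function encodeTestMessage(text, num) {
  const textBytes = new TextEncoder().encode(text);
  return Uint8Array.from([
    0x0a, // tag for field 1, wire type 2 (length-delimited): (1 << 3) | 2
    ...encodeVarint(textBytes.length),
    ...textBytes,
    0x10, // tag for field 2, wire type 0 (varint): (2 << 3) | 0
    ...encodeVarint(num),
  ]);
}

const wire = encodeTestMessage("Hello World!", 12345);
console.log(wire.byteLength); // 17
```

Field names never appear in the output, only one-byte tags, which is a large part of why Protobuf messages are smaller than the equivalent JSON.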

MessagePack

import { encode, decode } from "@msgpack/msgpack";

ws.binaryType = "arraybuffer";

ws.on("open", () => {
  // encode() takes a plain object and returns a Uint8Array
  const msg = encode({ text: "Hello World!", num: 123 });
  ws.send(msg);
});

ws.on("message", (msg) => {
  const data = decode(msg);
  console.log(data);
});

Performance benchmark

To compare the performance of each serialization format, I wrote a benchmark that measures performance when sending data over WebSockets.

I split the benchmark into three groups:

Small data input

Medium data input

Big data input

This is to measure how each serialization format performs across different data sizes. For each group I recorded the serialization time, the deserialization time, and the total time. I ran each benchmark 5 times per group and averaged the results to make the measurements more reliable.

The benchmark sends 100,000 WebSocket messages per run. The code is written in TypeScript and runs on Bun.

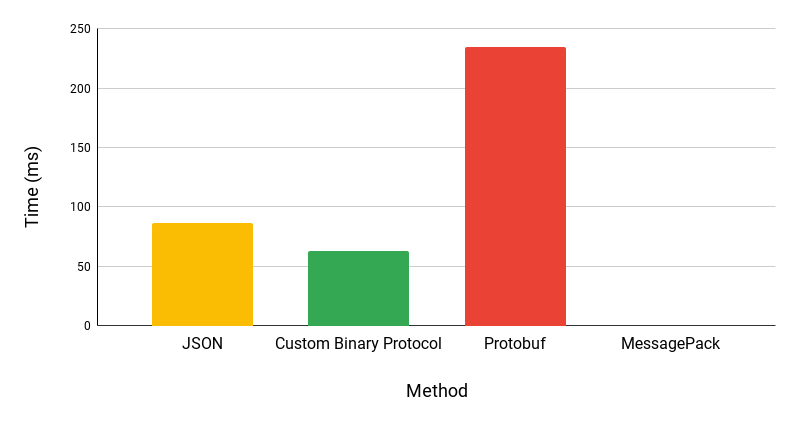

These benchmarks record the time to finish in milliseconds, so smaller is faster.
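The general shape of such a measurement can be sketched as follows. This is not the actual benchmark code (that lives in the repository linked at the end); it is a simplified illustration that times only JSON serialization and deserialization in memory, with no WebSocket involved. `timeMs` and `averageMs` are hypothetical helper names.

```javascript
// Times `fn` over `iterations` calls and returns the elapsed milliseconds.
function timeMs(fn, iterations) {
  const start = performance.now();
  for (let i = 0; i < iterations; i++) fn();
  return performance.now() - start;
}

// Repeats the timed run `runs` times and returns the arithmetic mean.
function averageMs(fn, iterations, runs) {
  let total = 0;
  for (let r = 0; r < runs; r++) total += timeMs(fn, iterations);
  return total / runs;
}

const sample = { text: "Hello World!", num: 123 };
const serialized = JSON.stringify(sample);

// 100,000 iterations, averaged over 5 runs, mirroring the setup described above.
const serializeMs = averageMs(() => JSON.stringify(sample), 100_000, 5);
const deserializeMs = averageMs(() => JSON.parse(serialized), 100_000, 5);
console.log({ serializeMs, deserializeMs });
```

Micro-benchmarks like this are sensitive to JIT warm-up and to the engine optimizing away unused results, so the absolute numbers should be treated as rough indications only.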

Small data benchmark

// small data input:

{text: "Hello World!", num: 123}

Byte size of message in each serialization format

| Method | Size (bytes) |
| --- | --- |
| JSON | 33 |
| Custom Binary Protocol | 13 |
| Protobuf | 17 |
| MessagePack | 24 |
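The JSON and MessagePack figures in the table above can be reproduced by hand, assuming the input is `{text: "Hello World!", num: 123}` with `num` as a number. The sketch below is a deliberately tiny MessagePack encoder that handles only what this message needs (fixmap, fixstr, and positive fixint); `encodeTinyMsgpack` is a hypothetical name, and a real application would use a full library instead.

```javascript
// Minimal MessagePack encoder for small, flat objects only:
// up to 15 keys, strings up to 31 UTF-8 bytes, integers 0-127.
function encodeTinyMsgpack(obj) {
  const utf8 = new TextEncoder();
  const out = [];
  const writeStr = (s) => {
    const bytes = utf8.encode(s);
    if (bytes.length > 31) throw new RangeError("string too long for fixstr");
    out.push(0xa0 | bytes.length, ...bytes); // fixstr: 101xxxxx header + bytes
  };
  const entries = Object.entries(obj);
  if (entries.length > 15) throw new RangeError("too many keys for fixmap");
  out.push(0x80 | entries.length); // fixmap: 1000xxxx header
  for (const [key, value] of entries) {
    writeStr(key); // keys are stored in full, unlike Protobuf field tags
    if (typeof value === "string") {
      writeStr(value);
    } else if (Number.isInteger(value) && value >= 0 && value <= 127) {
      out.push(value); // positive fixint: the value is its own single byte
    } else {
      throw new TypeError("unsupported value in this sketch");
    }
  }
  return Uint8Array.from(out);
}

const small = { text: "Hello World!", num: 123 };
console.log(encodeTinyMsgpack(small).byteLength); // 24
console.log(new TextEncoder().encode(JSON.stringify(small)).byteLength); // 33
```

The breakdown is 1 byte for the map header, 5 for the key "text", 13 for the string value, 4 for the key "num", and 1 for the number. MessagePack drops JSON's quotes, colons, and braces but still carries the key names, which is why it lands between JSON and the schema-based formats.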

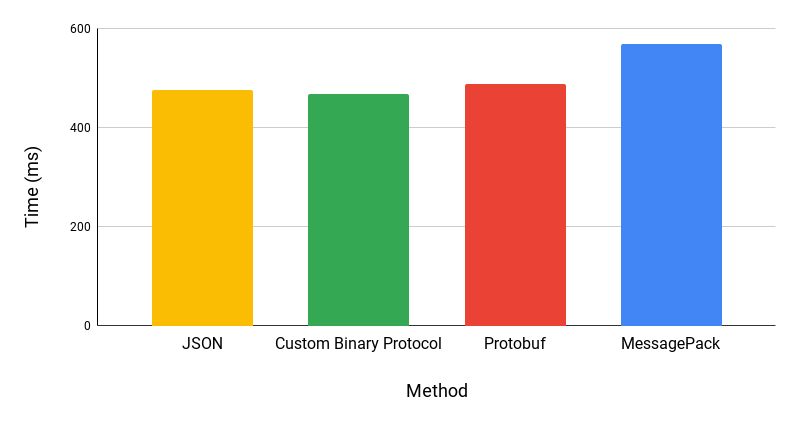

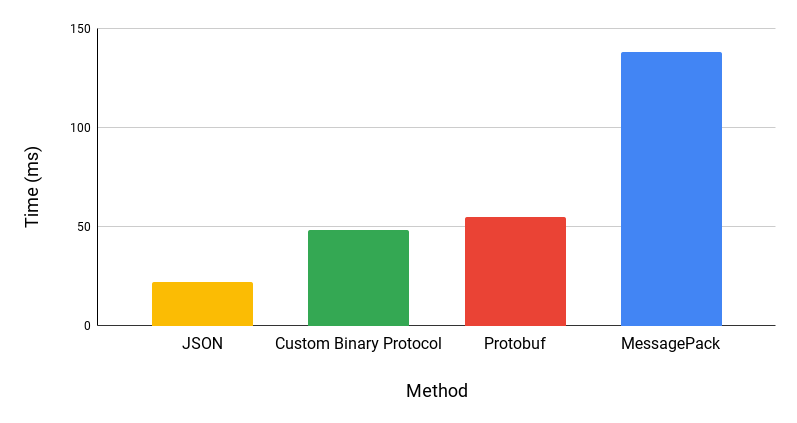

Total time (ms)

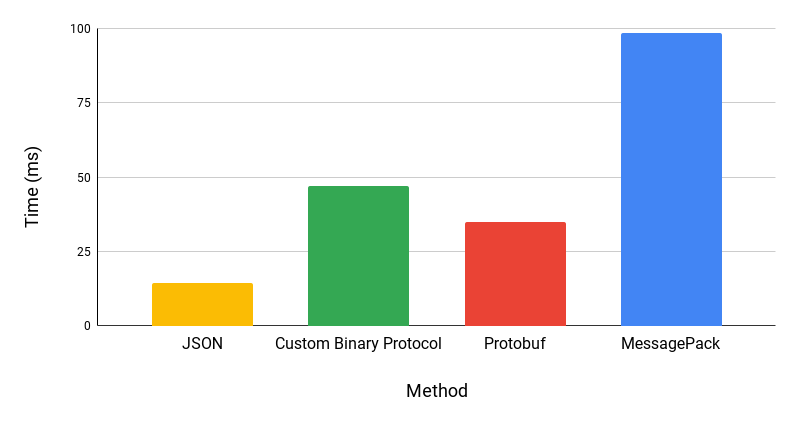

Serialization time (ms)

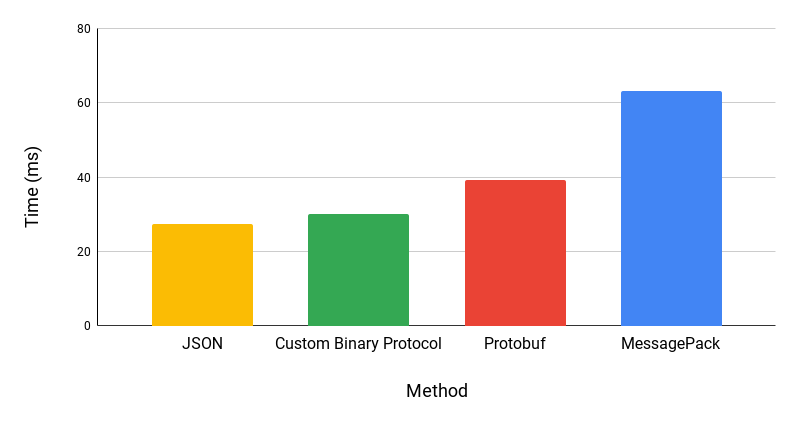

Deserialization time (ms)

Medium data benchmark

// medium data input:

{

text: "Hello World!",

text2: "Lorem ipsum dolor sit amet, consectetur adipiscing.",

num: 12345,

decimal: 3.1415926

}

Byte size of message in each serialization format

| Method | Size (bytes) |
| --- | --- |
| JSON | 117 |
| Custom Binary Protocol | 70 |
| Protobuf | 75 |
| MessagePack | 102 |

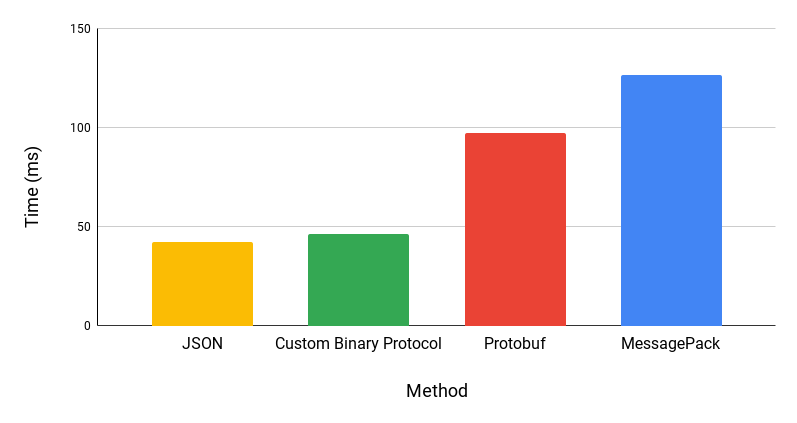

Total time (ms)

Serialization (ms)

Deserialization (ms)

Big data benchmark

// big data input:

{

text: "Hello World!",

text2: "Lorem ipsum dolor sit amet, consectetur adipiscing elit. Maecenas rutrum odio dolor, a egestas dui bibendum at.",

text3: "ABCDEFGHIJKLMNOPQRSTUVWXYZ",

text4: "The quick brown fox jumps over the lazy dog.",

num: 123456789,

decimal: 3.141592653589793,

num2: -123456789,

decimal2: -3.141592653589793

}

Byte size of message in each serialization format

| Method | Size (bytes) |
| --- | --- |
| JSON | 329 |
| Custom Binary Protocol | 220 |
| Protobuf | 229 |
| MessagePack | 277 |

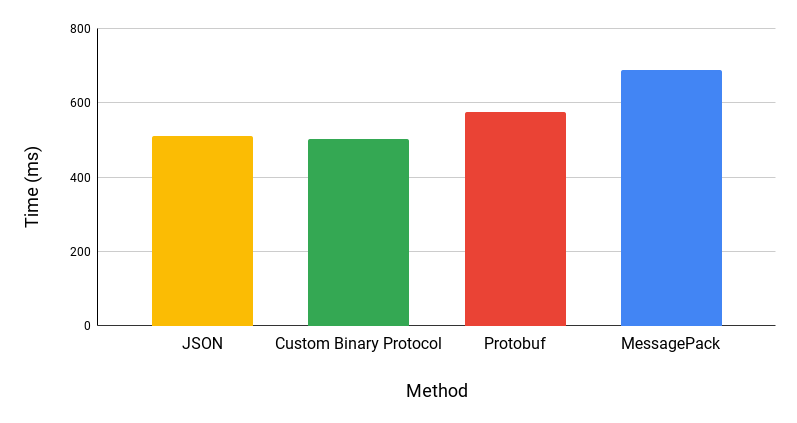

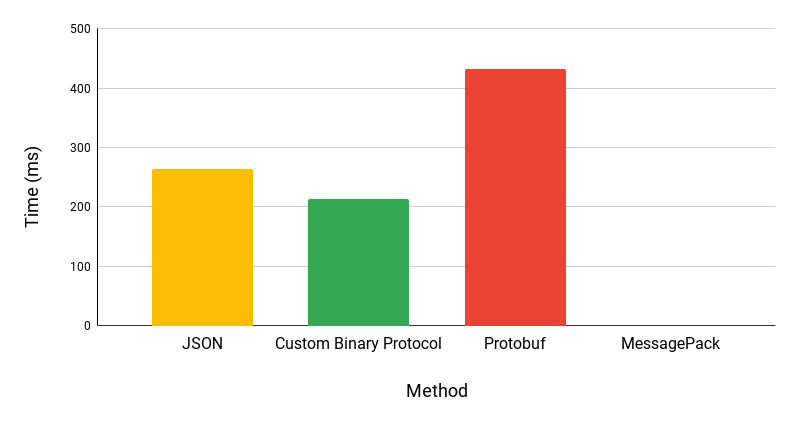

Total time (ms)

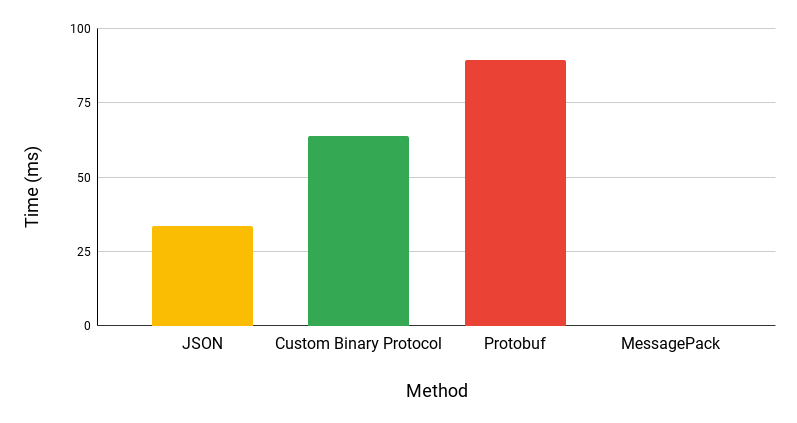

Serialization (ms)

Deserialization (ms)

In this benchmark, MessagePack suddenly stopped working after around 6,600 messages had been sent.

Analysis of benchmarks

In all of the benchmarks, the custom binary protocol has the fastest total time and the smallest, most efficient byte size per serialized message. However, the performance differences are significant.

Surprisingly, JSON's serialization time is significantly faster than that of the custom binary protocol. This is probably because JSON.stringify() is implemented in native code inside the JavaScript engine (V8 in Node, JavaScriptCore in Bun) rather than in JavaScript. Results could also differ on Node, because JSON.stringify() in Bun is reportedly about 3.5x faster than in Node.

MessagePack could potentially be faster: this benchmark used the official JavaScript MessagePack implementation, and there are other, potentially faster implementations such as msgpackr.

Thanks for reading!

Benchmark (written in typescript): https://github.com/nate10j/buffer-vs-json-websocket-benchmark.git

See the results here in Google Sheets.
