Day 12 of 100 Days: Deep Dive into Docker Containers

Munilakshmi G J

🐳 Why Are Containers So Lightweight?

Unlike VMs, which each run a full guest operating system, containers share the host OS kernel. That is what makes them lightweight: there is no separate operating system to boot. Containers also package only the libraries and dependencies the application needs, which reduces overhead. The result is faster startup, lower memory consumption, and quicker scaling than VMs.
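One quick way to see the shared kernel for yourself is to compare the kernel release on the host with the one reported inside a container. This is a minimal sketch that assumes a Linux host, a running Docker daemon, and the public `alpine` image (my choice for illustration, not something this post depends on). On Docker Desktop for macOS or Windows, the container reports the kernel of Docker's Linux VM rather than the host's.

```shell
#!/bin/sh
# Kernel release as seen by the host.
host_kernel=$(uname -r)
echo "host kernel:      $host_kernel"

# Only try the container half when the docker CLI exists and the daemon answers.
if command -v docker >/dev/null 2>&1 && docker version >/dev/null 2>&1; then
    # --rm deletes the container as soon as the command exits.
    # "alpine" is an assumed image; any small Linux image should work.
    container_kernel=$(docker run --rm alpine uname -r)
    echo "container kernel: $container_kernel"
else
    echo "Docker daemon not reachable; skipping the container half."
fi
```

On a Linux host the two lines should show the same release, because the container has no kernel of its own.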

📂 Files and Folders in Containers

Containers have their own isolated file systems that include:

Application Files: Specific code, libraries, and dependencies for the app.

Config Files: Environment configurations.

User-defined Data: This may vary based on the app's needs.

Containers can also use specific files and folders from the host OS (through bind mounts or volumes), so data can persist after a container is removed. This flexibility lets you control what each container needs and keeps your applications portable.
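Sharing a host folder with a container can be sketched with a bind mount. The example below assumes a running Docker daemon and the public `alpine` image; the `/data` path inside the container and the `note.txt` file name are made up for illustration.

```shell
#!/bin/sh
# Create a scratch folder on the host and put one file in it.
workdir=$(mktemp -d)
echo "written on the host" > "$workdir/note.txt"

if command -v docker >/dev/null 2>&1 && docker version >/dev/null 2>&1; then
    # -v host_path:container_path:ro mounts the host folder read-only at /data.
    # /data and the alpine image are assumptions for this sketch.
    docker run --rm -v "$workdir:/data:ro" alpine cat /data/note.txt
else
    echo "Docker daemon not reachable; the file stays at $workdir/note.txt"
fi

# Remove the scratch folder when you are done:
# rm -rf "$workdir"
```

The container reads the file straight from the host folder, and the file is still there after the container is gone.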

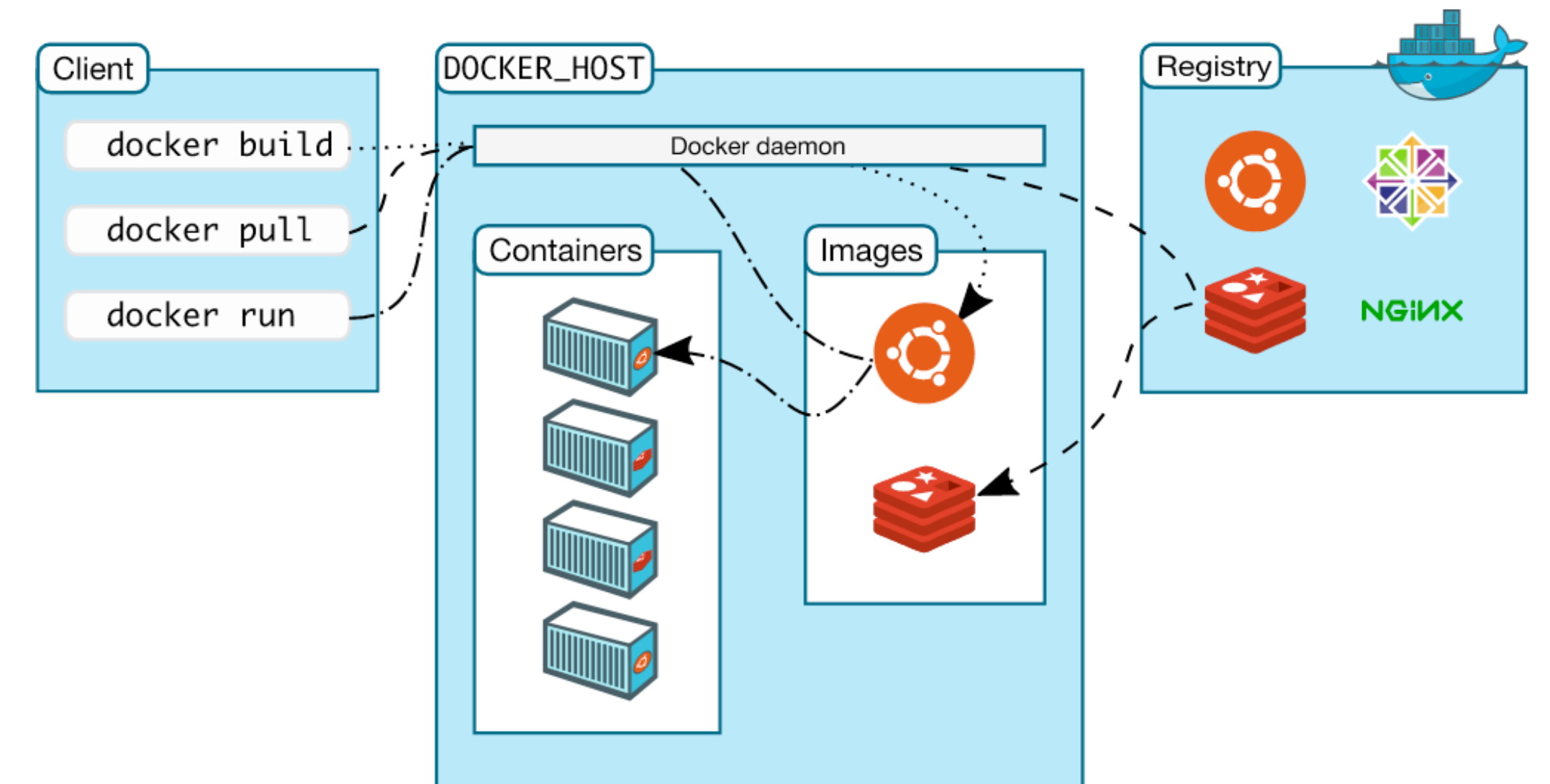

🏗 Docker Architecture

Docker's architecture is based on a client-server model. Here’s how it works:

Docker Client: Sends commands to the Docker daemon.

Docker Daemon: Builds, runs, and manages containers.

Docker Registry (Docker Hub): A central place to store and share container images.

Docker Engine: The packaged product that bundles the daemon (dockerd), its REST API, and the docker command-line client.

The Docker daemon handles container creation, monitoring, and deletion, while the Docker client provides an interface to interact with these containers.
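The client/daemon split is easy to observe from a terminal, because the docker CLI can be installed and working while the daemon is stopped. The sketch below is one rough way to tell the cases apart; the `docker_status` variable and its three labels are hypothetical names for this example.

```shell
#!/bin/sh
if ! command -v docker >/dev/null 2>&1; then
    # The docker CLI (the client) is not installed at all.
    docker_status="client missing"
elif docker version >/dev/null 2>&1; then
    # "docker version" succeeds only when the client can reach the daemon.
    docker_status="client and daemon OK"
    docker version --format 'client {{.Client.Version}}, daemon {{.Server.Version}}'
else
    # The client runs, but the daemon did not answer.
    docker_status="client only, daemon unreachable"
fi

echo "$docker_status"
```

Running plain `docker version` shows the same split as separate Client and Server sections.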

🌍 Docker Hub

Docker Hub is a public registry for Docker images, allowing developers to share pre-configured environments or application setups. Public images can be pulled without an account. To upload your own images you need a Docker Hub account, which lets you push them for easy sharing.

🚀 Practical Section: Docker Commands

Let’s go through some basic commands that will help you navigate Docker:

List running containers:

docker ps

List all containers (including stopped):

docker ps -a

Start a container:

docker start <container-id or name>

Display Docker information:

docker info

Stop a container:

docker stop <container-id or name>

Example Exercise: Run through each command above to get hands-on practice. This will give you a clear view of Docker's functionality.
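One way to run the exercise end to end is to create a throwaway container and walk it through each state. This is only a sketch: the container name `day12-demo`, the `alpine` image, and the `sleep 300` command are assumptions picked so there is something to start and stop.

```shell
#!/bin/sh
name=day12-demo   # hypothetical container name for this exercise

if command -v docker >/dev/null 2>&1 && docker version >/dev/null 2>&1; then
    docker rm -f "$name" >/dev/null 2>&1            # clear any leftover from an earlier run
    docker run -d --name "$name" alpine sleep 300   # create and start in the background
    docker ps --filter "name=$name"                 # shows the container as running
    docker stop -t 1 "$name"                        # stop it, waiting at most 1 second
    docker ps --filter "name=$name"                 # no longer listed
    docker ps -a --filter "name=$name"              # still listed, now with an Exited status
    docker start "$name"                            # start the same container again
    docker rm -f "$name"                            # clean up
else
    echo "Docker daemon not reachable; nothing to run."
fi
```

Comparing the `docker ps` and `docker ps -a` output after the stop shows the difference between the two listings.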

🛠 Docker Installed and Running!

Once Docker is installed, you can check if everything is running smoothly with the command:

docker run hello-world

If all is well, you’ll see:

Hello from Docker!

This message shows that your installation appears to be working correctly.

📝 First Steps: Building and Running Your Own Docker Image

Clone the Docker Examples Repository

We’ll use a pre-configured GitHub repository for Docker beginners. Clone it and move into its examples folder:

git clone https://github.com/iam-veeramalla/Docker-Zero-to-Hero

cd Docker-Zero-to-Hero/examples

Note: I've customized this using my own Docker account credentials; replace muni**** in the commands below with your own Docker Hub username.

Log in to Docker

Log in to Docker Hub so you can push images:

docker login

Build Your First Docker Image

Run this command from the directory that contains the Dockerfile to create an image:

docker build -t muni****/my-first-docker-image:latest .

Output Example:

Successfully built [image-id]

Successfully tagged muni****/my-first-docker-image:latest

Run Your Docker Container

Start your container to see it in action:

docker run -it muni****/my-first-docker-image

Output:

Hello World

Push Your Image to Docker Hub

Finally, upload your image to Docker Hub:

docker push muni****/my-first-docker-image

Output:

Using default tag: latest

The push refers to repository [docker.io/muni****/my-first-docker-image]

latest: digest: sha256:... size: 1157
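The "Using default tag: latest" line explains why the run and push commands work without a tag: when an image name has no tag, Docker fills in `latest`. The helper below is a hypothetical illustration of that rule in plain shell, not Docker's own code. It only looks at the part after the last slash, so a registry address with a port (such as `localhost:5000/app`) is not mistaken for a tag.

```shell
#!/bin/sh
# Hypothetical helper: print an image reference with ":latest" added
# when no tag was given.
with_default_tag() {
    ref=$1
    last=${ref##*/}   # part after the final slash, e.g. "my-first-docker-image:v1"
    case $last in
        *:*) printf '%s\n' "$ref" ;;            # a tag is already present
        *)   printf '%s\n' "${ref}:latest" ;;   # no tag, so fall back to latest
    esac
}

with_default_tag "muni****/my-first-docker-image"
with_default_tag "muni****/my-first-docker-image:v1"
```

The first call prints the name with `:latest` appended, and the second prints the name unchanged.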

Final Thoughts

By now, you’ve built your first Docker image, created a container, and pushed the image to Docker Hub—making it ready for the world to use. Docker’s lightweight, flexible approach makes it essential for DevOps, enabling easy scaling, resource efficiency, and faster deployments.

Congratulations on a fantastic start with Docker! 🎉
