Qwen2.5 Chat is here

Adith Garg

Qwen2.5-Max: Exploring the Intelligence of Large-scale MoE Model

Qwen2.5-Max is a large-scale Mixture-of-Experts (MoE) model that has been pretrained on over 20 trillion tokens and further post-trained with curated Supervised Fine-Tuning (SFT) and Reinforcement Learning from Human Feedback (RLHF) methodologies.

Try Qwen Chat here: https://chat.qwenlm.ai/

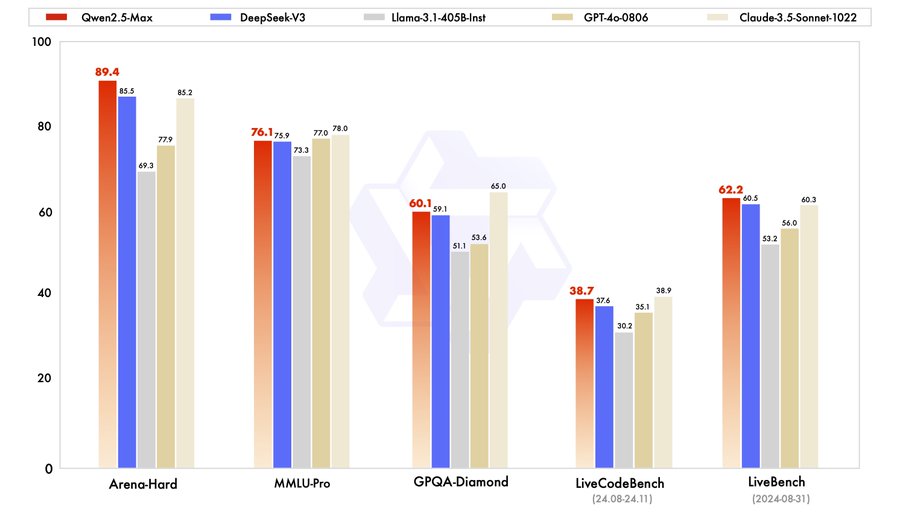

Qwen2.5-Max is a large MoE LLM pretrained on massive data and post-trained with curated SFT and RLHF recipes. It performs competitively against top-tier models and outperforms DeepSeek V3 on benchmarks such as Arena Hard, LiveBench, LiveCodeBench, and GPQA-Diamond.

📖 Blog: https://qwenlm.github.io/blog/qwen2.5-max/

💬 Qwen Chat: https://chat.qwenlm.ai (choose Qwen2.5-Max as the model)

⚙️ API: https://alibabacloud.com/help/en/model-studio/getting-started/first-api-call-to-qwen?spm=a2c63.p38356.help-menu-2400256.d_0_1_0.1f6574a72ddbKE (check the code snippet in the blog)

💻 HF Demo: https://huggingface.co/spaces/Qwen/Qwen2.5-Max-Demo
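
For anyone who wants to try the API before opening the blog snippet, here is a minimal sketch of what a call might look like. It assumes the service exposes an OpenAI-style chat completions endpoint; the base URL, the model name, and the `DASHSCOPE_API_KEY` environment variable are all assumptions that this post does not confirm, so check them against the API link above. The helper names `build_chat_request` and `ask` are made up for illustration.

```python
import json
import os
import urllib.request

# Assumptions (not stated in this post): the endpoint URL and model name
# below are placeholders. Confirm both in the API documentation linked above.
BASE_URL = "https://dashscope-intl.aliyuncs.com/compatible-mode/v1"
MODEL = "qwen-max-2025-01-25"


def build_chat_request(prompt, api_key, base_url=BASE_URL, model=MODEL):
    """Build (without sending) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [
            {"role": "system", "content": "You are a helpful assistant."},
            {"role": "user", "content": prompt},
        ],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(prompt):
    """Send the request and return the assistant's reply text."""
    api_key = os.environ["DASHSCOPE_API_KEY"]  # hypothetical env var name
    with urllib.request.urlopen(build_chat_request(prompt, api_key)) as resp:
        body = json.load(resp)
    # Assumes the response follows the OpenAI chat completions shape.
    return body["choices"][0]["message"]["content"]
```

With a valid key exported, `print(ask("Which number is larger, 9.11 or 9.8?"))` would print the model's reply. Keeping the request builder separate from the network call makes it easy to inspect the payload before sending anything.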
