Experimentation & Data Quality: The not so obvious way

Rutvik Joshi

Rutvik Joshi

This article is based on my learnings and the content from Zach Wilson’s Data Engineering YouTube bootcamp.

TLDR;

This article explores the importance of combining data metrics with storytelling to drive strategic decisions. It emphasizes the need for experimentation and data quality checks to ensure reliable insights. The piece also highlights the significance of data validation and the Airbnb MIDAS process as a gold standard for maintaining high data quality in rapidly growing environments.

Just plain old numbers with some exaggerated stories

Data without context is just “Boring”. Without a story or narrative, data is vanilla numbers and text on screen creating no impact and/or sensible derivation of actions. For each organization cares about data differently and hence the action taken on it, is a remark for the importance it holds for the org. Often founders are the ones who set the culture and your work relationship with data.

As someone who works with it constantly, we do recognize that numbers aka metrics provide the essential benchmarks and indicators that guide strategic decisions. However, it is through stories that we can effectively communicate the value of these numbers, transforming them into narratives that resonate with the drivers of change.

This combination of metrics and storytelling hence becomes crucial for understanding, driving engagement, and, enabling informed decision-making.

Metrics often = Money

Now that you have the plain old numbers, what do you do with it?

Option A: The obvious

Option B: The not so obvious

Option C: Who cares?

Option D: Data is everything for me

I hope you choose the most practical option. If not maybe experiment it out with the following steps :)

1. Define the Problem or Question - Set the Research and Hypothesis

2. Design the Experiment - Set Test vs. Control groups

3. Collect & Analyze Data

4. Interpret Results

5. Communicate Findings

6. Iterate or Refine

P.S: Keep a note on leading indicators that predict future outcomes and help in proactive decision-making, and lagging indicators that reflect past performance, providing insights into the effectiveness of actions taken.

To help refine your selection further, take special care about the quality of your data and the verifications performed on it.

## Data Quality = Trust (of your skills) + Impact (value you deliver)

I’m pretty sure “Implementing effective data quality checks crucial for ensuring reliable and accurate data pipelines” is one of the job requirements in a job posting and hence a criteria on which you will be assessed in interviews. Hence, the effectiveness of data-driven systems and decision-making processes is dependent on “You”.

Sharing a few quality checks as a refresher of the concepts:

| Quality Check | Quality Check |

| --- | --- |

| Accuracy | Consistency |

| Completeness | Format |

| Range | Uniqueness |

These checks were covered in the course based on Dimensional and Fact Modeling:

| Dimensional Modeling | Fact Modeling |

| --- | --- |

| is it growing or not? | is it season based? |

| is there a sharp rise? (if so, check) [Dim models have small % diff] | Fair for them to grow & shrink |

| Complex relationship? (better clear it out in both lives) | Have quality level & duplicate checks |

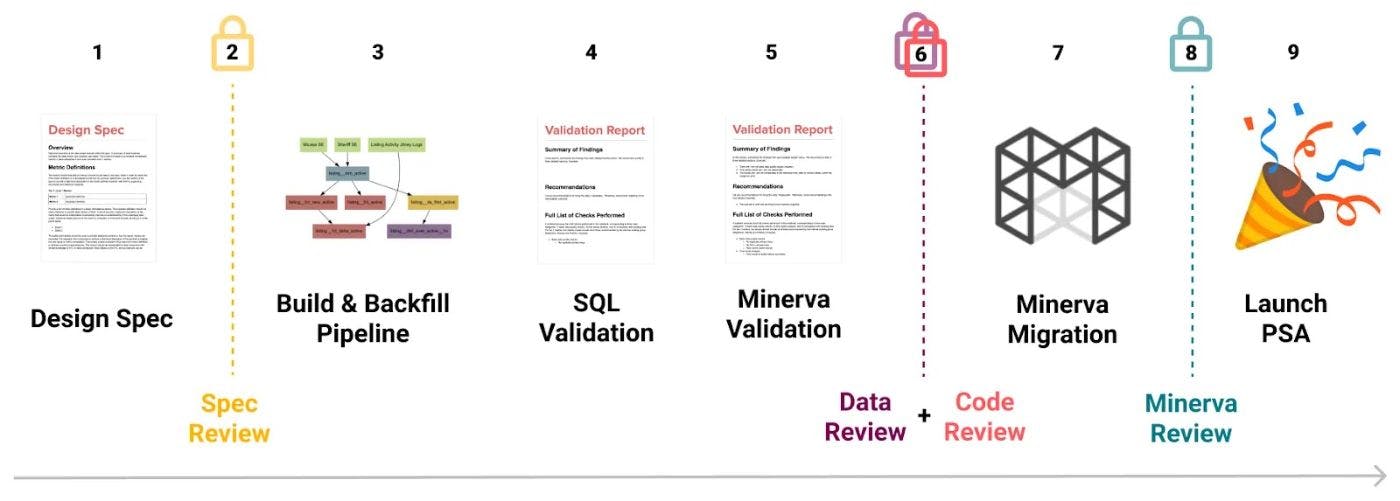

### Airbnb MIDAS Process aka the Gold standard in Data Quality

A TLDR;

It addresses the challenge of maintaining data quality amid rapid growth. The pipeline consists of four key reviews:

Spec - Defines the data model, metrics, and quality expectations.

Data - Validates data quality, completeness, and consistency against defined specs.

Code - Examines ETL code for efficiency, correctness, and adherence to best practices.

Minerva - Verifies metric definitions and ensures alignment with business objectives.

I hope you choose the most practical option. If not maybe experiment it out with the following steps :)

1. Define the Problem or Question - Set the Research and Hypothesis

2. Design the Experiment - Set Test vs. Control groups

3. Collect & Analyze Data

4. Interpret Results

5. Communicate Findings

6. Iterate or Refine

P.S: Keep a note on leading indicators that predict future outcomes and help in proactive decision-making, and lagging indicators that reflect past performance, providing insights into the effectiveness of actions taken.

To help refine your selection further, take special care about the quality of your data and the verifications performed on it.

## Data Quality = Trust (of your skills) + Impact (value you deliver)

I’m pretty sure “Implementing effective data quality checks crucial for ensuring reliable and accurate data pipelines” is one of the job requirements in a job posting and hence a criteria on which you will be assessed in interviews. Hence, the effectiveness of data-driven systems and decision-making processes is dependent on “You”.

Sharing a few quality checks as a refresher of the concepts:

| Quality Check | Quality Check |

| --- | --- |

| Accuracy | Consistency |

| Completeness | Format |

| Range | Uniqueness |

These checks were covered in the course based on Dimensional and Fact Modeling:

| Dimensional Modeling | Fact Modeling |

| --- | --- |

| is it growing or not? | is it season based? |

| is there a sharp rise? (if so, check) [Dim models have small % diff] | Fair for them to grow & shrink |

| Complex relationship? (better clear it out in both lives) | Have quality level & duplicate checks |

### Airbnb MIDAS Process aka the Gold standard in Data Quality

A TLDR;

It addresses the challenge of maintaining data quality amid rapid growth. The pipeline consists of four key reviews:

Spec - Defines the data model, metrics, and quality expectations.

Data - Validates data quality, completeness, and consistency against defined specs.

Code - Examines ETL code for efficiency, correctness, and adherence to best practices.

Minerva - Verifies metric definitions and ensures alignment with business objectives.

Brilliant engineers have already written about it. I won’t repeat them and hence redirect you to 2 good reads:

Data Validation and the concerns of ugly data

We covered about Metrics + Storytelling, the experimentation approach with data along the guidelines of quality checks and the gold standard of all data quality score. Lastly, what use is the story or numbers if the data itself is inaccurate or unreliable?

Validation ensures the information being used for analysis and decision-making is both accurate and trustworthy. Without which, data can become "ugly," it is riddled with errors, inconsistencies, or incomplete entries leading to misguided conclusions and poor business directions.

Here is summed up approach for best practices and highlighted concerns with ugly data, they are independent of each other:

| Validation Best Practices | Ugly data concern |

| Use Backfilling approach (~approx. a month of data) | Logging + Snapshotting errors |

| Check your assumptions | Production data quality |

| Produce a validation report of: duplicates, nulls, violation of business rules, time series/volumetric data | Schema evolution |

| Pipeline mistakes | |

| Not enough validation |

Questions to ask

Use the below questions as a reference, the next time you think about Experimentation & Data Quality:

How can we ensure the data we are using is accurate and reliable for our analysis and decision-making processes?

What methods or experiments can we implement to validate our data and derive meaningful insights?

How do we maintain and improve data quality to support effective storytelling and strategic decision-making?

I appreciate your time reading through this. This marks the end of bootcamp learning series. I plan to share more on Business Intelligence soon. Stay curious!

Subscribe to my newsletter

Read articles from Rutvik Joshi directly inside your inbox. Subscribe to the newsletter, and don't miss out.

Written by

Rutvik Joshi

Rutvik Joshi

I seek to create experiences. Currently doing it with, data, design and a blend of analytics.