Skillet: Our AI Agent SRE

Praneet Sharma

Today, we’re excited to give a brief preview of Skillet, our upcoming AI Agent SRE product feature, and share our thoughts and some early findings from its development. We’ve been developing Skillet with collaboration from key design partners, and we’d love to explore your ideas too.

Get in touch at info@omlet.co, through our website contact form, or by leaving a comment!

Approach

One of the amazing benefits of the OpenTelemetry semantic conventions is the solid data platform they unlock. With this data foundation, we can approach integrating AI and LLMs creatively. As discussed in one of our previous posts, however, it is extremely tempting to look at Observability AI Agents from a “greedy” perspective. In that post, we mentioned that relying only on “top-down” AI systems can miss crucial long-tail problems and disregard important information. Very quickly, this approach devolves into a rules-based one. At Omlet, we wanted to take a different, more straightforward approach to incorporating a powerful, lightweight AI agent into the observability landscape.

Observability is an “Always-on” endeavor

Observability practices, and the automation aligned to them, do not have the comfort of being active only some of the time. To maximize effectiveness, observability has to be always active and all-encompassing. Practitioners are often forced into a “collect everything” mentality, which can result in massive increases in cost and noise. Fortunately, there’s a better way.

Bottom-up: AI in the pipeline, at the “Edge”

A core principle of Observability is the concept of “entities”. An entity is often a “Service”, “Host”, “Pod”, etc. These cohorts give us a natural way to group data, and the groups can be refined further by “Version”, “Release”, “Experiment”, etc. We can use these groupings to examine issues in isolated groups. This addresses a few giant pain points:

Making sense of large volumes of data in a methodical way

Allowing “top-down” methodologies (AI or human) to focus on the larger impact of interacting parts, not why or how the parts are breaking. Humans currently spend too much time focusing on individual entities, resulting in high cognitive fatigue.
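To make the grouping idea concrete, here is a minimal Python sketch of bucketing telemetry by entity. It is an illustration only, not Skillet's implementation: the record layout, the `RECORDS` sample, and the helper names `entity_key` and `group_by_entity` are assumptions made for this example. The attribute keys `service.name` and `service.version` are taken from the OTel semantic conventions.

```python
from collections import defaultdict

# Hypothetical, simplified log records. The resource attribute keys
# (service.name, service.version) come from the OTel semantic
# conventions; the record layout itself is an assumption.
RECORDS = [
    {"resource": {"service.name": "checkout", "service.version": "1.4.2"},
     "body": "payment gateway timeout"},
    {"resource": {"service.name": "checkout", "service.version": "1.4.2"},
     "body": "payment gateway timeout"},
    {"resource": {"service.name": "checkout", "service.version": "1.5.0"},
     "body": "cart loaded"},
    {"resource": {"service.name": "search"},
     "body": "index refresh slow"},
]


def entity_key(record, keys=("service.name", "service.version")):
    """Build a grouping key from the chosen resource attributes."""
    resource = record.get("resource", {})
    return tuple(resource.get(key, "unknown") for key in keys)


def group_by_entity(records, keys=("service.name", "service.version")):
    """Bucket records so each entity can be examined in isolation."""
    groups = defaultdict(list)
    for record in records:
        groups[entity_key(record, keys)].append(record)
    return dict(groups)


groups = group_by_entity(RECORDS)
for entity, items in groups.items():
    print(entity, len(items))
```

Passing a different `keys` tuple, for example `("service.name",)` alone, coarsens the grouping, which is one way to move between the “Service” and “Version” views described above.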

Efficient “Always-on” AI

You’re probably wondering: wouldn’t event data structures (logs / spans), and their associated volume of tokens, lead to a cost/noise explosion within LLMs? We attacked this problem in three parts, specifically for LLM architectures:

Better Entity Based Grouping

Clustering and Masking

Selective extraction on Span Data Structures
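As a rough illustration of the second and third items, the Python sketch below masks variable parts of log lines so that similar lines collapse into one template, and trims a span down to a handful of fields before it would be sent to an LLM. Everything here is an assumption for the sake of the example: the regex masks, the sample lines, the span layout, and the `KEEP_FIELDS` and `KEEP_ATTRIBUTES` choices are hypothetical and do not describe how Skillet actually does it.

```python
import re
from collections import Counter

# Order matters: specific patterns run before the generic number mask.
MASKS = [
    (re.compile(r"\b[0-9a-fA-F]{8}(?:-[0-9a-fA-F]{4}){3}-[0-9a-fA-F]{12}\b"), "<UUID>"),
    (re.compile(r"\b\d{1,3}(?:\.\d{1,3}){3}\b"), "<IP>"),
    (re.compile(r"\b\d+(?:\.\d+)?\b"), "<NUM>"),
]


def mask(line):
    """Replace variable tokens with placeholders to get a log template."""
    for pattern, token in MASKS:
        line = pattern.sub(token, line)
    return line


def cluster_lines(lines):
    """Count how many raw lines collapse into each masked template."""
    return Counter(mask(line) for line in lines)


# Hypothetical subset of span data worth keeping for analysis.
KEEP_FIELDS = ("name", "duration_ms", "status")
KEEP_ATTRIBUTES = ("http.request.method", "http.response.status_code")


def extract_span(span):
    """Keep only the selected top-level fields and attributes of a span."""
    slim = {field: span[field] for field in KEEP_FIELDS if field in span}
    attributes = span.get("attributes", {})
    slim["attributes"] = {
        key: attributes[key] for key in KEEP_ATTRIBUTES if key in attributes
    }
    return slim


LINES = [
    "user 42 logged in from 10.0.0.1",
    "user 7 logged in from 10.0.0.22",
    "order 123e4567-e89b-12d3-a456-426614174000 failed after 250 ms",
    "order 9b2d4c1e-0a3f-4e5d-8c7b-1f2e3d4c5b6a failed after 31 ms",
]

SPAN = {
    "name": "GET /cart",
    "trace_id": "0af7651916cd43dd8448eb211c80319c",
    "duration_ms": 812,
    "status": "ERROR",
    "attributes": {
        "http.request.method": "GET",
        "http.response.status_code": 500,
        "user_agent.original": "curl/8.0",
    },
}

templates = cluster_lines(LINES)
slim_span = extract_span(SPAN)
print(templates.most_common())
print(slim_span)
```

In this sketch, four raw lines reduce to two templates with counts, and the span drops its identifiers and low-value attributes. The point is that the token volume passed to a model scales with the number of distinct patterns rather than with raw event volume.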

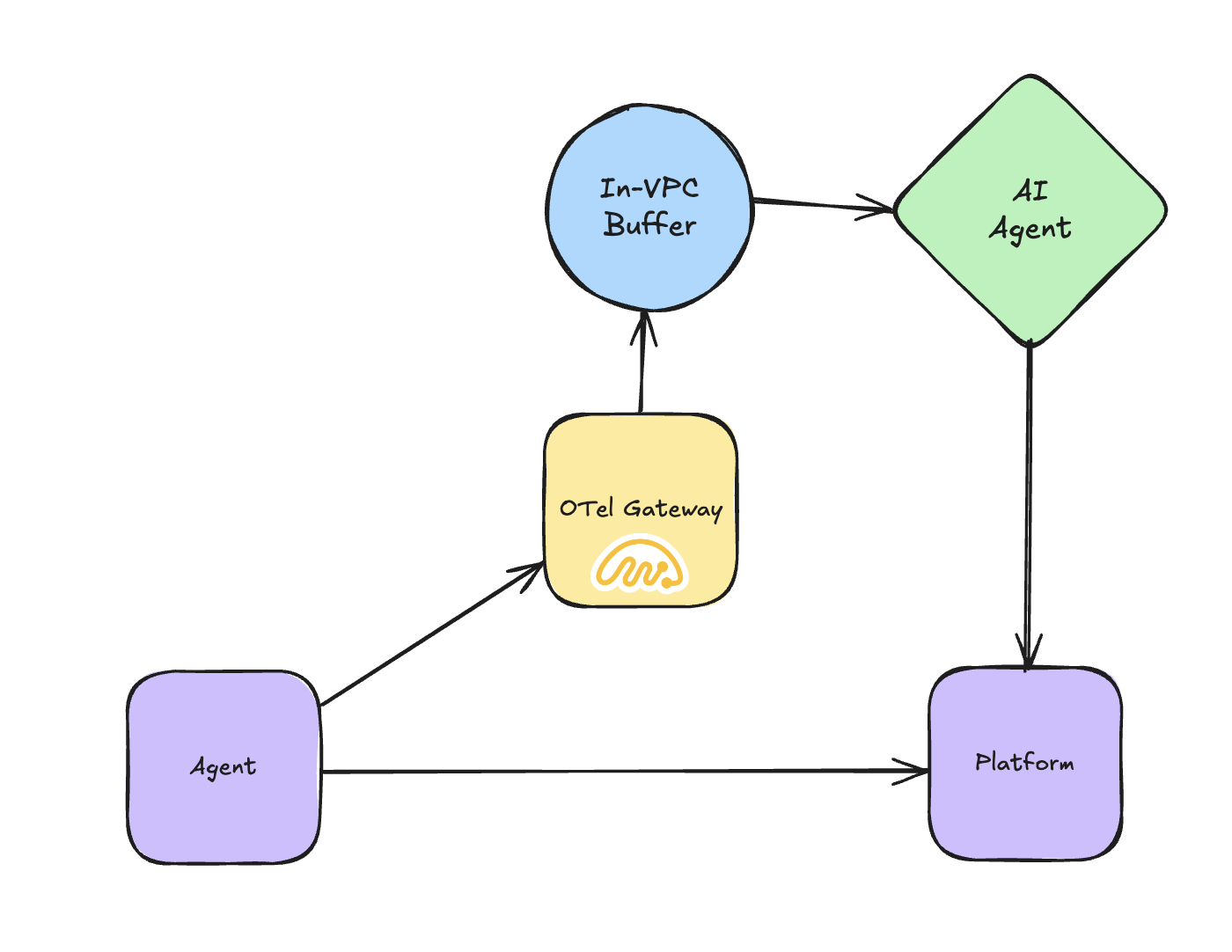

What made these techniques possible in the first place? A plug-and-play data platform built on OTel. OTel semantic conventions are very well structured, which makes the data engineering and formatting task a breeze. We also had to consider carefully: should we build yet another pane of glass for this type of view, or could we benefit from the interoperability of OTel and bring the intelligence to the existing platform views? We chose the latter.

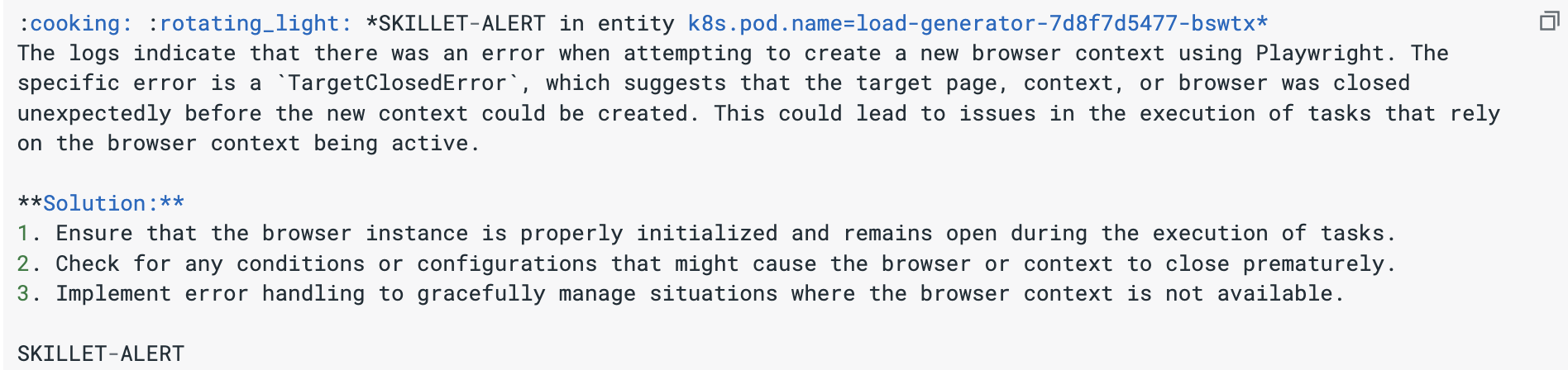

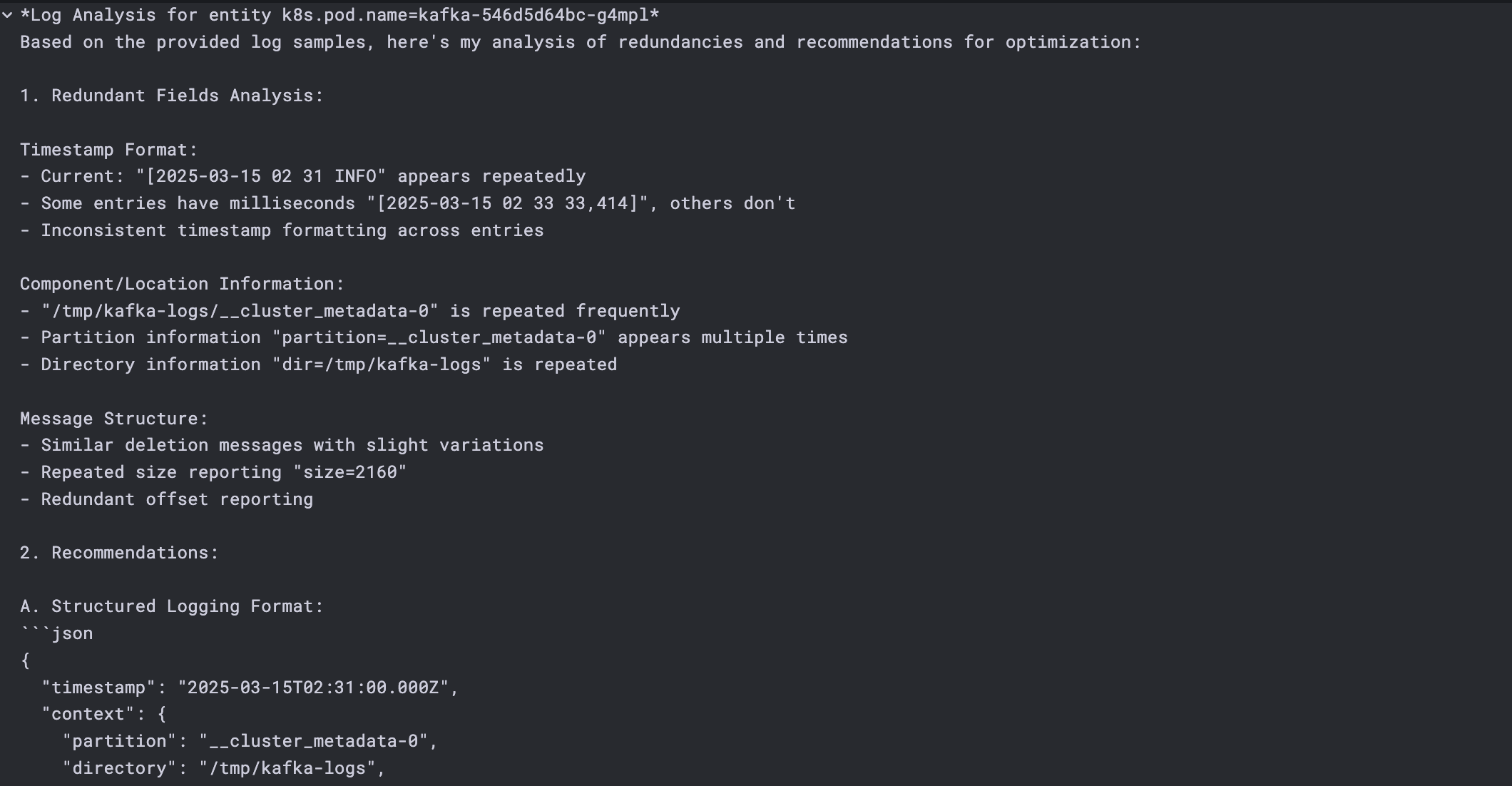

Dynamic Incident Analysis and Log Quality

Our early experiments showed exciting results across specific OpenAI (gpt-4o-mini) calls. As we expanded the capabilities, we added other models, like Claude, Llama, Mistral, and now hundreds of others via “bring-your-own-LLM”. Some examples:

What’s Next?

Unsurprisingly, observability use-cases aren’t limited to log or span incident analysis. Over the past months, we have been delighted to explore a diverse range of powerful use-cases presented by design partners working with us to develop Skillet:

Log Line Reduction Recommendation

Log Quality Analysis and Scoring

Complex Sensitive Data Analysis

Performance Optimization Opportunities

SQL Query Optimization

Key Points in Metrics Data

As an always-on AI agent, Skillet represents a massive leap forward in Omlet’s capacity to simplify and amplify your observability workflows. This feature is still in active development, and we’re eager to continue exploring customer and partner-led ideas on how we can hone in on other use cases with this data (hint: security). Contact us at info@omlet.co and let’s get cooking!
