How to Easily Set Up and Use LLMs on Your Local Machine with Ollama

Ankit saha


Installation

For macOS & Windows

- Download the official installer from the Ollama website.

- Launch the installer and follow the setup instructions.

Windows

After installation, open PowerShell or Command Prompt and verify the install by running:

ollama

Linux

Open a terminal window.

Run the following command:

curl -fsSL https://ollama.com/install.sh | sh

Once the installation is complete, verify it by running:

ollama

Alternatively, open http://127.0.0.1:11434 in your browser. If the page shows "Ollama is running", Ollama is set up.
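The browser check can also be done from a terminal. The sketch below assumes curl is installed and that the server listens on the default address http://127.0.0.1:11434, whose root URL answers with the text "Ollama is running". The name ollama_status is a hypothetical helper, not part of Ollama.

```shell
# Hypothetical helper: print "up" if an Ollama server answers at the
# given URL (default: the local address used in this article), else "down".
ollama_status() {
  url="${1:-http://127.0.0.1:11434}"
  # -f: treat HTTP errors as failures, -sS: quiet but keep real errors,
  # --max-time 2: give up quickly if nothing is listening.
  if curl -fsS --max-time 2 "$url" 2>/dev/null | grep -q "Ollama is running"; then
    echo "up"
  else
    echo "down"
  fi
}
```

Calling ollama_status with no argument checks the default address; pass a different URL if your server runs elsewhere.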

Running Ollama

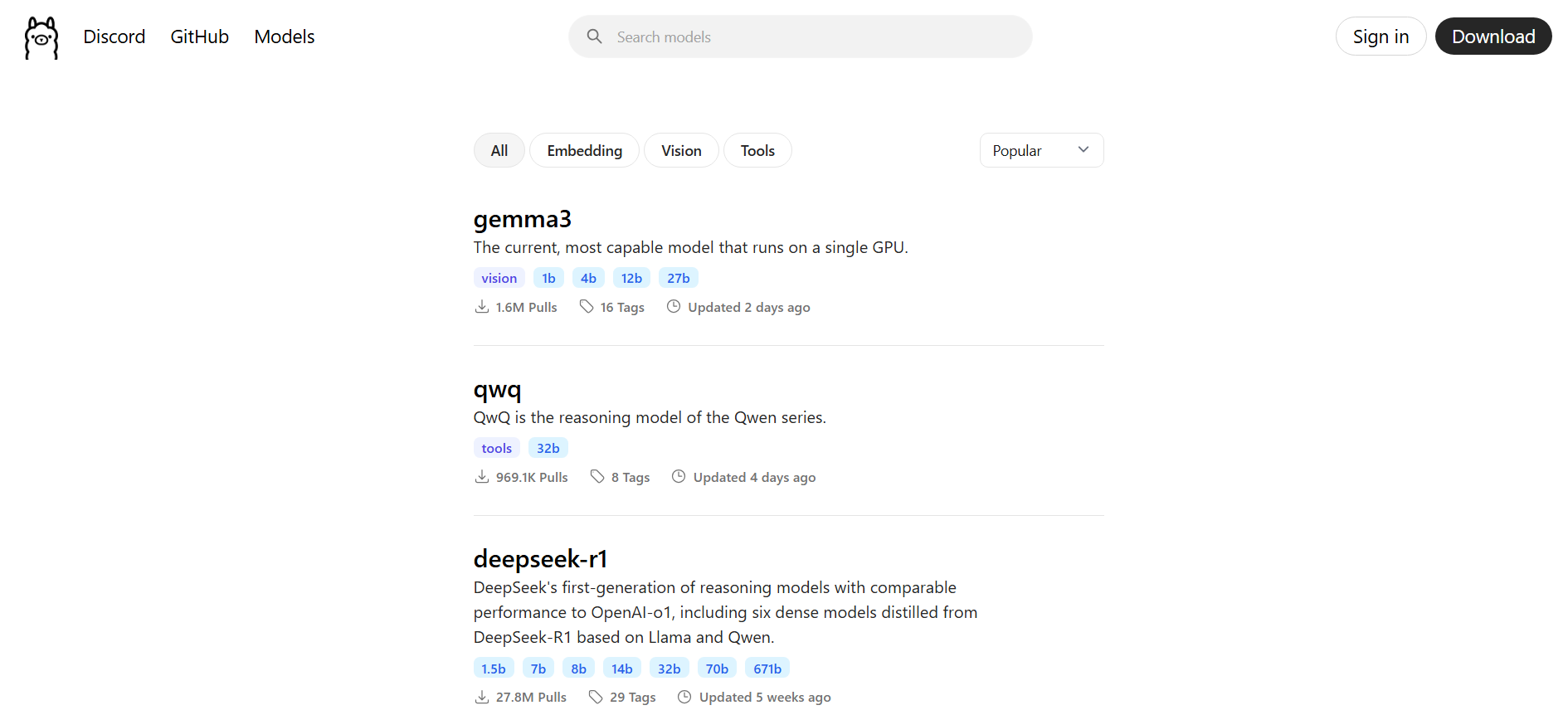

- Choose the model you wish to use from the Ollama models page.

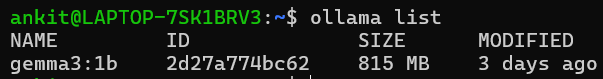

- View all models installed in Ollama.

ollama list

- Pull and run the selected model.

ollama run gemma3:1b

gemma3:1b is a small, fast model, which makes it a good choice for beginners.
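The list and pull steps above can be combined so a model is only downloaded when it is missing. This is a minimal sketch, assuming the ollama CLI is on your PATH and that ollama list prints a header row followed by one model per line with the name in the first column. The names has_model and ensure_model are hypothetical helpers, not Ollama commands.

```shell
# Hypothetical helper: succeed if the named model appears in `ollama list`.
has_model() {
  # Skip the header row, keep the first column (model name),
  # and look for an exact, whole-line match.
  ollama list 2>/dev/null | awk 'NR > 1 { print $1 }' | grep -Fxq "$1"
}

# Hypothetical helper: download the model only when it is not installed yet.
ensure_model() {
  if has_model "$1"; then
    echo "$1 already installed"
  else
    ollama pull "$1"
  fi
}
```

For example, ensure_model gemma3:1b followed by ollama run gemma3:1b starts the chat without re-downloading anything.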

If everything worked, ollama run drops you into an interactive >>> prompt where you can chat with the model. Type /bye to exit.
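Besides the interactive prompt, the local server can be queried over HTTP at the same address used in the installation check. The sketch below assumes curl is installed, the server is running on the default port, and the model has already been pulled. It posts to Ollama's /api/generate endpoint with streaming turned off. The names build_payload and ask_ollama are hypothetical helpers, and the payload builder does no JSON escaping, so it only suits simple prompts.

```shell
# Build the JSON body for the /api/generate endpoint.
# Assumption: the prompt contains no double quotes or backslashes,
# because this sketch does not escape them.
build_payload() {
  printf '{"model": "%s", "prompt": "%s", "stream": false}' "$1" "$2"
}

# Hypothetical helper: send one prompt to the local server and
# print the raw JSON reply (the generated text is in its "response" field).
ask_ollama() {
  curl -s http://127.0.0.1:11434/api/generate -d "$(build_payload "$1" "$2")"
}
```

A call such as ask_ollama gemma3:1b "Why is the sky blue?" prints a single JSON object once the model has finished answering.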

