All You Need to Know About MCP (+ Spotify Podcast)

Ali Pala

In the fast-changing world of artificial intelligence (AI), the Model Context Protocol (MCP) is an important new development that makes it easier to connect AI applications with different data sources. Developed at Anthropic and presented by Mahesh of its Applied AI team, it focuses on a key idea: models are only as good as the context we give them. This post looks at the ideas behind MCP, why it matters in AI development, and how it aims to standardize how AI applications work with other systems.

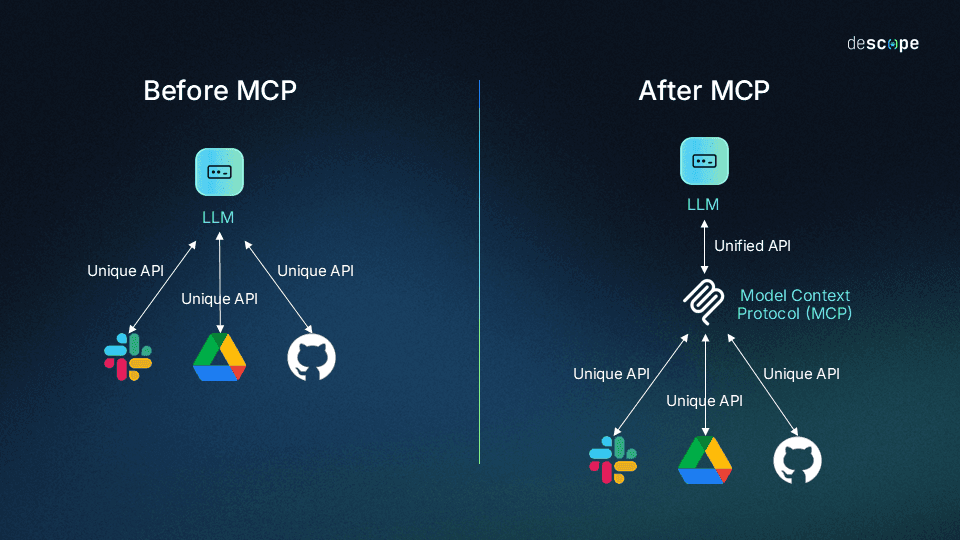

MCP is like the Type-C USB of AI systems—a universal connector that works everywhere. Just as Type-C USB replaced a confusing array of charging cables with one standard that fits all devices, the Model Context Protocol (MCP) replaces the need for custom-built integrations between AI systems and data sources.

To listen to the blog and more, please visit the episode: https://spotifycreators-web.app.link/e/G8e6xRydURb

What is MCP (For Tech People)?

Model Context Protocol (MCP) is a framework designed to improve AI systems by giving them structured access to external context and tools. It provides a standardized way for AI models to request and receive information from various sources, tools, and services while keeping a smooth conversation flow with users.

MCP serves as a middle layer between AI models, like large language models (LLMs), and external resources. It enables models to identify when they need more information, request it through a structured protocol, and then integrate the results into their reasoning process.

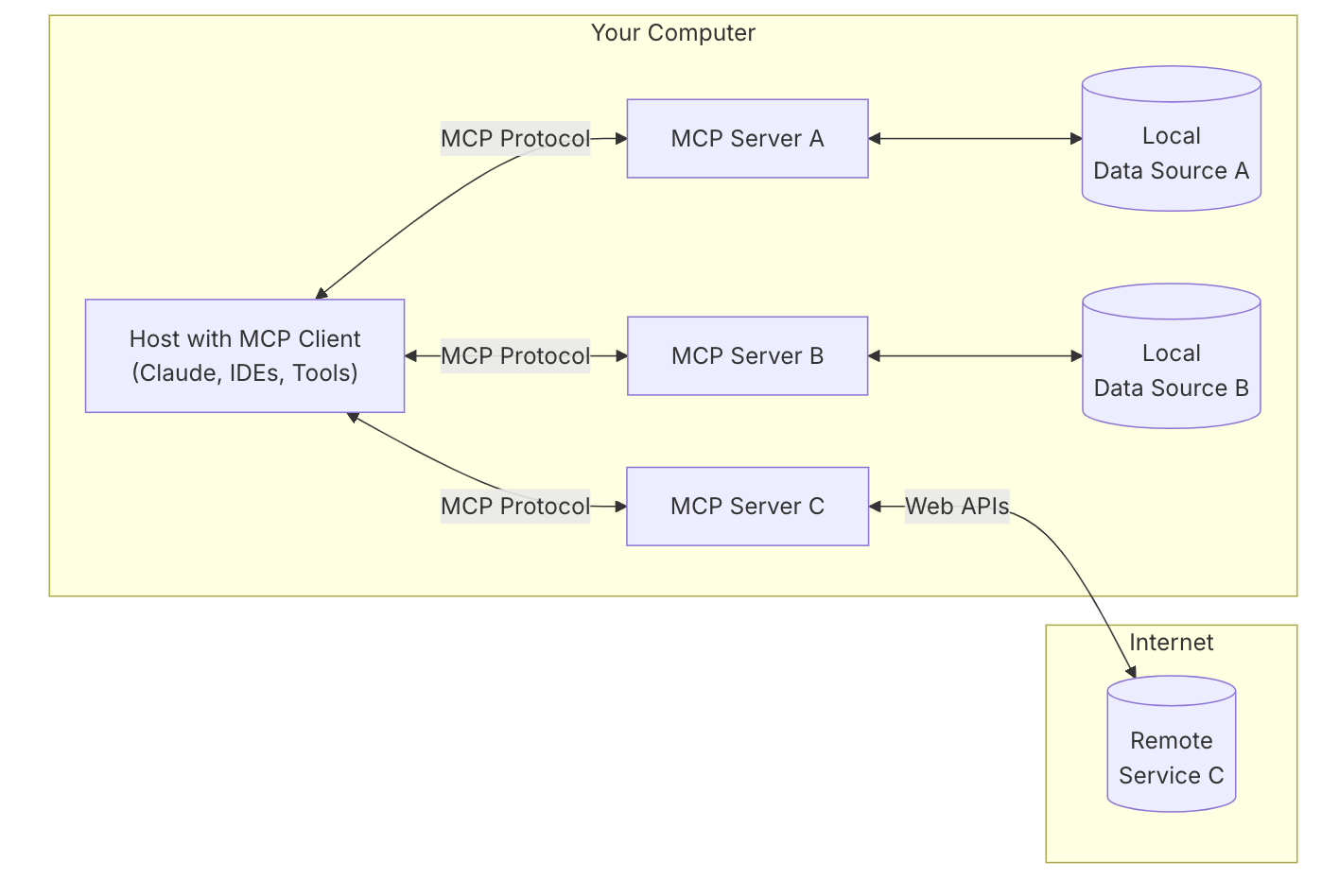

The Model Context Protocol is an open standard that allows developers to create secure, two-way connections between their data sources and AI-powered tools. The setup is simple: developers can either make their data available through MCP servers or develop AI applications (MCP clients) that connect to these servers.
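To make the "structured protocol" concrete: MCP messages are JSON-RPC 2.0 objects. The sketch below builds one tool-call request and a matching response using only the standard library. The tool name `get_weather`, its argument, and the reply text are made up for illustration; this shows the message shape, not a real server.

```python
import json

def make_tool_call(request_id, tool_name, arguments):
    """Build a JSON-RPC 2.0 request asking a server to run one tool."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }

def make_tool_result(request_id, text):
    """Build the matching response carrying a single text result."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,  # same id as the request it answers
        "result": {"content": [{"type": "text", "text": text}], "isError": False},
    }

# "get_weather" is a hypothetical tool used only for this example.
request = make_tool_call(1, "get_weather", {"city": "Ankara"})
response = make_tool_result(1, "Sunny, 24 C")
print(json.dumps(request, indent=2))
print(json.dumps(response, indent=2))
```

The shared `id` is what lets a client match a response to the request that caused it, even when several requests are in flight.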

Analogy for Non-Tech People (A Banking Example)

Think of MCP like updating a traditional bank that used to have rigid systems and limited customer service options. In the old setup, when a customer asked about a mortgage, the loan officer only knew the standard products and rates they were trained on and could only access the specific system for mortgage applications. If a customer had a complex financial situation that required looking at their investment portfolio, deposit history, and credit score together, the loan officer would say, "You'll need to speak with three different departments." After adopting an MCP-like approach, the bank's customer service representatives now have a standardized way to access all relevant financial systems.

When a customer asks about personalized financial options, the representative follows a consistent protocol that smoothly checks the mortgage system, investment records, credit bureau data, and current market rates. The customer enjoys a smooth conversation while getting truly personalized recommendations based on their complete financial picture. When the bank introduces new financial products or connects with additional payment networks, they don't need to retrain every employee—they simply connect these new services to the existing protocol, and representatives can immediately offer these options to customers without disruption or extensive retraining.

What is the motivation behind developing MCP?

The primary motivation behind MCP is the recognition that the quality and relevance of an AI model's output are heavily dependent on the context it receives. Previously, integrating context into AI applications was often ad-hoc and fragmented. MCP aims to standardize this process, drawing inspiration from successful protocols like HTTP for web applications and LSP for IDEs. By creating a common language for AI applications to interact with data sources and tools, MCP seeks to reduce development complexity, improve interoperability, and ultimately lead to more powerful and personalized AI experiences.

Here is a list of problems addressed by the Model Context Protocol (MCP), along with their respective solutions:

Fragmentation of Context Input:

Problem: Prior to MCP, AI models often lacked standardized methods for contextual data input, leading to inconsistent outputs and performance across applications.

Solution: MCP establishes a uniform protocol for delivering context to AI models from diverse data sources, making model behavior more reliable and coherent.

Inefficient Integration Methods:

Problem: Many organizations utilized disparate methods to integrate AI with tools and data sources, often requiring custom implementations that were time-consuming and error-prone.

Solution: MCP standardizes integration processes, enabling seamless connections between AI applications and external systems, thus reducing development time and minimizing errors.

Duplicative Development Efforts:

Problem: Different teams within the same organization often created their own custom solutions for interfacing with AI systems, leading to redundancy and a lack of collaboration.

Solution: By providing a shared standard, MCP encourages team collaboration by allowing multiple applications to connect to shared resources and tools without reinventing the wheel.

Lack of Dynamic Tool Usage:

Problem: Prior AI frameworks struggled with dynamically adapting to new tools or resources, requiring extensive pre-planning and coding.

Solution: MCP lets AI applications dynamically discover and integrate new tools as needed, allowing for real-time adaptability and expanding the capabilities of AI agents.

Complexity of Context Management:

Problem: Managing context effectively for AI applications can be complicated, as developers need to implement various strategies to provide context and keep it relevant during operation.

Solution: MCP provides a structured approach to managing and using context, allowing for richer, more relevant interactions between AI agents and their users by establishing clear pathways for context retrieval.

Limited Interoperability:

Problem: Various AI applications often faced compatibility issues when trying to work with external services, resulting in siloed functionalities.

Solution: MCP enhances interoperability by creating a standard interface through which AI applications can invoke tools and resources, regardless of their underlying architecture, thus broadening their usability and impact.

These problems collectively illustrate the challenges faced by developers and organizations in the evolving landscape of AI development. MCP's standardized approach not only simplifies integration and enhances context management but also promotes a collaborative, adaptable environment that can evolve alongside users' needs and technological advancements.

How does MCP work?

The Model Context Protocol (MCP) enables efficient interactions between AI applications, servers, and external data sources like databases and web APIs. It serves as a middleware layer connecting Large Language Models (LLMs) with external systems, addressing the challenge of managing context flow between the model and its environment.

What are the core components of the Model Context Protocol?

The Model Context Protocol consists of three primary interfaces for interaction between AI applications (clients) and external systems (servers):

Prompts: Predefined, reusable templates for common user-initiated interactions with a server. They allow users to invoke complex actions or queries with simple commands, with the server interpolating necessary context before sending the full prompt to the LLM.

Tools: Model-controlled functions exposed by a server that an LLM within an MCP client can choose to invoke to retrieve data, perform actions, or interact with external systems. The server defines the tool's functionality and description, allowing the model to intelligently decide when and how to use it.

Resources: Data exposed by a server to an MCP client in an application-controlled manner. These can be static or dynamic and offer a rich interface beyond simple text exchange, such as files, images, or structured data. The client application decides how and when to utilize these resources, which can be presented to the user or automatically provided to the model.
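The difference between the three interfaces is mostly about who decides when each one is used. The sketch below models them as plain Python dataclasses to make that distinction visible. The class layout, the `summarize` prompt, the `add` tool, and the README resource are all illustrative assumptions, not the types of any MCP SDK.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Prompt:
    """User-controlled: a reusable template the user picks explicitly."""
    name: str
    template: str

    def render(self, **values: str) -> str:
        # The server fills in the template before it reaches the LLM.
        return self.template.format(**values)

@dataclass
class Tool:
    """Model-controlled: a described function the LLM may decide to call."""
    name: str
    description: str
    handler: Callable[..., str]

@dataclass
class Resource:
    """Application-controlled: data the client app chooses to attach."""
    uri: str
    mime_type: str
    text: str

# Hypothetical instances, one per interface.
summarize = Prompt("summarize", "Summarize the file {path} in {n} bullet points.")
add = Tool("add", "Add two integers.", lambda a, b: str(a + b))
readme = Resource("file:///project/README.md", "text/markdown", "# Demo project")

print(summarize.render(path="README.md", n="3"))
print(add.handler(2, 3))
print(readme.uri, readme.mime_type)
```

The tool's `description` matters more than it looks: it is the text the model reads when deciding whether the tool fits the current request.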

1. Architecture Overview:

MCP employs a modular architecture consisting of clients, servers, and an array of external data sources. Clients are the AI applications that use models to interact with users, while servers wrap various tools and data resources. This separation gives both sides a clear standard for communication.

2. Client-Server Interaction:

Client Role: A client requests services from the server. Clients send requests defined by the MCP protocol, for example to invoke tools or query resources.

Server Response: The server processes these requests by using predefined logic to determine how to interact with the appropriate tools or databases. It then sends back the necessary data or confirmation of actions taken.
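The request and response cycle described above can be sketched as a small dispatcher. This is a toy under stated assumptions: the `shout` tool and the `TOOLS` table are invented for the example, and a real server would handle many more methods. The error codes are the standard JSON-RPC 2.0 ones.

```python
def shout_tool(arguments):
    """Hypothetical tool: returns the text it was given, upper-cased."""
    return arguments["text"].upper()

# Hypothetical tool table: tool name -> handler function.
TOOLS = {"shout": shout_tool}

def handle_request(request):
    """Route one JSON-RPC request to a handler and build the response."""
    base = {"jsonrpc": "2.0", "id": request["id"]}
    if request["method"] == "tools/call":
        params = request["params"]
        tool = TOOLS.get(params["name"])
        if tool is None:
            # -32602 is the JSON-RPC 2.0 code for "Invalid params".
            return {**base, "error": {"code": -32602, "message": "Unknown tool"}}
        text = tool(params.get("arguments", {}))
        return {**base, "result": {"content": [{"type": "text", "text": text}]}}
    # -32601 is the JSON-RPC 2.0 code for "Method not found".
    return {**base, "error": {"code": -32601, "message": "Method not found"}}

ok = handle_request({"jsonrpc": "2.0", "id": 7, "method": "tools/call",
                     "params": {"name": "shout", "arguments": {"text": "hello"}}})
bad = handle_request({"jsonrpc": "2.0", "id": 8, "method": "nope"})
print(ok)
print(bad)
```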

3. Internal and External Data Sources:

Connecting to Databases: The server can interface with databases through the tools it exposes over MCP. For instance, a tool might run SQL queries against a relational database. The server accepts structured input from the client (such as JSON arguments), executes the query, and returns the retrieved data to the client.

Using Web APIs: The server can also connect to external web APIs. When a client requests information from a service like a payment gateway or a weather API, the server formulates an appropriate API request based on its MCP tool definitions (which might include API endpoints and expected parameters). The server communicates with the external API, retrieves the data, and sends it back to the client.
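As a rough sketch of the database case, the handler below wraps a parameterized SQL query and returns the rows as JSON text. It uses an in-memory SQLite database so it runs anywhere. The `orders` table, its rows, and the `orders_for_customer` tool are made up for the example.

```python
import json
import sqlite3

# In-memory database with a hypothetical "orders" table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, customer TEXT, total REAL)")
conn.executemany(
    "INSERT INTO orders (customer, total) VALUES (?, ?)",
    [("ada", 40.0), ("ada", 2.5), ("bob", 10.0)],
)

def orders_for_customer(arguments):
    """Hypothetical tool handler: run a parameterized query, return JSON text."""
    rows = conn.execute(
        "SELECT id, total FROM orders WHERE customer = ? ORDER BY id",
        (arguments["customer"],),  # bound parameter, never string-formatted
    ).fetchall()
    return json.dumps([{"id": row[0], "total": row[1]} for row in rows])

result_text = orders_for_customer({"customer": "ada"})
print(result_text)
```

Binding the customer name as a query parameter, rather than pasting it into the SQL string, matters here because the arguments ultimately come from a model and should be treated as untrusted input.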

4. Dynamic Tool Invocation:

One of the powerful features of MCP is its ability to dynamically discover tools. The client can ask a server which tools and resources it currently offers through the protocol's list requests (for example, `tools/list`). When new tools (like an updated web API or a new database connection) are added, clients can adapt by querying the server again for the updated list.
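Discovery can be pictured as the client asking the same question twice and getting a longer answer the second time. In this sketch, `ToyServer` and the two tool names are hypothetical stand-ins; only the `tools/list` method name and the `name`, `description`, and `inputSchema` fields are taken from the protocol.

```python
class ToyServer:
    """Stand-in for an MCP server that can gain tools at runtime."""

    def __init__(self):
        self._tools = {}

    def register(self, name, description):
        self._tools[name] = {
            "name": name,
            "description": description,
            "inputSchema": {"type": "object"},
        }

    def handle(self, method):
        if method == "tools/list":
            return {"tools": list(self._tools.values())}
        raise ValueError(f"unsupported method: {method}")

def discover(server):
    """Client side: ask the server what it offers right now."""
    return [tool["name"] for tool in server.handle("tools/list")["tools"]]

server = ToyServer()
server.register("search_docs", "Full-text search over documents.")
before = discover(server)

# A new capability appears; the client code does not change.
server.register("get_weather", "Current weather for a city.")
after = discover(server)
print(before, after)
```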

5. Data Context Management:

MCP emphasizes the contextual awareness of AI models. Clients can include context in their requests by adding parameters that tell the server about the user's current state or ongoing tasks. The server uses this information to fetch relevant data from internal or external sources, so that the outputs returned to the client are contextually accurate.

6. Notifications and Event Handling:

In more advanced use cases, servers can send notifications to clients when specific events occur, like updates in databases or changes in external APIs. This proactive communication allows clients to stay informed without needing to poll the server for updates continuously.
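In JSON-RPC terms, a notification is simply a message with a method but no `id`, so the receiver does not reply. The sketch below shows a toy client marking a resource as stale when the server reports a change. The `ToyClient` class and the file URI are assumptions for illustration.

```python
def is_notification(message):
    """A JSON-RPC notification has a method but no id, so no reply is expected."""
    return "method" in message and "id" not in message

class ToyClient:
    """Hypothetical client that tracks which cached resources are out of date."""

    def __init__(self):
        self.stale_uris = set()

    def on_message(self, message):
        if not is_notification(message):
            return
        if message["method"] == "notifications/resources/updated":
            # Remember to re-read this resource instead of polling for changes.
            self.stale_uris.add(message["params"]["uri"])

update = {
    "jsonrpc": "2.0",
    "method": "notifications/resources/updated",
    "params": {"uri": "file:///reports/q3.csv"},  # made-up URI
}
client = ToyClient()
client.on_message(update)
print(client.stale_uris)
```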

7. Transport Mechanisms:

For communication between clients and servers, MCP supports more than one transport, including local connections (via standard input/output) and remote communication over HTTP, with Server-Sent Events (SSE) for streaming messages from server to client. This flexibility lets the same protocol serve both local tools and hosted services.
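For the local case, a standard input/output transport amounts to one JSON message per line in each direction. The sketch below implements that loop and exercises it with in-memory streams instead of a real subprocess. The `serve` function and `ping_handler` are illustrative names, not part of any SDK.

```python
import io
import json

def serve(reader, writer, handler):
    """Read one JSON message per line, write one JSON response per line."""
    for line in reader:
        line = line.strip()
        if not line:
            continue  # ignore blank lines between messages
        response = handler(json.loads(line))
        writer.write(json.dumps(response) + "\n")

def ping_handler(request):
    """Hypothetical handler: answers every request with an empty result."""
    return {"jsonrpc": "2.0", "id": request["id"], "result": {}}

# StringIO objects stand in for the server process's stdin and stdout.
fake_stdin = io.StringIO(
    '{"jsonrpc": "2.0", "id": 1, "method": "ping"}\n'
    '{"jsonrpc": "2.0", "id": 2, "method": "ping"}\n'
)
fake_stdout = io.StringIO()
serve(fake_stdin, fake_stdout, ping_handler)

replies = [json.loads(line) for line in fake_stdout.getvalue().splitlines()]
print(replies)
```

In a real setup the same loop would be given `sys.stdin` and `sys.stdout`, with the client launching the server as a child process.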

Where to Use Model Context Protocol?

Several AI applications and platforms have integrated, or are in the process of integrating, the Model Context Protocol (MCP) since Anthropic introduced it in late 2024. Here is a list of AI applications currently using or planning to use MCP:

AI Coding Assistants

Sourcegraph Cody: Implements MCP through OpenCTX, allowing it to access code intelligence and provide more accurate coding suggestions

GitHub Copilot: While not explicitly mentioned, it's likely exploring MCP integration to enhance its code context understanding

Replit's AI: Integrating MCP to improve its coding assistance capabilities

Codeium: Adopting MCP to enhance its AI-powered coding features

Roo Code: Enables AI coding assistance via MCP, supporting MCP tools and resources

Enterprise AI Tools

Claude Desktop App: Anthropic's own application, utilizing MCP to access local files and services securely

LibreChat: An open-source AI chat UI that now includes MCP integration for extending its tool ecosystem.

Microsoft Copilot Studio: Recently announced MCP integration to simplify the addition of AI apps and agents

Development and Data Tools

Zed: Integrating MCP into their development platform

AI2SQL: Uses MCP to generate SQL queries from natural language prompts

Apify: Developed an MCP server allowing AI agents to access Apify Actors for data extraction and web searches

AI Frameworks and Clients

mcp-agent: A framework for building agents using the Model Context Protocol

oterm: A terminal client for Ollama that supports MCP tools

Enterprise Systems (MCP Servers in Development)

While not AI applications themselves, these platforms are developing MCP servers to allow AI integration:

Google Drive: For document search and retrieval

Slack: For messaging and knowledge access in channels

GitHub: For code repository interactions

Postgres: For database querying

Puppeteer: For web browsing and scraping capabilities

What are some of the future developments and areas of focus for the Model Context Protocol?

Remote Servers and Auth: Fully supporting remotely hosted servers with built-in authentication and authorization mechanisms (like OAuth 2.0) to enable wider accessibility and easier deployment of MCP servers.

Stateful vs. Stateless Connections: Exploring options for more short-lived connections to better support various use cases and improve efficiency.

Streaming: Enabling the efficient transfer of large or continuous data streams between clients and servers.

Namespacing: Implementing mechanisms to prevent naming conflicts between tools from different servers and to facilitate better organization of capabilities.

Proactive Server Behavior/Elicitation: Enhancing the protocol to allow servers to more proactively interact with clients, such as initiating requests for more information or providing notifications based on events.
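To illustrate the namespacing item in the list above: two servers can each expose a tool called `search`, and a client that merges their tool lists needs some way to tell them apart. The sketch below prefixes each tool with its server name. The `server.tool` naming scheme and the server names are purely hypothetical; the source does not say which scheme the protocol will adopt.

```python
def merge_tools(servers, separator="."):
    """Combine tool lists from several servers under 'server<sep>tool' names."""
    merged = {}
    for server_name, tool_names in servers.items():
        for tool_name in tool_names:
            qualified = f"{server_name}{separator}{tool_name}"
            # Keep the original pair so a call can be routed back correctly.
            merged[qualified] = (server_name, tool_name)
    return merged

# Both hypothetical servers expose a tool named "search".
catalog = merge_tools({"github": ["search", "create_issue"], "drive": ["search"]})
print(sorted(catalog))
```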

Conclusion:

The Model Context Protocol (MCP) offers a standardized method for LLMs to connect with external data sources and tools, acting like a "universal remote" for AI apps. Released by Anthropic as an open-source protocol, MCP enhances existing function calling by removing the need for custom integration between LLMs and other apps. This allows developers to create more capable, context-aware applications without having to start from scratch for each combination of AI model and external system.

By integrating these components through a well-defined protocol, MCP establishes a robust framework for AI applications to interact with various tools and data sources. Its modular architecture allows for scalability, adaptability, and responsiveness, making it suitable for a wide range of applications and infrastructures. The result is a system that can manage complex processes and provide rich, context-aware experiences for end users.
