2. Understanding Key Concepts and Terminology (Part 1)

Muhammad Fahad Bashir

Second Article of the series: Deep Learning Essentials

What is Learning?

Learning is a fundamental concept that applies to both humans and machines. It involves acquiring knowledge or skills through experience, study, or instruction.

For humans, learning is a continuous process that begins at birth, where we absorb information from our surroundings, parents, and schools. Similarly, in the realm of artificial intelligence, we aim to replicate this capability in machines, enabling them to learn from data and experiences.

When we think about learning in machines, we must consider not just how they acquire information but also how they process it to make decisions. This leads us to the concept of a digital brain, which integrates software, hardware, algorithms, and data to create systems capable of learning and adapting.

The Connection Between Artificial Intelligence and Learning

Artificial Intelligence (AI) is a broad domain that encompasses various subfields, including machine learning, which specifically focuses on systems that learn from previous data. Within AI, we have different types of algorithms, including rule-based systems and expert systems, as well as those that learn from data.

Machine learning is essentially a subset of AI, dedicated to developing algorithms that improve their performance as they are exposed to more data. It is essential to understand that while all machine learning is a part of AI, not all AI is machine learning. This distinction is crucial as we delve deeper into the realm of deep learning.
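To make the distinction concrete, here is a minimal Python sketch that contrasts a hand-written rule with a parameter learned from examples. The pricing rule, the toy data, and the function names are hypothetical. The learner uses plain gradient descent on mean squared error, which is one common technique among many, not the only way machines learn.

```python
from typing import List

def rule_based_price(area: float) -> float:
    """Rule-based AI: a human encodes the logic directly (hypothetical rule)."""
    return 100.0 * area  # fixed price per unit area, chosen by hand

def learn_price_per_area(areas: List[float], prices: List[float],
                         lr: float = 1e-5, epochs: int = 1000) -> float:
    """Machine learning: estimate the price per unit area from example data
    by gradient descent on the mean squared error of price = w * area."""
    w = 0.0  # start with no knowledge of the relationship
    n = len(areas)
    for _ in range(epochs):
        # Gradient of (1/n) * sum((w*x - y)^2) with respect to w
        grad = sum(2.0 * (w * x - y) * x for x, y in zip(areas, prices)) / n
        w -= lr * grad  # step against the gradient to reduce the error
    return w

# Hypothetical toy training data: every house costs 120 per unit area.
areas = [50.0, 100.0, 150.0]
prices = [6000.0, 12000.0, 18000.0]

w = learn_price_per_area(areas, prices)
print(round(w, 2))             # 120.0
print(rule_based_price(80.0))  # 8000.0 (the rule never changes)
print(round(w * 80.0, 2))      # 9600.0 (prediction learned from the data)
```

The rule-based function only changes if a person rewrites it, while the learned weight changes whenever the training data does.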

Deep Learning: A Subset of Machine Learning

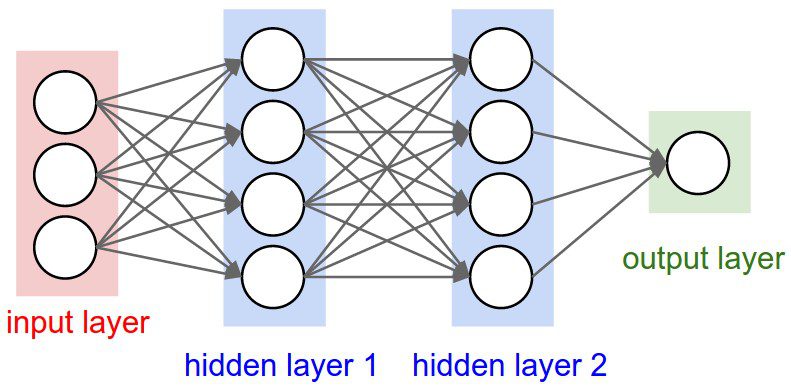

Deep learning is a specialized field within machine learning that focuses on algorithms based on neural networks. These networks are inspired by the biological neural networks in our brains, consisting of interconnected nodes (neurons) that work together to process information.

Neural networks can learn complex patterns and representations from large amounts of data. A key difference between traditional machine learning and deep learning lies in depth: traditional approaches often rely on features engineered by hand, whereas deep learning stacks multiple layers of neurons, each building more abstract representations from the output of the previous layer. This allows for more sophisticated learning and representation capabilities.

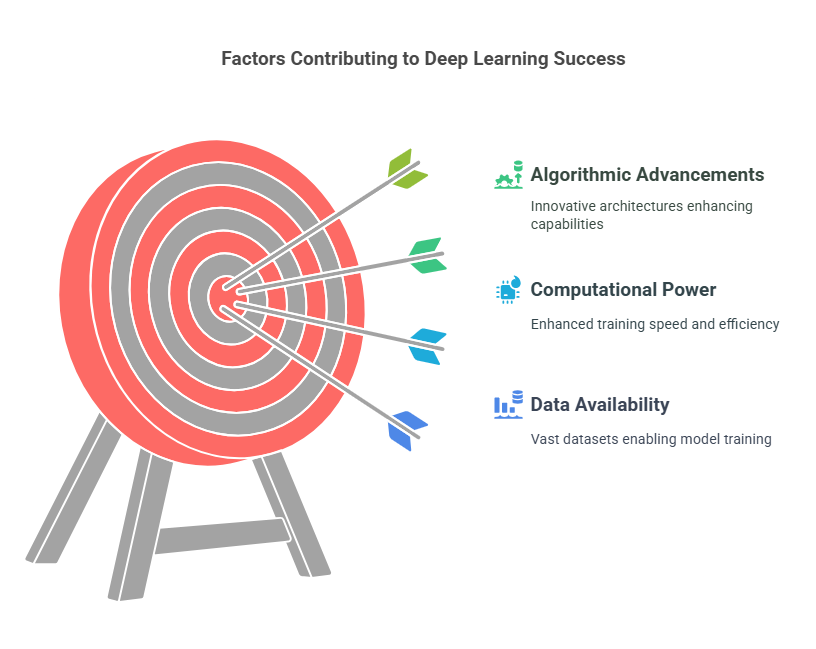

The Rise & Effectiveness of Deep Learning

Three factors have been critical to the rise and effectiveness of deep learning:

Availability of vast amounts of data: In the past, limited data restricted the potential of machine learning algorithms. However, in today's digital age, we generate terabytes of data every second, providing rich resources for training deep learning models.

Computational Power: Alongside data availability, advances in computational power have significantly impacted deep learning. In the past, training neural networks required substantial time and resources. With the advent of supercomputers and Graphics Processing Units (GPUs), which can carry out the many matrix multiplications inside a neural network in parallel, training deep learning models has become much faster and more efficient.

Algorithmic Advancements: The last major factor driving the success of deep learning is significant progress in algorithms. Architectures such as Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs), whose roots go back decades, became practical at scale, and newer designs such as Transformer networks, introduced in 2017, have pushed the field further.

Understanding Neural Networks

At the core of deep learning is the neural network, which consists of interconnected nodes (neurons) that process information. Each neuron computes a weighted sum of its inputs, adds a bias term, and passes the result through an activation function to produce its output. The weights, which set the strength of the connections between neurons, determine how information flows through the network, and they are what the network adjusts as it learns.

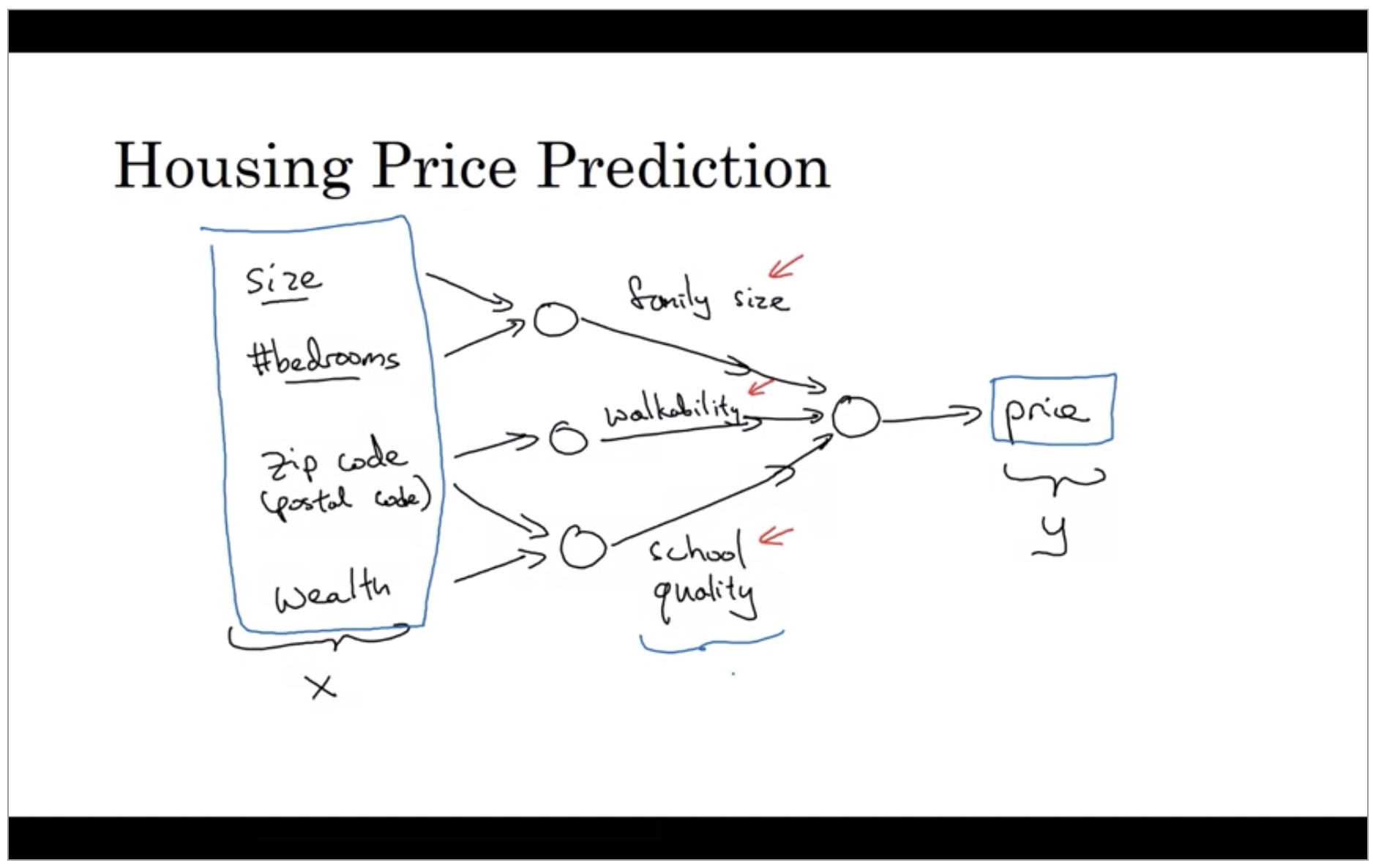
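The single neuron described above can be sketched in a few lines of Python. This is a minimal illustration that assumes a sigmoid activation (ReLU and tanh are other common choices); the input, weight, and bias values are arbitrary.

```python
import math
from typing import List

def sigmoid(z: float) -> float:
    """Squash any real number into the range (0, 1), avoiding overflow."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)  # safe for large negative z
    return e / (1.0 + e)

def neuron(inputs: List[float], weights: List[float], bias: float) -> float:
    """A single artificial neuron: weighted sum plus bias, then activation."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(z)

# Arbitrary example values: z = 0.5*1.0 + (-0.25)*2.0 + 0.0 = 0.0
print(neuron([1.0, 2.0], [0.5, -0.25], 0.0))  # 0.5
```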

To illustrate, let's consider a simple problem: predicting house prices based on various features such as area, number of bedrooms, and location. In this scenario, we can represent the relationship between these features and the price as a neural network. Each input feature corresponds to a neuron in the input layer, which feeds into one or more hidden layers that process the information before producing an output (the predicted price).
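A forward pass through such a network can be sketched as follows. Everything here is hypothetical: the weights are hand-picked for illustration (in practice they are learned from training data), the hidden layer has only two neurons, and location is assumed to have already been converted into a single numeric score (real categorical data would typically need an encoding such as one-hot).

```python
from typing import Callable, List, Optional

Vector = List[float]
Matrix = List[List[float]]

def relu(z: float) -> float:
    """ReLU activation: pass positive values through, zero out negatives."""
    return max(0.0, z)

def dense(inputs: Vector, weights: Matrix, biases: Vector,
          activation: Optional[Callable[[float], float]] = None) -> Vector:
    """A fully connected layer: row i of `weights` holds neuron i's weights."""
    outputs = []
    for row, b in zip(weights, biases):
        z = sum(w * x for w, x in zip(row, inputs)) + b
        outputs.append(activation(z) if activation is not None else z)
    return outputs

def predict_price(area: float, bedrooms: float, location_score: float) -> float:
    """Forward pass: 3 inputs -> 2 hidden neurons (ReLU) -> 1 output neuron."""
    x = [area, bedrooms, location_score]  # input layer: one value per feature
    # Hidden layer with hypothetical, hand-picked weights (normally learned).
    hidden = dense(x, [[1.0, 10.0, 0.0],
                       [0.5, 0.0, 20.0]], [0.0, 0.0], relu)
    # Output layer: a single linear neuron, as is common for regression.
    output = dense(hidden, [[100.0, 200.0]], [5000.0])
    return output[0]

print(predict_price(100.0, 3.0, 2.0))  # 36000.0
```

Adding more `dense` calls between the input and the output would add more hidden layers, which is the sense of "depth" in deep learning.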

Types of Neural Network Architectures

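There are several types of neural network architectures, each suited to specific tasks:

Standard Neural Networks: Also called fully connected feedforward networks, these are the simplest form of neural network, with every neuron in one layer connected to every neuron in the next. They suit structured, tabular data such as the house-price example above.

Convolutional Neural Networks (CNNs): Designed for grid-like data such as images, CNNs slide small learned filters across the input to detect local patterns, which makes them excel at image and video analysis.

Recurrent Neural Networks (RNNs): Designed for sequential data, RNNs carry a hidden state from one step to the next, which is why they are commonly used in natural language processing and time-series forecasting.

Autoencoders: Used for unsupervised learning tasks, autoencoders compress their input into a compact internal representation and then reconstruct the input from it, learning efficient representations of data in the process.

Transformer Networks: A breakthrough architecture built on the attention mechanism that has revolutionized natural language processing, enabling models like GPT and BERT.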
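To hint at how these architectures differ, the sketch below shows the core operation behind two of them in plain Python. The first is a 1-D convolution, where one small kernel slides across the input and reuses the same weights at every position (the idea CNNs apply to images in 2-D). The second is a minimal recurrent step, where each hidden state depends on both the current input and the previous state. The kernel values, weights, and function names are illustrative assumptions; real CNN and RNN layers add multiple channels, learned biases, and vector-valued states.

```python
import math
from typing import List

def conv1d(signal: List[float], kernel: List[float]) -> List[float]:
    """'Valid' 1-D convolution (computed as cross-correlation, as most deep
    learning libraries do): the same kernel weights are reused at every
    position of the input."""
    k = len(kernel)
    return [
        sum(kernel[j] * signal[i + j] for j in range(k))
        for i in range(len(signal) - k + 1)
    ]

def rnn_forward(sequence: List[float], w_x: float, w_h: float,
                b: float) -> List[float]:
    """Scalar RNN: h_t = tanh(w_x * x_t + w_h * h_{t-1} + b), with h_0 = 0."""
    h = 0.0
    states = []
    for x in sequence:
        h = math.tanh(w_x * x + w_h * h + b)  # new state mixes input and memory
        states.append(h)
    return states

# A [-1, 1] kernel responds where the signal steps up from 0 to 1.
print(conv1d([0.0, 0.0, 1.0, 1.0, 1.0], [-1.0, 1.0]))  # [0.0, 1.0, 0.0, 0.0]

# The second state is non-zero even though its input is 0: the RNN remembers.
print([round(h, 3) for h in rnn_forward([1.0, 0.0], 1.0, 1.0, 0.0)])  # [0.762, 0.642]
```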

Conclusion

In this article, we have explored the foundational aspects of deep learning, including the relationship between artificial intelligence, machine learning, and deep learning, the significance of data, computational power, and algorithmic advances, and the main neural network architectures. Understanding these concepts is essential for anyone looking to delve deeper into the world of deep learning and its applications.

Stay with us as we continue learning about deep learning.
