Generative AI fundamentals

Khushi Singh

What is Gen AI?

Non-Generative AI: No new content is generated. The model learns patterns from existing data and uses them to produce an output such as a label or a number. Examples: credit card fraud detection, salary prediction.

Generative AI: New content is generated. This is the category of AI concerned with producing content. Example: ChatGPT.

Gen AI evolution

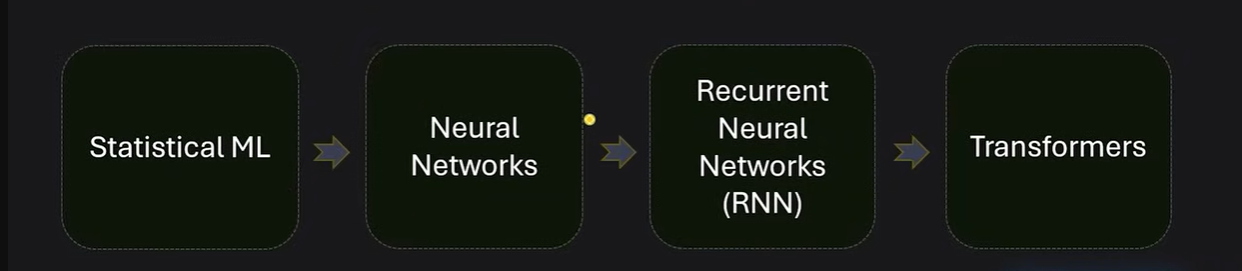

Statistical models: These deal with simple features for prediction.

Neural networks: This is deep learning, where the features are complex. Neural networks became important when models needed to understand image data. Image data has more complex features (for example, the same object in a rotated or inverted image), so identifying the features was tough, and this gave rise to neural networks.

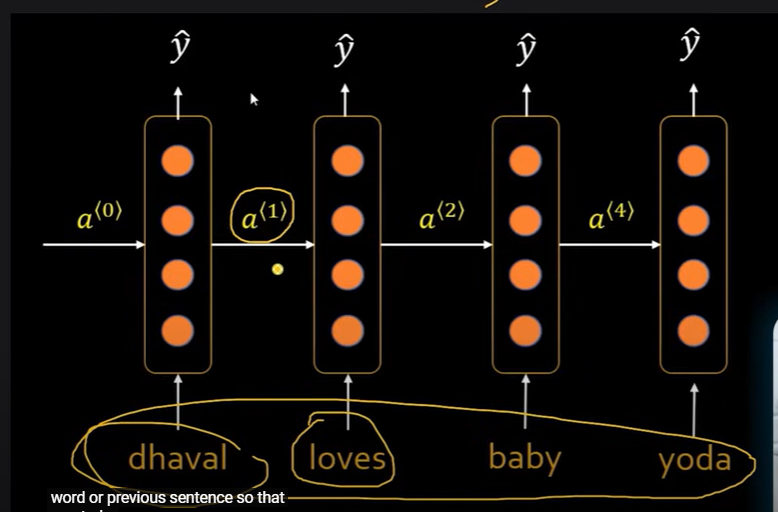

RNN: Recurrent neural networks are sequential models. The model processes text one word at a time, carrying forward what it has seen so far. This gave rise to language translators, which work the same way: they translate sequentially, producing each word based on the words that came before it.
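To make the "word by word" idea concrete, here is a minimal sketch of a recurrent update. It is a toy with a single number as the hidden state; the function names and the weights `w_x` and `w_h` are assumptions chosen for illustration, not values from any trained model.

```python
import math

def rnn_step(hidden, x, w_x=0.5, w_h=0.8):
    """One recurrent update: combine the new input with the previous hidden state.

    w_x and w_h are made-up weights; a real RNN learns them during training.
    """
    return math.tanh(w_x * x + w_h * hidden)

def run_rnn(inputs):
    """Feed the inputs one at a time, carrying the hidden state forward."""
    hidden = 0.0
    for x in inputs:
        hidden = rnn_step(hidden, x)
    return hidden

# The same two inputs in a different order leave the network in a different state,
# which is what lets a sequential model be sensitive to word order.
state_a = run_rnn([1.0, 0.0])
state_b = run_rnn([0.0, 1.0])
print(state_a, state_b)
```

Real RNNs use vectors and weight matrices instead of single numbers, but the loop has the same shape: each step sees the current input plus a summary of everything before it.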

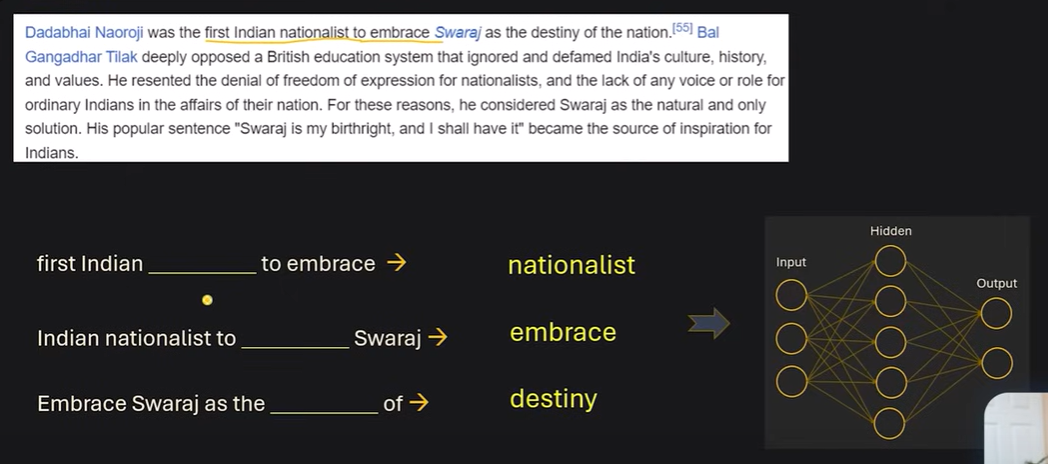

Gen AI: Generating content from incomplete input, such as autocomplete in emails. Autocomplete works by predicting the probability of the next word. This is how a language model works.

Language model: An AI model that can predict the next word (or set of words) for a sequence of words.
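As a rough illustration of next-word prediction, the sketch below builds a tiny bigram model: it counts which word follows which in a toy corpus and turns the counts into probabilities. This is a deliberately simple stand-in, not how a neural language model is implemented; the corpus and function names are made up for the example.

```python
from collections import Counter, defaultdict

def train_bigram_model(corpus):
    """Count how often each word is followed by each other word."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current_word, next_word in zip(words, words[1:]):
            counts[current_word][next_word] += 1
    return counts

def next_word_probabilities(counts, word):
    """Turn the follower counts for `word` into probabilities."""
    followers = counts.get(word.lower())
    if not followers:
        return {}
    total = sum(followers.values())
    return {w: c / total for w, c in followers.items()}

def predict_next_word(counts, word):
    """Return the most probable next word, or None if the word was never seen."""
    probs = next_word_probabilities(counts, word)
    if not probs:
        return None
    return max(probs, key=probs.get)

# Hypothetical toy corpus, standing in for email text.
toy_corpus = [
    "thank you for your email",
    "thank you for your time",
    "thank you for the update",
]
model = train_bigram_model(toy_corpus)
print(next_word_probabilities(model, "for"))
print(predict_next_word(model, "for"))
```

A bigram model only looks at one previous word. Neural language models follow the same principle of assigning probabilities to the next word, but condition on a much longer context.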

Here we do not need labeled data. Learning happens by generating training pairs from the given text itself: part of a sentence is the input, and the word that follows is the target. This type of learning is called self-supervised learning. The neural network to which these training pairs are fed is very complex.
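Generating training pairs from raw text can be sketched in a few lines. The function name and the `context_size` parameter below are assumptions for illustration; real pipelines work on tokens rather than whitespace-split words.

```python
def make_training_pairs(text, context_size=3):
    """Slide a window over the text: the context is the input, the next word is the target."""
    words = text.split()
    pairs = []
    for i in range(context_size, len(words)):
        context = words[i - context_size:i]
        target = words[i]
        pairs.append((context, target))
    return pairs

pairs = make_training_pairs("the cat sat on the mat", context_size=3)
for context, target in pairs:
    print(context, "->", target)
# ['the', 'cat', 'sat'] -> on
# ['cat', 'sat', 'on'] -> the
# ['sat', 'on', 'the'] -> mat
```

No human wrote the labels here. The targets come from the text itself, which is why this counts as self-supervised learning.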

When the number of neurons in these networks, and with it the number of parameters, is increased massively, the model becomes a large language model (LLM).

GPT-3, for example, has 175 billion parameters.

Transformers were introduced in the 2017 paper “Attention Is All You Need”.

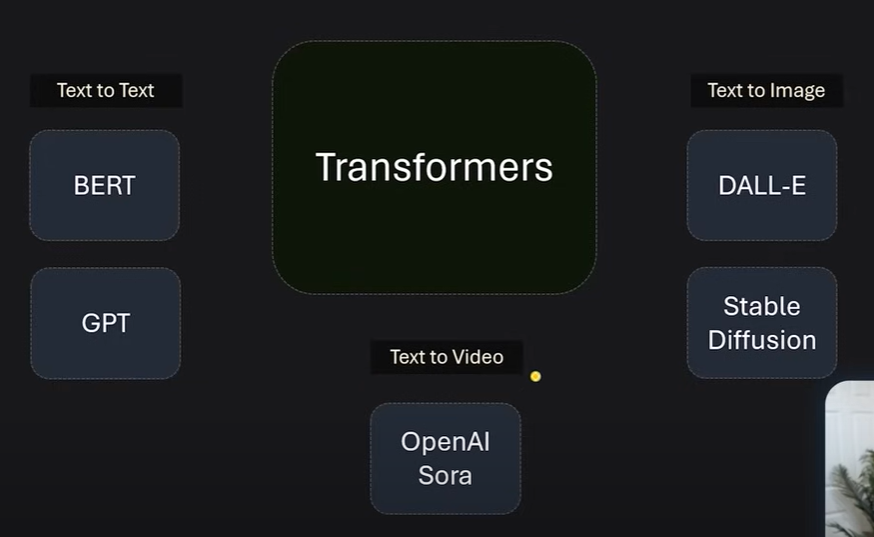

Transformers are the architecture behind modern generative language models such as GPT.

Transformers come in several types: encoder-only (such as BERT), decoder-only (such as GPT), and encoder-decoder (such as T5).

LLM

A Large Language Model (LLM) is a type of artificial intelligence (AI) that uses machine learning, specifically deep learning, to understand, interpret, and generate human language.

LLMs such as ChatGPT from OpenAI use reinforcement learning to improve their responses. They do not have subjective experience, emotions, or consciousness as humans do. They give responses based on the data they were trained on.

Embedding

These are numeric representations of text in the form of a vector that captures the meaning of the text.

Embeddings depend on context. Example: “What is the Apple revenue?” and “What are the calories in 1 apple?” The two sentences would be embedded differently, reflecting the different meaning of the word “apple” in each sentence.
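The idea that vectors can capture meaning is easier to see with numbers. The sketch below uses hand-made 3-dimensional vectors (an assumption for illustration; real embeddings come from a trained model and have hundreds or thousands of dimensions) and compares them with cosine similarity, a common way to measure how close two embeddings are.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: close to 1 means similar direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Made-up vectors; the three dimensions loosely stand for
# [business, food, technology]. These are NOT output from a real model.
apple_company = [0.9, 0.1, 0.8]   # "Apple" in "What is the Apple revenue?"
apple_fruit = [0.1, 0.9, 0.0]     # "apple" in "calories in 1 apple"
microsoft = [0.8, 0.0, 0.9]
banana = [0.0, 0.95, 0.05]

print(cosine_similarity(apple_company, microsoft))    # high: both are tech companies
print(cosine_similarity(apple_fruit, banana))         # high: both are fruits
print(cosine_similarity(apple_company, apple_fruit))  # low: same word, different meaning
```

With a contextual embedding model, the same word "apple" would land near "Microsoft" in one sentence and near "banana" in the other, which is the behavior the example sentences above describe.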

Vector Database
