The Psychology Behind Your Cybersecurity

Yarelys Rivera


You’ve probably heard (or read) variations of the saying, ‘A chain is only as strong as its weakest link.’ While its origins may be uncertain, it is especially true in cybersecurity. Humans are often considered exactly that weak link, a highly coercible target, and hackers know this well. That’s why they are actively trying to trick us.

As someone with a Master’s in Social Community Psychology transitioning into cybersecurity, I’ve found that understanding group dynamics and human behavior is a great complement to threat analysis and cyber defense. My experience as a program manager, where I oversaw the complexities of 24/7 residential shelters, has given me a unique lens for understanding human behavior in high-stakes situations, which I find particularly relevant to cybersecurity. During my time in the SANS Cyber Immersion Academy, where I took courses like SEC401 and SEC504, I found many parallels between psychology and cybersecurity. For example, these courses’ emphasis on baselines mirrored my work in 24/7 shelters, where anticipating deviations in routines was key to averting crises or, at the very least, regaining control of situations that could impact staff and clients.

Cybersecurity requires diverse skills, and I believe understanding human behavior is among the most critical. In this article, I’ll explore how integrating psychology, from cognitive biases to stress resilience, with insights from threat intelligence can help anticipate, identify, and mitigate threats. This is not about human error; it is about transforming the so-called ‘weakest link’ into a stronger defense.

Understanding Baselines: Normal vs. Abnormal

In psychology, establishing behavioral baselines is essential for identifying deviations that may indicate underlying issues. The same applies to cybersecurity: without knowing what normal network activity looks like, detecting anomalies becomes difficult. As Professor Bryan Simon emphasizes in the SANS SEC401 course: ‘Know thy systems!’ It is a principle that applies equally to understanding human behavior and network traffic.

Cybersecurity experts must understand what constitutes normal network activity to identify potential intrusions. This parallels behavioral assessments, where normal behavior must be established to spot anomalies. As a program manager overseeing two 24/7 residential shelters, I saw firsthand how ‘baselines’ operate in high-risk human environments. While humans aren’t identical to systems, the principle holds: knowing clients’ routines, staffing patterns, and facility dynamics allows us to detect risks early, whether a sudden shift in a client’s behavior or an unexpected staff absence. Just as a shelter’s stability depends on recognizing deviations from the norm, cybersecurity relies on spotting anomalies like unusual login times or data transfers in network traffic.

By continuously monitoring and analyzing network patterns, cybersecurity professionals can detect irregularities, much like psychologists or mental health therapists observe and interpret behavioral shifts. This detection is enhanced by threat intelligence, which reveals common adversary tactics, techniques, and procedures (TTPs) and their preferred methods of manipulation, for instance, using urgent language. Knowing which specific deviations attackers often cause allows defenders to align their baseline monitoring with likely malicious activity, transforming human observation into a security advantage. Drawing on my experience, I see threat intel as the equivalent of the client notes and past observations that help behavioral professionals anticipate future behavior and potential triggers: it gives cybersecurity experts a record of adversary tactics and motivations, allowing for a more informed and proactive defense.
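To make the baseline idea concrete, here is a minimal Python sketch that learns a user's usual login hours and flags a login that falls far outside them. The function names, the sample history, and the threshold of three standard deviations are all assumptions chosen for illustration; real monitoring tools use far richer signals.

```python
from statistics import mean, stdev

def build_baseline(login_hours):
    """Summarize historical login hours (0-23) as a mean and standard deviation."""
    return mean(login_hours), stdev(login_hours)

def is_anomalous(hour, baseline, threshold=3.0):
    """Flag a login whose hour is more than `threshold` standard deviations from the mean."""
    avg, spread = baseline
    if spread == 0:
        # Every past login happened at the same hour, so any other hour is a deviation.
        return hour != avg
    return abs(hour - avg) / spread > threshold

# Hypothetical history: this user normally logs in between 08:00 and 10:00.
history = [8, 9, 9, 10, 8, 9, 10, 9, 8, 9]
baseline = build_baseline(history)

print(is_anomalous(9, baseline))  # a typical morning login
print(is_anomalous(3, baseline))  # a 03:00 login, far from the usual routine
```

This sketch treats hours as plain numbers, so it would not handle routines that wrap around midnight. It is only meant to show the principle: you cannot call something abnormal until you have written down what normal looks like.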

Recommended Reading:

- "Intrusion Detection" by Rebecca Gurley Bace: This book goes deep into methods for setting up and maintaining network baselines for security purposes.

Social Engineering: Exploiting Trust, Authority, and Habituation

While understanding normal system and network behavior is crucial, attackers also exploit our understanding of 'normal' human interactions and tendencies to manipulate us through social engineering.

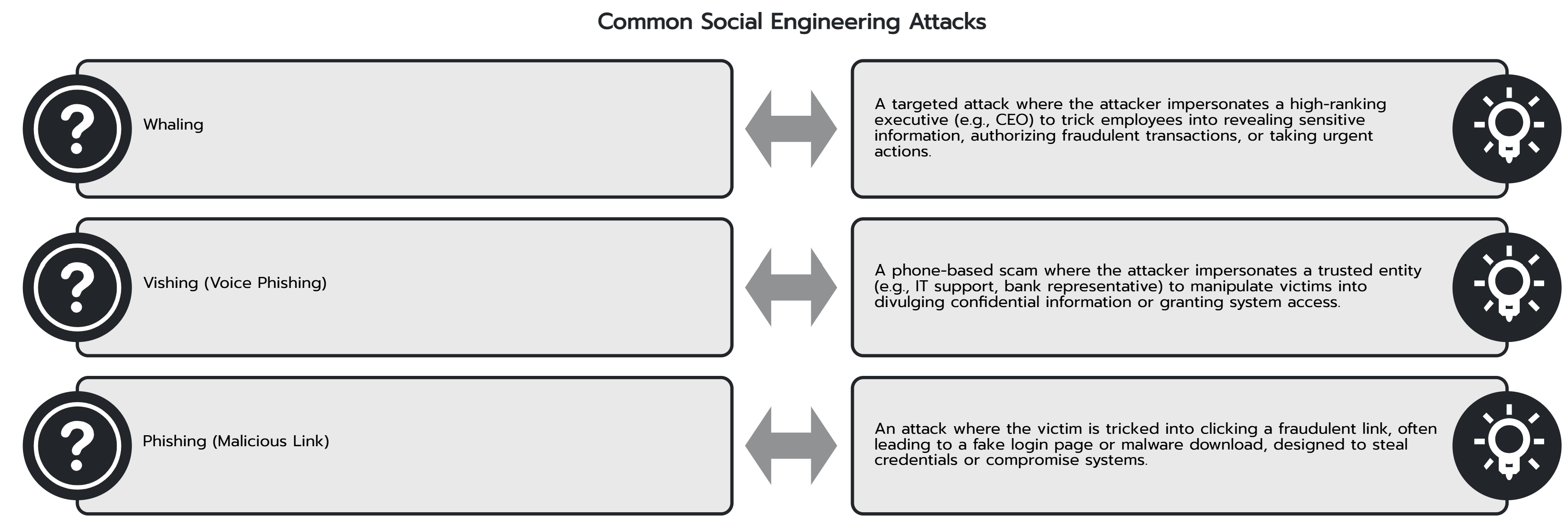

Social engineering attacks exploit psychological principles to coerce us into, for example, revealing confidential information or doing something on their behalf. Knowledge of psychological tactics, such as trust, authority, and social proof, can help cybersecurity professionals better defend against these attacks. Let’s see some case study examples:

Seagate Technology (2016)

In 2016, Seagate Technology fell victim to a sophisticated phishing attack. The attackers employed a tactic known as "whaling," impersonating Seagate CEO Stephen Luczo in an email to the HR department. The email requested W-2 forms and personally identifiable information (PII) of all employees. Believing it was legitimate, the HR employee complied. Just like that, they went from being a regular staff member doing their job to becoming an unintentional insider threat, as they provided sensitive information to threat actors. It is a great reminder that even well-meaning folks can become vectors for attacks.

Democratic National Committee (DNC, 2016)

APT28 (Fancy Bear), a Russian state-sponsored group, breached the Democratic National Committee in 2016 using spear-phishing emails disguised as legitimate Google security alerts. Staff were tricked into entering credentials on fake login pages, a tactic known as "credential harvesting." This attack exploited the affect heuristic (trusting a familiar brand like Google and overlooking subtle anomalies such as mismatched URLs) as well as authority bias (assuming a message is legitimate because of Google’s credibility).

Similar risks arise from habituation, a psychological phenomenon in which repeated exposure to ‘normal’ prompts leads users to prioritize convenience over caution. For example:

- Clicking "OK" on Microsoft’s User Account Control (UAC) prompts without reading them.

- Granting data access to services or apps via, for example, Google sign-in without reviewing permissions.

Attackers exploit this reduced scrutiny by mimicking trusted interfaces, knowing users will likely bypass the defenses in place.
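The "mismatched URLs" mentioned in the DNC example are something software can check even when a tired human does not. Below is a minimal Python sketch, assuming a hypothetical allowlist of trusted domains and made-up example links, that tests whether a link's hostname really belongs to the brand it claims to be.

```python
from urllib.parse import urlparse

# Hypothetical allowlist; a real deployment would maintain its own.
TRUSTED_DOMAINS = {"google.com"}

def is_trusted_link(url, trusted_domains=TRUSTED_DOMAINS):
    """Return True only if the URL's hostname is a trusted domain or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in trusted_domains)

# Illustrative links: the second and third only look like Google at a glance.
links = [
    "https://accounts.google.com/signin",
    "https://accounts.google.com.security-check.example/signin",
    "http://google.com@evil.example/login",
]
for link in links:
    print(link, "->", "trusted" if is_trusted_link(link) else "SUSPICIOUS")
```

The check compares the end of the hostname rather than searching for the brand name anywhere in the URL, because attackers count on readers stopping at the first familiar word.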

MGM Resorts Hack (2023)

In 2023, MGM Resorts faced a ransomware attack by Scattered Spider. The attack relied on a common social engineering technique: vishing (phishing over the phone).

How? By impersonating an MGM Resorts employee in a call to the IT help desk, the attackers obtained administrator privileges to MGM's Okta and Azure tenant environments. As a result of the attack, MGM experienced disruptions to essential services, including reservation systems and digital room keys.

These incidents demonstrate the critical need for robust cybersecurity awareness and training programs to mitigate social engineering threats effectively. This training becomes far more effective when informed by threat intelligence. Understanding how specific adversaries craft their campaigns—knowing their preferred social engineering playbooks—allows organizations to tailor awareness programs. Instead of generic warnings, training can focus on countering the actual tactics employees are likely to face, empowering them to actively protect themselves and the organization. By teaching employees what to look for, we create a much more resilient defense, a human-centric approach focused on increasing their awareness and providing specific skills to spot these attacks.

For a deeper dive into the psychological principles behind these attacks, see the Cognitive Biases section below.

Practical Tips:

- Train everyone regularly, and educate yourself, on the methods of social engineering and how to recognize them! The tricks are multiplying by the minute, given generative AI and all the tools now available.

- Use what is known about real attacker tactics (that's where threat intelligence comes in!) to create simulated phishing campaigns.

- Foster a culture of skepticism and verification, encouraging employees to double-check unexpected requests for sensitive information.

Something to read:

- "Social Engineering: The Art of Human Hacking" by Christopher Hadnagy: This book explores the psychological tactics used in social engineering and how to counteract them.

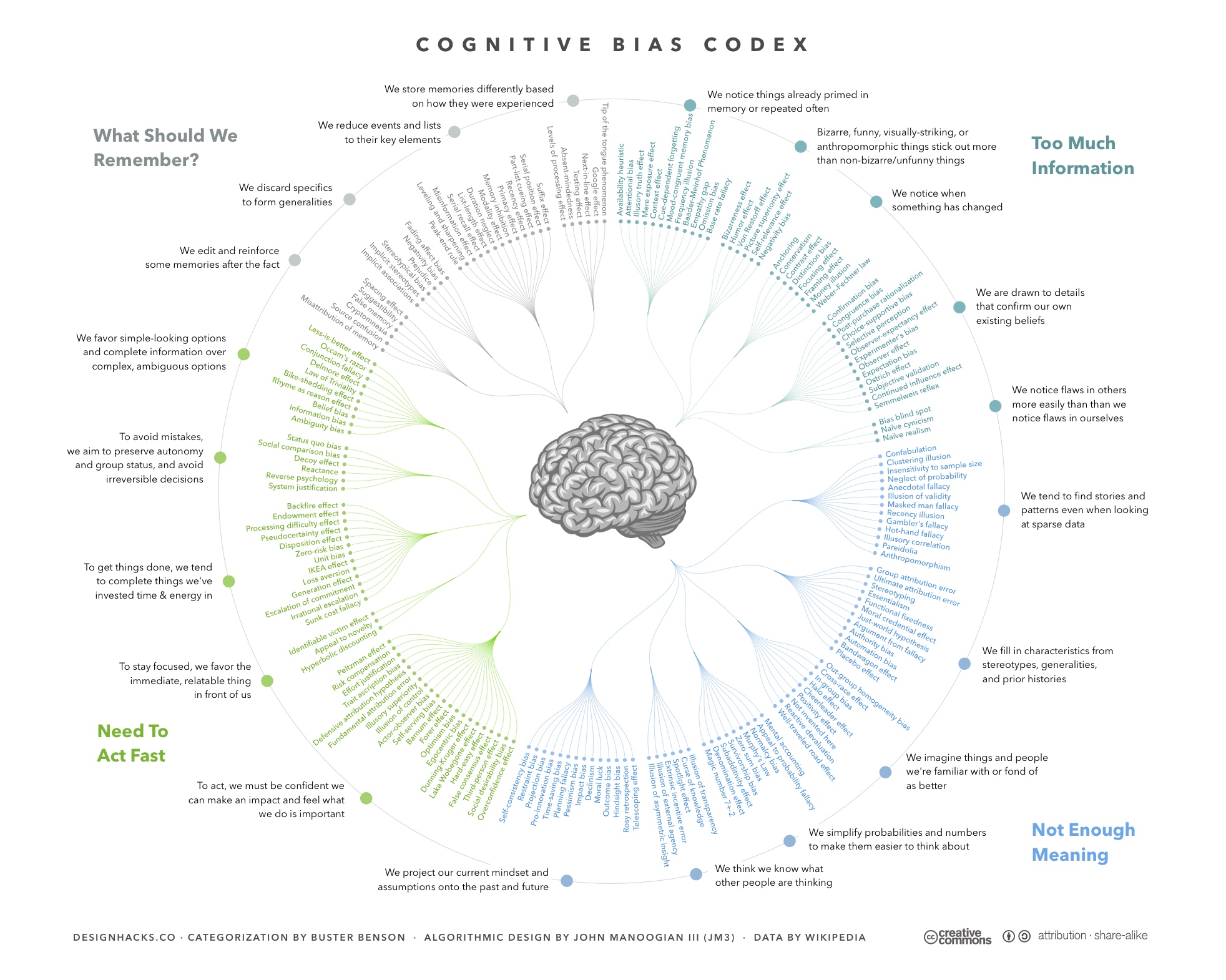

Cognitive Biases and Decision-Making

Cognitive biases can cloud judgment in any field, but their impact on cybersecurity decision-making is particularly critical, as they influence everything from threat analysis to incident response.

Let’s explore some cognitive biases that can impact cyber defenses:

Authority Bias: This is the tendency to comply with requests from perceived authority figures without verification. Psychologists like Stanley Milgram demonstrated how authority figures can override personal judgment, a principle that attackers exploit in social engineering. In the MGM breach, for example, attackers pretended to be an employee calling the IT help desk. Similarly, in the Seagate attack, HR staff complied with a fake email from the “CEO” requesting sensitive employee data.

Anchoring: This bias occurs when we rely too heavily on the first piece of information we find. For instance, if a Chief Information Security Officer (CISO) prioritizes a specific cyber threat, other team members may focus solely on that threat, neglecting other potential risks.

Availability Heuristic: Security teams often assess risks based on recent experiences or industry trends. This can result in overlooking less obvious threats that do not fit the familiar pattern. For example, while security teams might proactively prioritize defenses against the threat of ransomware attacks, this could also lead to overlooking other significant vulnerabilities or attack vectors that aren't trending yet.

Affect Heuristic: This mental shortcut is heavily influenced by emotions. For example, if security staff feel confident about a situation, they may perceive it as low risk and fail to investigate thoroughly, potentially overlooking significant threats.

Optimism Bias: Many people, including security teams, believe that because they have security measures in place, they are immune to attacks. This “it won’t happen to us” mentality can lead to complacency and underpreparedness. Threat intelligence directly counters this bias by presenting concrete evidence of real-world risks, such as sector-specific targeting trends. This helps teams to address likely attack vectors based on evidence, rather than relying on potentially dangerous assumptions. For more real-world examples, see this article about optimism bias in Cybersecurity.

Decision Fatigue: Continuous decision-making is exhausting for anyone. In Security Operations Centers (SOCs), for instance, overwhelmed or fatigued staff may ignore alerts from security tools, potentially missing critical threats.
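One way to lighten the decision load described under Decision Fatigue is to collapse duplicate alerts and surface the most severe ones first, so analysts make fewer, better-ordered decisions. The Python sketch below is only an illustration: the alert fields, severity labels, and sample data are assumptions, not the format of any particular security tool.

```python
from collections import Counter

# Assumed severity labels, ordered from most to least urgent.
SEVERITY_ORDER = {"critical": 0, "high": 1, "medium": 2, "low": 3}

def triage(alerts):
    """Collapse duplicate (rule, host, severity) alerts, then sort by severity and count."""
    counts = Counter((a["rule"], a["host"], a["severity"]) for a in alerts)
    grouped = [
        {"rule": rule, "host": host, "severity": severity, "count": count}
        for (rule, host, severity), count in counts.items()
    ]
    # Most severe first; among equals, the noisiest group first.
    return sorted(grouped, key=lambda g: (SEVERITY_ORDER[g["severity"]], -g["count"]))

# Hypothetical raw alert stream: five alerts, three of them duplicates.
raw_alerts = [
    {"rule": "Failed login", "host": "web-01", "severity": "low"},
    {"rule": "Failed login", "host": "web-01", "severity": "low"},
    {"rule": "Malware detected", "host": "hr-laptop-7", "severity": "critical"},
    {"rule": "Failed login", "host": "web-01", "severity": "low"},
    {"rule": "Port scan", "host": "web-01", "severity": "medium"},
]

queue = triage(raw_alerts)
for item in queue:
    print(item)
```

Here five raw alerts become three decisions, with the critical one at the top of the queue.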

Practical Tips:

- Build a culture where we all feel comfortable asking questions and reviewing each other's work. It's about having each other's backs and being open to different ways of seeing things – challenging assumptions is key!

- Use structured techniques such as "Analysis of Competing Hypotheses" (p. 95 of Heuer's "Psychology of Intelligence Analysis," listed below). It's a way to consider all the possible explanations instead of sticking with the first one that makes sense, which encourages a thorough analysis.

- Keep learning and be ready to adapt when new information pops up. Let's not get too stuck on initial thoughts! Staying curious and open to new evidence is crucial.

- Leverage information about cognitive biases to improve decision-making. For example, peer reviews help us catch our blind spots.
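The Analysis of Competing Hypotheses mentioned in the tips above can be sketched as a small matrix: each piece of evidence is rated against every hypothesis, and the hypothesis with the least inconsistent evidence is the one left standing. The Python example below is a simplified illustration; the scenario, hypotheses, and ratings are invented, and the full method involves more steps than this scoring.

```python
# Ratings: "C" = consistent, "I" = inconsistent, "N" = neutral / not applicable.
# All evidence and hypotheses below are hypothetical.
matrix = {
    "Login from a new country at 03:00": {
        "Compromised account": "C", "Employee traveling": "C", "VPN misconfiguration": "C",
    },
    "Employee badge used on-site the same day": {
        "Compromised account": "C", "Employee traveling": "I", "VPN misconfiguration": "C",
    },
    "MFA prompt approved after repeated pushes": {
        "Compromised account": "C", "Employee traveling": "N", "VPN misconfiguration": "I",
    },
}

def inconsistency_scores(matrix):
    """Count, for each hypothesis, how many pieces of evidence contradict it."""
    scores = {}
    for ratings in matrix.values():
        for hypothesis, rating in ratings.items():
            scores[hypothesis] = scores.get(hypothesis, 0) + (1 if rating == "I" else 0)
    return scores

def rank_hypotheses(matrix):
    """Order hypotheses from least to most contradicted by the evidence."""
    return sorted(inconsistency_scores(matrix).items(), key=lambda kv: kv[1])

ranked = rank_hypotheses(matrix)
for hypothesis, score in ranked:
    print(f"{hypothesis}: {score} inconsistent item(s)")
```

The scoring counts contradictions rather than support on purpose: evidence that fits every hypothesis, like the first row, does nothing to tell them apart, which is exactly the trap anchoring sets for us.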

Something to read:

- "Thinking, Fast and Slow" by Daniel Kahneman: Kahneman presents various cognitive biases and their impacts on decision-making, offering valuable insights for anyone, including cybersecurity folks!

- "Psychology of Intelligence Analysis" by Richard J. Heuer, Jr.: The author explores the psychological factors that can affect intelligence analysis, providing insights for cyber threat intelligence professionals looking to improve their analytical skills and objectivity.

Stress Management and Resilience

The high-pressure nature of cybersecurity work can lead to burnout and decreased performance. Applying psychological principles of stress management and resilience can help professionals maintain their mental health and perform effectively under pressure.

As a program manager in a 24/7 residential facility, I witnessed firsthand how chronic stress affects performance. Social workers and managers faced relentless demands: staffing shortages, traumatic cases, overnight emergencies, and the emotional toll of clients’ crises. Burnout wasn’t just a buzzword. Based on my research, cybersecurity incident responders face similar challenges: high-stakes alerts, sleepless on-call rotations, and the pressure to mitigate threats in real time.

My experience taught me that resilience isn’t just individual; it’s systemic. Just as we implemented team discussions after a crisis and mandatory downtime for staff to process high-stress events in social services, cybersecurity teams need similar support. Without it, even the most skilled professional risks becoming the next ‘insider threat’, not from malice, but from exhaustion.

Just as shelters use debriefings as part of a broader strategy to build resilience, cybersecurity teams can implement structured support systems to maintain vigilance and prevent burnout.

Practical Tips:

- Implement a range of stress management resources, such as regular workshops on coping strategies, physical wellness activities, and access to mental health support services.

- Encourage a balanced work-life environment to prevent burnout.

- Develop a support system within the team to share the workload and provide emotional support during high-pressure situations.

- Build peer mentoring systems, a tactic rooted in community psychology, to distribute workloads and combat decision fatigue.

Something to read:

- "The Resilient Practitioner: Burnout Prevention and Self-Care Strategies for Counselors, Therapists, Teachers, and Health Professionals" by Thomas M. Skovholt and Michelle Trotter-Mathison: Although focused on health professionals, the principles of resilience and self-care are highly relevant to cybersecurity.

Conclusion

Ultimately, recognizing the impact of human behavior, informed by psychological principles, is essential for building a truly resilient cybersecurity posture that complements technical defenses. My journey, from social-community psychology and managing high-risk human environments (like shelters) to my cybersecurity training and certifications, has shown me clear parallels between understanding human dynamics in crisis and responding effectively to cyber incidents, an insight reinforced by my studies and research. I hope that this integrated perspective inspires a more human-centered approach to security, leading to stronger and more adaptable defenses.

Security isn’t just about technology; it’s about people. A truly resilient cybersecurity strategy doesn’t just defend against threats—it anticipates them by understanding human behavior. In this effort, threat intelligence acts as a vital bridge, providing defenders with insights into how adversaries are actively exploiting human psychology, allowing us to anticipate and proactively strengthen our defenses, transforming the 'weakest link' into a powerful asset.

So, the next time you think about cybersecurity, ask yourself: Are you securing just the system, or also the people who interact with it every day?


Written by

Yarelys Rivera


Welcome to CyberYara! I created this space to share insights on cybersecurity, programming, and the latest in tech. With a background in cybersecurity, leadership, and technology, I enjoy breaking down complex topics and making them accessible to a wider audience. My journey into cybersecurity began when I witnessed firsthand how frequent phishing attacks disrupted an organization I worked for. That experience led me to dive deep into security practices, earning certifications like GFACT, GSEC, and GCIH after intensive training at SANS Cyber Academy. I also completed the Google Cybersecurity Certificate and hold a Scrum Master Certification (PSM I). Beyond cybersecurity, I enjoy learning and sharpening my technical skills in Python, SQL, HTML & CSS, and AI. I also have extensive experience in operations and leadership, having managed diverse teams and ensured compliance across multiple projects. My background in journalism and psychology gives me a unique perspective on tech—how we communicate it, how we secure it, and how it impacts people. On this blog, you’ll find practical cybersecurity tips, programming tutorials, discussions on tech trends, and more. Whether you’re a beginner or a seasoned professional, I hope CyberYara sparks curiosity, learning, and meaningful conversations. Thanks for stopping by!