How CycleGAN is Revolutionizing AI-Powered Image Transformation

Omkar Thorve

Table of contents

- Understanding Image-to-Image Translation

- The Architecture of CycleGAN

- Real-World Applications of CycleGAN

- Final Thoughts on CycleGAN

- Frequently Asked Questions (FAQs)

- Q1: What makes CycleGAN different from traditional GANs?

- Q2: Can CycleGAN work with any two image domains?

- Q3: How does CycleGAN handle the problem of mode collapse?

- Q4: What are the computational requirements for training a CycleGAN?

- Q5: How does CycleGAN compare to newer image translation models?

- Q6: Can CycleGAN be used for video transformation?

- Q7: What ethical considerations should be kept in mind when using CycleGAN?

- Q8: How can I implement CycleGAN for my own projects?

In recent years, Generative Adversarial Networks (GANs) have emerged as powerful tools for image generation and transformation. Among these innovative architectures, CycleGAN stands out as a revolutionary approach that has significantly advanced the field of AI-powered image transformation. This article explores how CycleGAN is changing the landscape of image processing, its unique architecture, and its real-world applications.

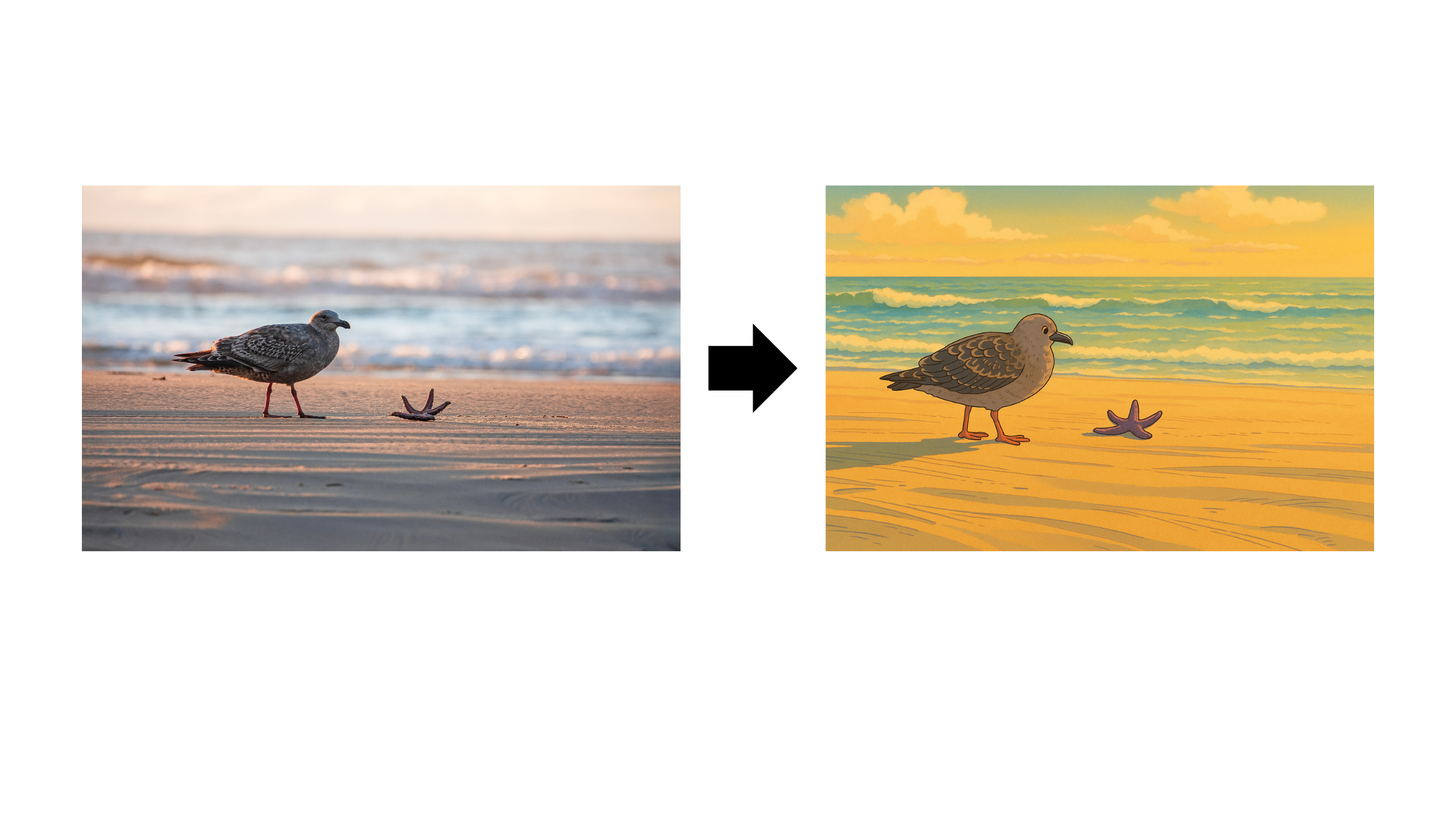

Understanding Image-to-Image Translation

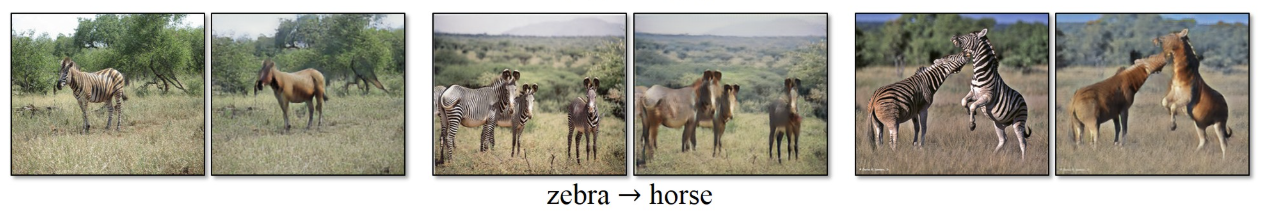

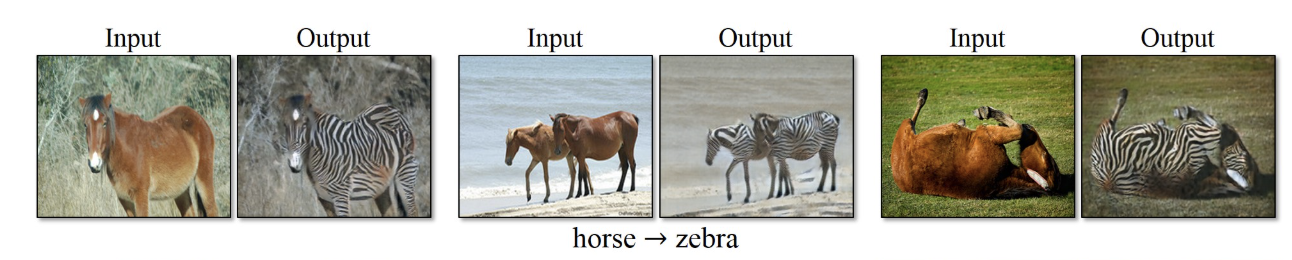

Image-to-image translation refers to the process of converting an image from one domain to another while preserving its content but altering its style. For example, a photo of a zebra can be turned into a photo of a horse, or a horse into a zebra.

Image to Image Translation

Pix2Pix GAN: Paired Image-to-Image Translation

Before diving into CycleGAN, it's important to understand its predecessor, Pix2Pix. Developed as one of the first successful frameworks for image-to-image translation, Pix2Pix relies on paired data for training. This means that for each input image, there must be a corresponding target image that represents the desired output.

Pix2Pix works exceptionally well when such paired datasets are available, producing high-quality transformations by directly learning the mapping from input to output images. The network learns to generate images that not only look realistic but also correspond to the input images in a meaningful way.

The Difference Between Paired and Unpaired Image-to-Image Translation

The fundamental difference between paired and unpaired image-to-image translation lies in the training data requirements:

Paired and Unpaired Data Source

Paired Translation (Pix2Pix):

- Requires corresponding images from both domains (X → Y)

- Example: Satellite photos paired with corresponding map views

- Advantage: More precise transformations

- Limitation: Acquiring paired datasets is often difficult, expensive, or impossible

Unpaired Translation (CycleGAN):

- Works with two separate sets of images from domains X and Y, without one-to-one correspondences

- Example: Collection of horse images and collection of zebra images (not matched pairs)

- Advantage: Significantly more flexible, as paired data is rarely available

- Challenge: Ensuring that generated images maintain the content of the input while adopting the style of the target domain
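The difference in data requirements can be made concrete with a small sketch. The function names and the string "images" below are hypothetical placeholders, not part of Pix2Pix or CycleGAN; the point is only that the paired loader must keep indices aligned, while the unpaired loader samples each domain independently and the two collections may even differ in size.

```python
import random


def paired_batches(domain_x, domain_y, batch_size, rng):
    """Paired setting (Pix2Pix-style): item i in X corresponds to item i in Y."""
    if len(domain_x) != len(domain_y):
        raise ValueError("paired data needs exactly one target per input")
    indices = list(range(len(domain_x)))
    rng.shuffle(indices)
    for start in range(0, len(indices), batch_size):
        chunk = indices[start:start + batch_size]
        # The same index is used for both domains, so pairs stay aligned.
        yield [(domain_x[i], domain_y[i]) for i in chunk]


def unpaired_batches(domain_x, domain_y, batch_size, num_batches, rng):
    """Unpaired setting (CycleGAN-style): X and Y are sampled independently."""
    for _ in range(num_batches):
        xs = [rng.choice(domain_x) for _ in range(batch_size)]
        ys = [rng.choice(domain_y) for _ in range(batch_size)]
        # No correspondence between xs[k] and ys[k] is assumed.
        yield xs, ys


rng = random.Random(0)
satellite = ["sat_0", "sat_1", "sat_2", "sat_3"]
maps = ["map_0", "map_1", "map_2", "map_3"]
horses = ["horse_0", "horse_1", "horse_2"]
zebras = ["zebra_0", "zebra_1", "zebra_2", "zebra_3", "zebra_4"]

paired = list(paired_batches(satellite, maps, 2, rng))
unpaired = list(unpaired_batches(horses, zebras, 2, 3, rng))
```

Here every tuple in `paired` shares an index across the two domains, whereas each entry of `unpaired` is simply a batch of horses next to an unrelated batch of zebras.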

This difference is crucial because in many real-world scenarios, paired training data is simply not available. According to Engineering Nordeus, while CycleGAN may not always achieve the same level of precision as Pix2Pix in paired scenarios, its ability to function without paired data opens up a vast range of previously impossible applications.

The Architecture of CycleGAN

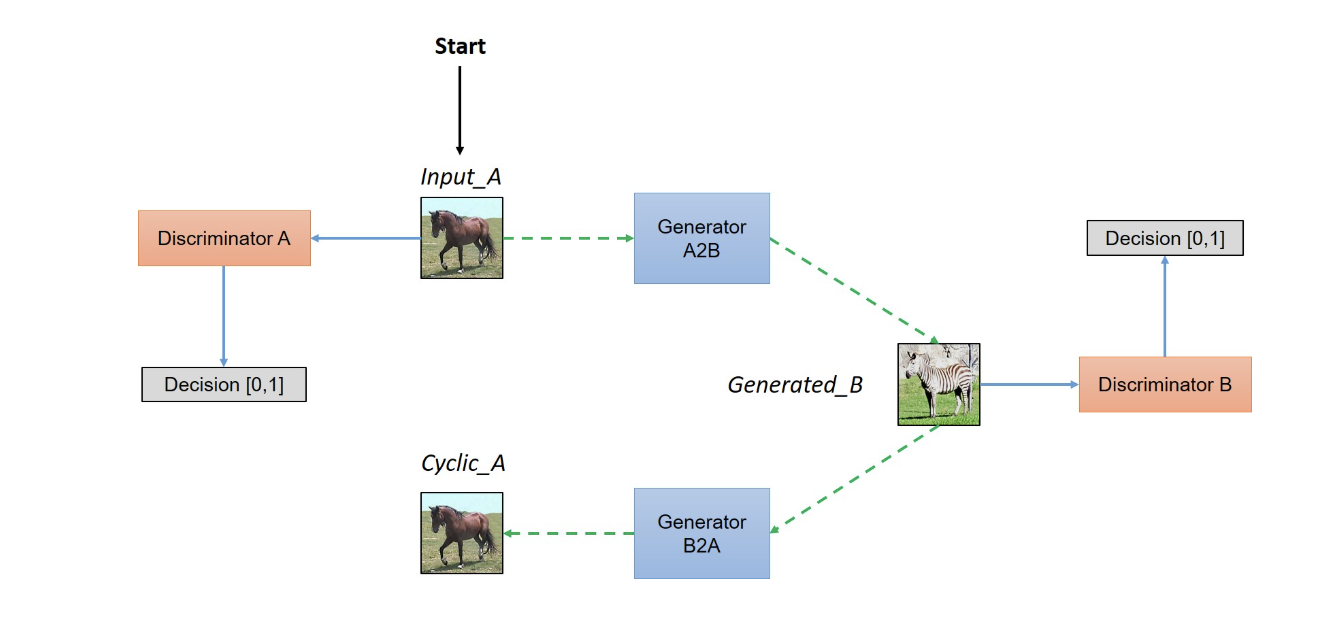

CycleGAN, introduced by Jun-Yan Zhu and colleagues, addresses the challenge of unpaired image-to-image translation through an innovative architecture consisting of several key components.

Architecture of CycleGAN

Key Components of CycleGAN

Dual Generators:

Generator G: Translates images from domain X to domain Y

Generator F: Translates images from domain Y to domain X

Dual Discriminators:

Discriminator Dx: Distinguishes between real images from domain X and translated images F(Y)

Discriminator Dy: Distinguishes between real images from domain Y and translated images G(X)

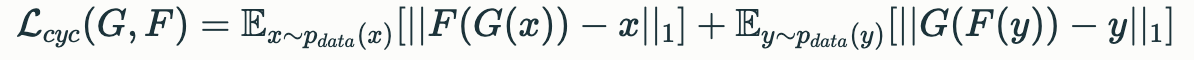

Cycle Consistency Loss:

Forward cycle consistency: For x ∈ X, F(G(x)) ≈ x

Backward cycle consistency: For y ∈ Y, G(F(y)) ≈ y

The architecture is designed as two "cycles" that work together to ensure the translation preserves the essential content while changing the style appropriately.
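How the four networks connect can be sketched in a few lines of plain Python. Everything here is a toy stand-in: the "images" are short lists of floats, and `G`, `F`, `d_x`, and `d_y` are hand-written functions rather than trained networks. The adversarial terms use a least-squares form, which the CycleGAN paper reports using in place of the log-likelihood objective; treat the exact scaling as an assumption of this sketch.

```python
from typing import Callable, Dict, List

Image = List[float]  # toy "image": a flat list of pixel intensities in [0, 1]
Generator = Callable[[Image], Image]
Discriminator = Callable[[Image], float]  # score near 1.0 means "looks real"


def translate_both_ways(x: Image, y: Image, G: Generator, F: Generator) -> Dict[str, Image]:
    """Run both cycles: X -> Y -> X and Y -> X -> Y."""
    fake_y = G(x)
    fake_x = F(y)
    return {
        "fake_y": fake_y,
        "fake_x": fake_x,
        "rec_x": F(fake_y),  # reconstruction of x after the forward cycle
        "rec_y": G(fake_x),  # reconstruction of y after the backward cycle
    }


def discriminator_loss(D: Discriminator, real: Image, fake: Image) -> float:
    """Least-squares loss: push D(real) toward 1 and D(fake) toward 0."""
    return (D(real) - 1.0) ** 2 + D(fake) ** 2


def generator_adversarial_loss(D: Discriminator, fake: Image) -> float:
    """The generator wants the discriminator to score its output as real."""
    return (D(fake) - 1.0) ** 2


# Hypothetical stand-ins: domain Y is simply a "brighter" version of domain X.
def mean(img: Image) -> float:
    return sum(img) / len(img)

def G(x: Image) -> Image:
    return [min(1.0, p + 0.5) for p in x]

def F(y: Image) -> Image:
    return [max(0.0, p - 0.5) for p in y]

def d_y(img: Image) -> float:
    return min(1.0, max(0.0, mean(img)))  # brighter images look more like Y

def d_x(img: Image) -> float:
    return 1.0 - d_y(img)  # darker images look more like X


x = [0.0, 0.25, 0.5]
y = [0.5, 0.75, 1.0]
out = translate_both_ways(x, y, G, F)
loss_d_y = discriminator_loss(d_y, real=y, fake=out["fake_y"])
loss_d_x = discriminator_loss(d_x, real=x, fake=out["fake_x"])
loss_g = generator_adversarial_loss(d_y, out["fake_y"])
loss_f = generator_adversarial_loss(d_x, out["fake_x"])
```

In a real training loop the generators and discriminators would be neural networks updated in alternation, and the generator objective would also include the cycle consistency term.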

The Role of Cycle Consistency Loss in CycleGAN

The cycle consistency loss is perhaps the most innovative aspect of CycleGAN's architecture and is central to its success in unpaired image-to-image translation. This loss function enforces a simple principle: if we translate an image from domain X to domain Y and then back to domain X, we should arrive close to the original image.

Mathematically, this is expressed as:

Cycle-Consistency Loss (Source)
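In the notation used above, the cycle consistency loss defined in the original CycleGAN paper (Zhu et al., 2017) sums an L1 reconstruction error over the forward and backward cycles:

$$
\mathcal{L}_{\text{cyc}}(G, F) =
\mathbb{E}_{x \sim p_{\text{data}}(x)}\big[\lVert F(G(x)) - x \rVert_1\big]
+ \mathbb{E}_{y \sim p_{\text{data}}(y)}\big[\lVert G(F(y)) - y \rVert_1\big]
$$

The first term corresponds to the forward cycle F(G(x)) ≈ x and the second to the backward cycle G(F(y)) ≈ y.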

Cycle consistency loss matters for several reasons:

Preserves Content: Without paired data to guide the translation, the cycle consistency loss ensures that the content and structure of the original image are maintained.

Discourages Mode Collapse: It penalizes generators that map all input images to the same output image, a common problem in GAN training, because a single collapsed output cannot be translated back into each distinct input.

Enables Learning Without Direct Supervision: As noted on the official CycleGAN project page, this approach allows learning to translate between domains without any paired examples.

Constrains the Space of Possible Mappings: There are infinitely many mappings that could transform one domain to another; cycle consistency narrows these down to mappings that preserve the underlying structure.

According to researchers at Haiku Tech Center, the cycle consistency loss is what enables CycleGAN to learn meaningful mappings without paired data, effectively solving what would otherwise be an ill-posed problem.
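A minimal sketch of the loss itself, assuming toy images stored as lists of floats and using the mean absolute error as a per-pixel-averaged L1 distance. The generators below are hypothetical hand-written functions chosen so the arithmetic is easy to follow: `negative` inverts intensities and is its own inverse, while `negative_and_flip` also reverses the pixel order, which `negative` alone does not undo.

```python
from typing import Callable, List, Sequence

Image = List[float]
Generator = Callable[[Image], Image]


def mean_abs_error(a: Image, b: Image) -> float:
    """Per-pixel-averaged L1 distance between two images of equal size."""
    if len(a) != len(b):
        raise ValueError("images must have the same number of pixels")
    return sum(abs(p - q) for p, q in zip(a, b)) / len(a)


def cycle_consistency_loss(batch_x: Sequence[Image], batch_y: Sequence[Image],
                           G: Generator, F: Generator) -> float:
    """Forward term compares F(G(x)) with x; backward term compares G(F(y)) with y."""
    forward = sum(mean_abs_error(F(G(x)), x) for x in batch_x) / len(batch_x)
    backward = sum(mean_abs_error(G(F(y)), y) for y in batch_y) / len(batch_y)
    return forward + backward


def negative(img: Image) -> Image:
    return [1.0 - p for p in img]

def negative_and_flip(img: Image) -> Image:
    return negative(img)[::-1]  # also scrambles the spatial layout


batch_x = [[0.0, 0.25, 1.0]]
batch_y = [[1.0, 0.5, 0.0]]

# G and F are exact inverses: both cycles reconstruct their input.
consistent = cycle_consistency_loss(batch_x, batch_y, G=negative, F=negative)

# G changes the layout and F does not undo it: reconstructions drift from the inputs.
inconsistent = cycle_consistency_loss(batch_x, batch_y, G=negative_and_flip, F=negative)
```

The consistent pair gives a loss of zero, while the pair that alters the layout without reversing it is penalized, which is the sense in which the loss favors content-preserving mappings.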

Real-World Applications of CycleGAN

CycleGAN's ability to perform unpaired image-to-image translation has opened up numerous practical applications across various fields:

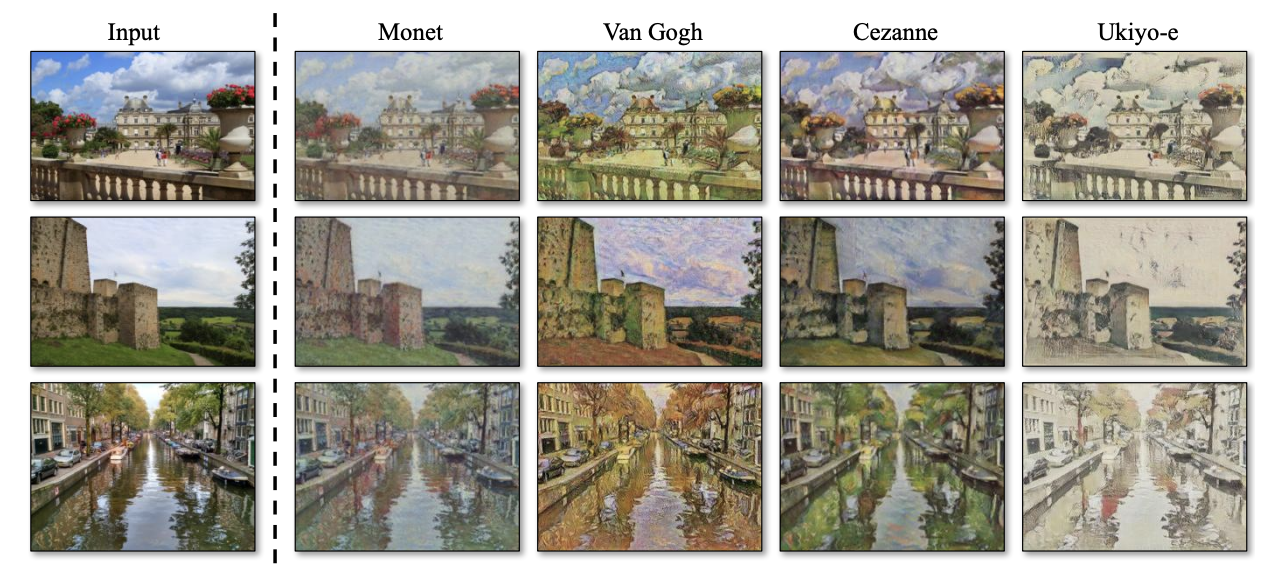

1. Style Transfer and Art Generation

Collection style transfer (Source)

One of the most visually striking applications of CycleGAN is in artistic style transfer:

Photo-to-Painting Conversion: CycleGAN can transform ordinary photographs into images that mimic the style of famous artists like Monet, Van Gogh, or Cézanne.

Season Transfer: Converting summer scenes to winter scenes and vice versa.

Time-of-Day Transformation: Turning daytime photos into nighttime scenes without paired data.

This capability has been embraced by digital artists, content creators, and even photography applications that offer artistic filters based on similar technology.

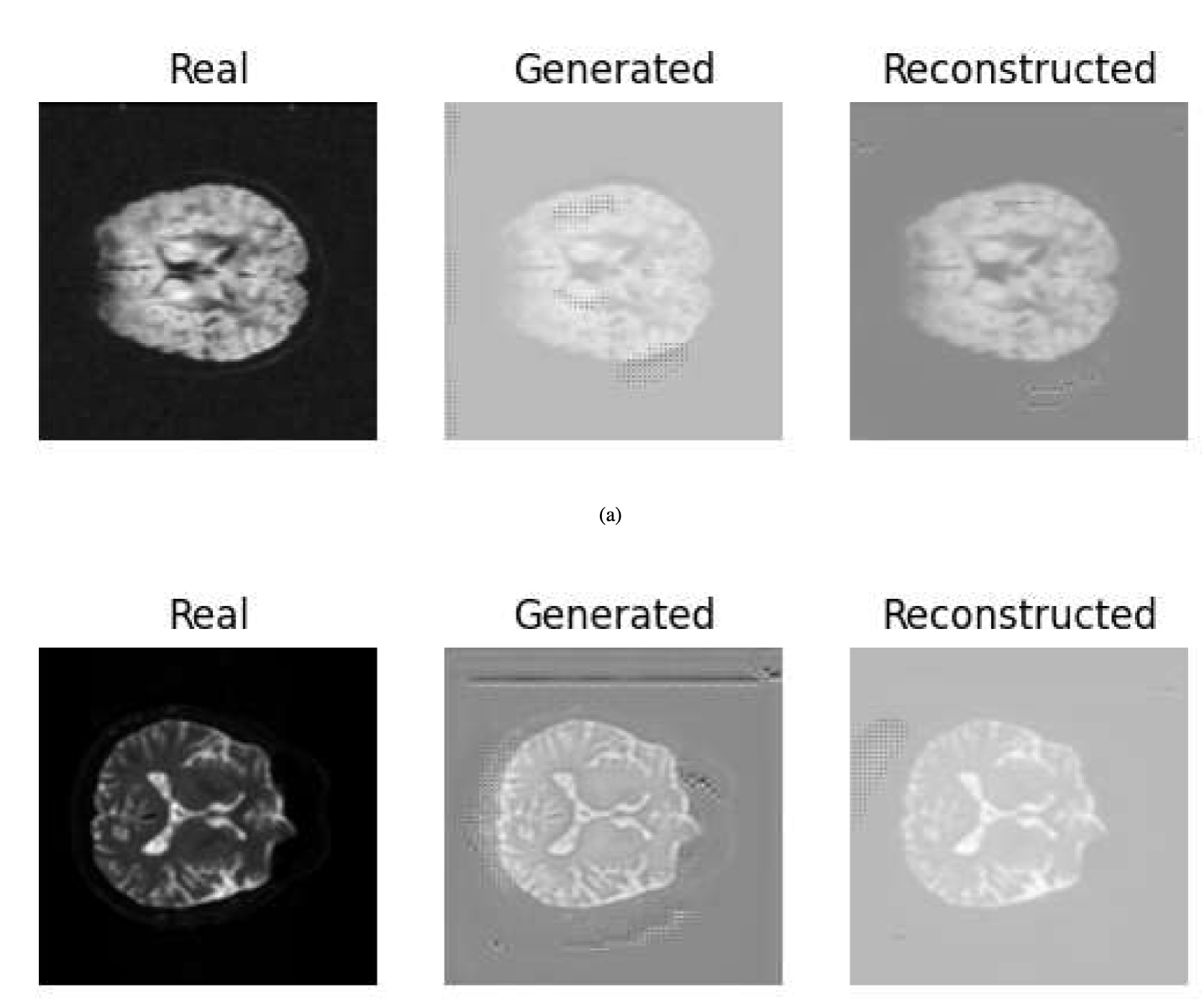

2. Medical Imaging Enhancement and Cross-Modality Synthesis

CycleGAN Generated and Reconstructed Images (Source)

CycleGAN has made significant inroads in the medical field:

CT to MRI Translation: Converting Computed Tomography (CT) images to Magnetic Resonance Imaging (MRI) images or vice versa, allowing doctors to leverage information from both modalities while only acquiring one type of scan.

Image Enhancement: Improving the quality of medical images with low resolution or artifacts.

Data Augmentation: Generating synthetic medical images for training other AI systems, helping to address the persistent problem of limited medical datasets.

The unpaired nature of CycleGAN is particularly valuable in medical imaging where paired datasets across different imaging modalities are extremely rare, expensive to produce, and often impossible to obtain for ethical reasons.

Final Thoughts on CycleGAN

CycleGAN represents a significant breakthrough in the field of image-to-image translation by eliminating the need for paired training data. This advancement has democratized access to sophisticated image transformation techniques and expanded the range of possible applications.

The architecture's success can be attributed to its elegant solution to the unpaired translation problem through cycle consistency loss, which ensures that transformations preserve the essential content while adopting the style of the target domain.

As the field continues to evolve, we're seeing new variants and improvements to the CycleGAN architecture. For instance, as mentioned by researchers at the Haiku Tech Center, approaches like Contrastive Unpaired Translation (CUT) build upon CycleGAN's foundation to deliver even better results with faster training times.

The impact of CycleGAN extends beyond technical achievement: it represents a fundamental shift in how we think about image transformation. Rather than requiring carefully curated paired datasets, CycleGAN demonstrates that meaningful transformations can be learned from collections of images that share certain characteristics, opening up new possibilities for creative expression, scientific research, and practical applications.

As computational resources become more accessible and the algorithms continue to improve, we can expect to see ever more sophisticated and diverse applications of CycleGAN and its descendants in fields ranging from entertainment and design to healthcare and scientific visualization.

Frequently Asked Questions (FAQs)

Q1: What makes CycleGAN different from traditional GANs?

A: Unlike traditional GANs that focus on generating images from random noise, CycleGAN specifically addresses the problem of unpaired image-to-image translation. Its unique architecture incorporates dual generators, dual discriminators, and most importantly, cycle consistency loss that allows it to learn mappings between domains without paired examples. This makes CycleGAN particularly versatile for real-world applications where paired data is scarce or unavailable.

Q2: Can CycleGAN work with any two image domains?

A: While CycleGAN is remarkably versatile, it does have limitations. It works best when there is a clear structural relationship between the two domains. For instance, translating between horses and zebras works well because both share similar body structures. However, transformations that require significant structural changes (like turning an apple into a car) are beyond its capabilities. The domains should share some underlying content or structure for effective translation.

Q3: How does CycleGAN handle the problem of mode collapse?

A: Mode collapse is a common issue in GANs where the generator produces a limited variety of outputs. CycleGAN mitigates this through its cycle consistency loss, which requires that translating an image to another domain and back yields something close to the original image. If a generator mapped many different inputs to the same output, the reverse generator could not reconstruct each of them, so the loss penalizes such mappings and helps maintain diversity in the generated images.
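A toy calculation illustrates why a collapsed generator is penalized. The functions below are hypothetical stand-ins, not trained networks: `collapsed_G` ignores its input, so the reverse generator `F` sees the same image every time and can only ever return one reconstruction.

```python
from typing import List

Image = List[float]


def mean_abs_error(a: Image, b: Image) -> float:
    """Per-pixel-averaged L1 distance between two images of equal size."""
    return sum(abs(p - q) for p, q in zip(a, b)) / len(a)


def collapsed_G(x: Image) -> Image:
    """A collapsed generator: every input maps to the same output."""
    return [0.5, 0.5, 0.5]


def F(y: Image) -> Image:
    """Best case for the reverse generator: one fixed guess between the inputs."""
    return [0.5, 0.0, 0.5]


x1 = [0.0, 0.0, 1.0]
x2 = [1.0, 0.0, 0.0]

err1 = mean_abs_error(F(collapsed_G(x1)), x1)
err2 = mean_abs_error(F(collapsed_G(x2)), x2)
total_error = err1 + err2

# Distance between the two distinct inputs.
gap = mean_abs_error(x1, x2)
```

Because both inputs receive the same reconstruction, the triangle inequality means `total_error` can never fall below `gap`, whatever `F` returns. The cycle loss therefore stays positive as long as the generator collapses distinct inputs, although this is a penalty rather than a guarantee against collapse.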

Q4: What are the computational requirements for training a CycleGAN?

A: Training a CycleGAN is computationally intensive. Standard implementations typically call for a high-end GPU with at least 11-12 GB of memory. Training times can range from several days to weeks depending on the dataset size, image resolution, and hardware specifications. However, once trained, inference (generating new translations) is relatively fast and can be performed on less powerful hardware.

Q5: How does CycleGAN compare to newer image translation models?

A: While CycleGAN was groundbreaking when introduced in 2017, newer models have built upon its foundation. Models like UNIT, MUNIT, FUNIT, and CUT offer improvements in various aspects such as multi-modal outputs (generating multiple possible translations for one input), faster training times, or better quality. However, CycleGAN remains relevant due to its relatively simple yet effective architecture and continues to be a strong baseline for unpaired image translation tasks.

Q6: Can CycleGAN be used for video transformation?

A: While CycleGAN was primarily designed for still images, researchers have extended its principles to video transformation by applying temporal consistency constraints. Such models ensure that translations are consistent across consecutive frames. However, video transformation requires significantly more computational resources and careful handling of temporal coherence to avoid flickering or inconsistent translations between frames.
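To make the flickering problem concrete, here is a deliberately naive sketch of a temporal penalty: the average change between consecutive translated frames. This is an illustration only and not the constraint used by any particular video model; practical approaches typically account for real motion between frames, which this toy version would wrongly penalize. The frames and both translators are hypothetical.

```python
from typing import Callable, List

Frame = List[float]  # toy frame: a flat list of pixel intensities


def flicker_penalty(frames: List[Frame]) -> float:
    """Average per-pixel change between consecutive frames."""
    if len(frames) < 2:
        return 0.0
    total = 0.0
    for prev, curr in zip(frames, frames[1:]):
        total += sum(abs(a - b) for a, b in zip(prev, curr)) / len(curr)
    return total / (len(frames) - 1)


def stable_translate(index: int, frame: Frame) -> Frame:
    """Applies the same mapping to every frame."""
    return [1.0 - p for p in frame]


def jittery_translate(index: int, frame: Frame) -> Frame:
    """Simulates per-frame inconsistency with an alternating brightness offset."""
    offset = 0.125 if index % 2 == 0 else -0.125
    return [1.0 - p + offset for p in frame]


def translate_video(video: List[Frame], translate: Callable[[int, Frame], Frame]) -> List[Frame]:
    return [translate(i, frame) for i, frame in enumerate(video)]


# A static scene: four identical input frames.
video = [[0.25, 0.5, 0.75] for _ in range(4)]

stable_penalty = flicker_penalty(translate_video(video, stable_translate))
jittery_penalty = flicker_penalty(translate_video(video, jittery_translate))
```

On a static scene the consistent translator incurs no penalty, while the frame-by-frame jitter shows up directly as a non-zero value.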

Q7: What ethical considerations should be kept in mind when using CycleGAN?

A: As with many AI image manipulation technologies, CycleGAN raises ethical concerns regarding potential misuse for creating misleading or deceptive content. Applications in deepfakes, misrepresentation of places or events, or unauthorized style transfer from artists' work all present ethical challenges. Users should consider consent, attribution, and potential harm when applying these technologies, particularly in contexts involving human subjects or copyrighted artistic styles.

Q8: How can I implement CycleGAN for my own projects?

A: Several open-source implementations of CycleGAN are available, with the official PyTorch implementation by the original authors being among the most popular. To get started, you'll need two sets of images representing your source and target domains, along with a development environment with deep learning frameworks and GPU support. Pre-trained models for common transformation tasks are also available, which can be fine-tuned for specific applications with fewer computational resources than training from scratch.
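As a starting point, the two image sets need to be organized on disk. The sketch below assumes the folder convention used by the official PyTorch repository for unaligned datasets (`trainA`, `trainB`, `testA`, `testB`); verify this against the repository's own documentation before relying on it. The helper `count_images` is a hypothetical utility written for this example, and the files are empty placeholders created in a temporary directory.

```python
import tempfile
from pathlib import Path

# Assumed layout: domain A (source) and domain B (target), split into train and test.
SPLITS = ("trainA", "trainB", "testA", "testB")
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def count_images(root: Path) -> dict:
    """Check that every expected folder exists and count the images in each."""
    counts = {}
    for split in SPLITS:
        folder = root / split
        if not folder.is_dir():
            raise FileNotFoundError(f"missing folder: {folder}")
        counts[split] = sum(
            1 for p in folder.iterdir() if p.suffix.lower() in IMAGE_SUFFIXES
        )
    return counts


with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp) / "horse2zebra"
    for split in SPLITS:
        (root / split).mkdir(parents=True)
    # Placeholder files standing in for real photos; the two sets need not match in size.
    for name in ("h1.jpg", "h2.jpg"):
        (root / "trainA" / name).touch()
    for name in ("z1.jpg", "z2.png", "z3.jpg"):
        (root / "trainB" / name).touch()
    counts = count_images(root)

print(counts)
```

A check like this is a cheap way to catch a misplaced folder or an empty domain before starting a long training run.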


Written by

Omkar Thorve


I build intelligent systems that solve real-world problems. With expertise in deep learning, computer vision, and NLP, I'm passionate about pushing the boundaries of AI research and innovation.