ChatGPT: Evolution, Technical Features and App Making

Sulabh Sharma

ChatGPT is an AI chatbot developed by OpenAI that uses natural language processing to generate human-like conversational responses. It can answer questions, write essays, generate code and more. The "GPT" in ChatGPT stands for "Generative Pre-trained Transformer", which describes its architecture and training method. ChatGPT uses deep learning, a subset of machine learning, to produce human-like text through transformer neural networks. The transformer predicts the text that comes next, whether the next word, sentence or paragraph, based on the typical sequences in its training data. Training begins with generic data and then moves to more tailored data for a specific task. ChatGPT was first trained on online text to learn human language, and then on transcripts to learn the basics of conversation.
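
To make the idea of "predicting the next word from typical sequences" concrete, here is a deliberately tiny sketch. It is not a transformer: it only counts which word most often follows another in a made-up sentence. The function names and the sample text are assumptions chosen for illustration.

```python
from collections import Counter, defaultdict

def train_bigram_counts(text):
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Made-up training text, purely for illustration.
corpus = "the cat sat on the mat the cat ate the fish"
counts = train_bigram_counts(corpus)
print(predict_next(counts, "the"))
```

A real GPT model replaces these simple counts with a neural network that looks at the whole preceding context, but the goal of predicting what comes next is the same.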

Evolution of ChatGPT

GPT-1: The journey began with the first Generative Pre-trained Transformer (GPT) model, introduced by OpenAI in 2018. GPT-1 was a breakthrough in Natural Language Processing (NLP), using 117 million parameters to generate human-like text based on the context provided. It marked a significant step forward in machine learning by demonstrating the potential of pre-trained transformers.

GPT-2: Launched in 2019, GPT-2 built upon the foundation laid by GPT-1, expanding the model to 1.5 billion parameters. This increase in scale enabled GPT-2 to generate more coherent and contextually relevant text, making it a more powerful tool for a variety of NLP tasks. The GPT revolution had begun.

GPT-3: Released in June 2020, GPT-3 was a major leap forward, with 175 billion parameters. This increase in model size brought a large jump in natural language understanding and generation capabilities.

GPT-3.5: Generative Pre-trained Transformer 3.5 (GPT-3.5) is a subclass of GPT-3 models created by OpenAI in 2022. On March 15th, 2022, OpenAI made new versions of GPT-3 and Codex available in its API, with edit and insert capabilities, under the names "text-davinci-002" and "code-davinci-002".

GPT-4: A multimodal large language model created by OpenAI and the fourth in its series of GPT foundation models. It was launched on March 14th, 2023.

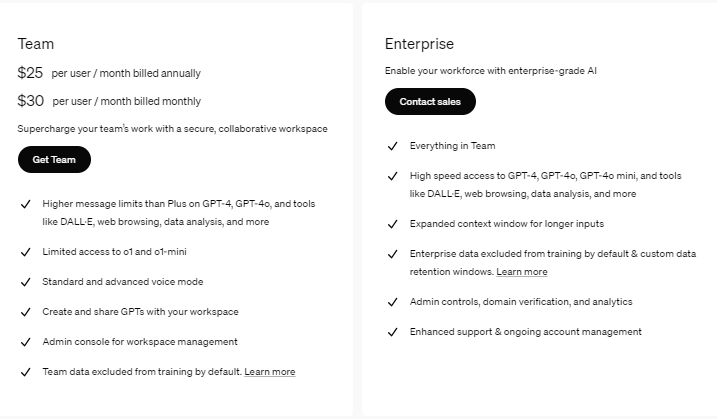

Basic Technical features of ChatGPT models

Model Architecture

Definition: The design of the AI model that determines how it processes and generates text.

Example: Think of it like the blueprint for building a robot. A "Transformer Decoder" is like a design that helps the robot predict and write sentences based on patterns it learned.

Parameters

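Definition: The internal "settings" the model learns during training and uses to make decisions and generate responses. More parameters generally give the model more capacity to capture patterns, although size alone does not guarantee better answers.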

Example: A model with 175 billion parameters (like GPT-3) is like a chef with 175 billion recipes: it can cook almost anything you ask for.
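
To get a feel for what these parameter counts mean in practice, the sketch below estimates how much memory is needed just to store the weights. It assumes 2 bytes per parameter (16-bit numbers), which is an assumption for illustration; the article does not say how any of these models are actually stored.

```python
def weight_memory_gb(num_parameters, bytes_per_parameter=2):
    """Approximate memory to hold the weights, in gigabytes (10^9 bytes).

    bytes_per_parameter=2 is an assumed 16-bit storage format.
    """
    return num_parameters * bytes_per_parameter / 1e9

# Parameter counts quoted in this article.
models = {
    "GPT-1": 117_000_000,
    "GPT-2": 1_500_000_000,
    "GPT-3": 175_000_000_000,
}

for name, count in models.items():
    print(f"{name}: about {weight_memory_gb(count):,.1f} GB of weights")
```

Under that assumption, GPT-3's weights alone come to roughly 350 GB, which is why models of this size are served from data centers rather than run on a laptop.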

Token Limit

Definition: The maximum amount of text the model can process in a single interaction. Tokens are chunks of text, such as whole words, parts of words or individual characters.

Example: If you ask the model to summarize a 5-page document but its token limit only covers 2 pages, it won’t be able to process the entire document in one go.
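
A minimal sketch of how an app might check a document against a token limit and split it when it is too long. The figure of 4 characters per token is only a rough rule of thumb for English text, not an exact tokenizer, and the helper names are hypothetical.

```python
import math
from typing import List

CHARS_PER_TOKEN = 4  # rough assumption for English text, not an exact tokenizer

def estimate_tokens(text: str) -> int:
    """Estimate the token count from the character count."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)

def fits_in_limit(text: str, token_limit: int) -> bool:
    """True if the estimated token count is within the limit."""
    return estimate_tokens(text) <= token_limit

def split_into_chunks(text: str, token_limit: int) -> List[str]:
    """Cut the text into pieces that each fit the estimated limit."""
    max_chars = token_limit * CHARS_PER_TOKEN
    return [text[i:i + max_chars] for i in range(0, len(text), max_chars)]

document = "word " * 1000  # stand-in for a long document
print(estimate_tokens(document), fits_in_limit(document, 1000))
print(len(split_into_chunks(document, 1000)), "chunks")
```

A production app would count tokens with the tokenizer that matches its model and would split on sentence or paragraph boundaries rather than at fixed character positions.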

Batch Processing

Definition: The ability to handle multiple tasks or inputs at the same time.

Example: Imagine a cashier processing 5 customers at once instead of one by one. A model with good batch processing can answer 5 different users simultaneously without delay.
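
The cashier analogy can be sketched on the app side as sending several prompts at once instead of one after another. The `answer` function below is a hypothetical stand-in that only sleeps briefly to imitate a model call; it shows client-side concurrency, not how a model server batches requests internally.

```python
import time
from concurrent.futures import ThreadPoolExecutor

def answer(prompt):
    """Hypothetical stand-in for a model call; sleeps to imitate work."""
    time.sleep(0.1)
    return f"Answer to: {prompt}"

def answer_batch(prompts):
    """Handle all prompts concurrently and return answers in input order."""
    if not prompts:
        return []
    with ThreadPoolExecutor(max_workers=len(prompts)) as pool:
        return list(pool.map(answer, prompts))

questions = [f"Question {i}" for i in range(1, 6)]
for reply in answer_batch(questions):
    print(reply)
```

Because the five calls overlap, the whole batch takes about as long as a single call instead of five times as long.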

Training Type

Definition: How the model is trained to understand and generate text.

Pre-trained: Trained on a large dataset before being released.

Reinforcement Learning from Human Feedback (RLHF): Further refined by humans to improve responses.

Example: Pre-trained is like a chef who learned cooking by reading books. RLHF is like a chef who improved their cooking by learning directly from customer feedback.

Few-Shot Learning

Definition: The model's ability to perform tasks after seeing only a few examples.

Example: If you show the model two examples of a haiku, it can generate its own haikus without needing hundreds of examples.
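
In practice, the "few examples" are usually placed directly in the prompt. Here is a small sketch of how such a prompt could be assembled; the layout, the function name and the sample haikus are all illustrative assumptions, not a required format.

```python
def build_few_shot_prompt(examples, new_topic):
    """Build a prompt that shows worked examples before the new request."""
    lines = ["Write a haiku about the given topic.", ""]
    for topic, haiku in examples:
        lines.append(f"Topic: {topic}")
        lines.append(f"Haiku: {haiku}")
        lines.append("")
    # The final entry is left open for the model to complete.
    lines.append(f"Topic: {new_topic}")
    lines.append("Haiku:")
    return "\n".join(lines)

# Two illustrative examples, written for this sketch.
examples = [
    ("autumn", "Red leaves drifting down / the path forgets where it led / wind keeps the secret"),
    ("rain", "Grey clouds lean closer / rooftops hum a quiet song / puddles catch the sky"),
]

print(build_few_shot_prompt(examples, "winter"))
```

The model sees the pattern "Topic, then Haiku" twice and is then asked to continue it for a new topic.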

Latency

Definition: The time it takes for the model to generate a response after receiving input.

Example: A low-latency model is like a fast typist who replies to your text immediately, while a high-latency model is like a slow responder who takes minutes.
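
Latency is easy to measure from the app side by timing a call from start to finish. In this sketch `slow_model` is a hypothetical placeholder that sleeps for a moment; in a real app it would be replaced by the actual request to the model.

```python
import time

def measure_latency(func, *args):
    """Run func(*args) and return (result, elapsed_seconds)."""
    start = time.perf_counter()
    result = func(*args)
    elapsed = time.perf_counter() - start
    return result, elapsed

def slow_model(prompt):
    """Hypothetical stand-in for a model call."""
    time.sleep(0.05)
    return prompt.upper()

reply, seconds = measure_latency(slow_model, "hello")
print(f"Reply {reply!r} took {seconds * 1000:.0f} ms")
```

Timing many requests and looking at the slowest ones, not just the average, gives a better picture of what users will experience.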

Multimodal Input

Definition: The ability to process both text and images as input.

Example: You can upload a photo of a math problem, and the model (like GPT-4) can solve it by analyzing the image and generating a text response.

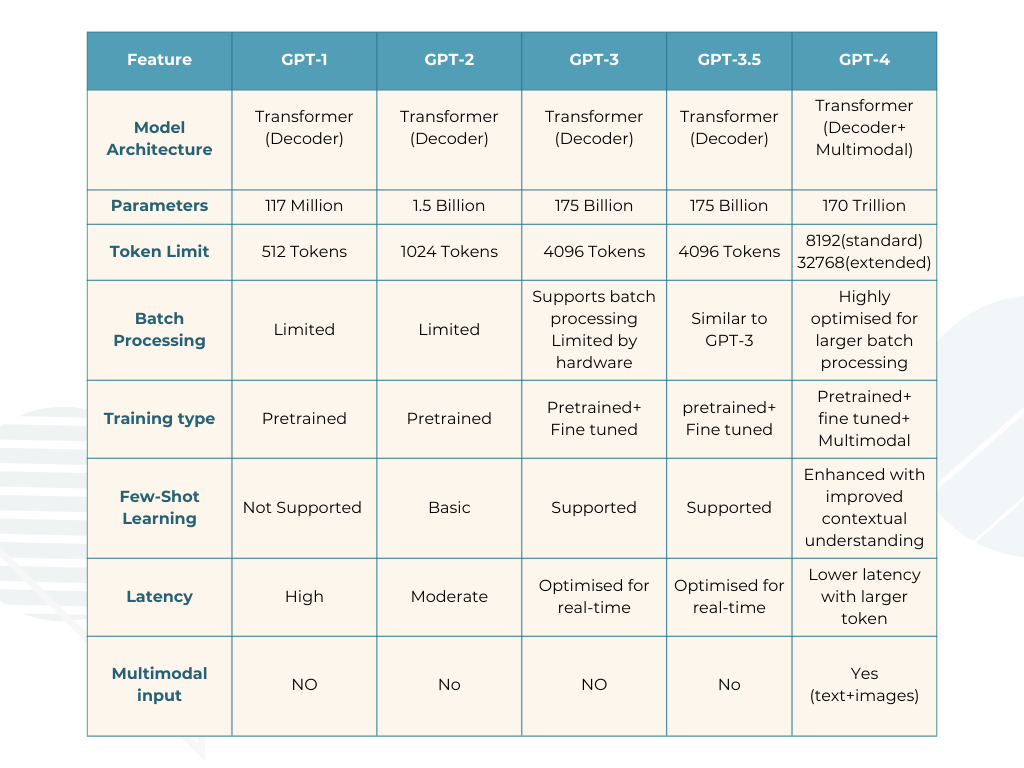

Context Retention

Definition: How well the model remembers previous parts of a conversation or input.

Example: If you’re chatting about your favorite movies, a model with good context retention will remember your preferences and recommend relevant movies later in the chat.
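
One common way for an app to work within limited context is to send only the most recent messages that fit a token budget. The sketch below is an assumption about how this could be done, not a description of ChatGPT's internals, and it counts words as a crude stand-in for tokens.

```python
def trim_history(messages, max_tokens, count_tokens):
    """Keep the newest messages whose combined size fits within max_tokens."""
    kept = []
    total = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = count_tokens(message)
        if total + cost > max_tokens:
            break
        kept.append(message)
        total += cost
    kept.reverse()  # restore chronological order
    return kept

def count_words(message):
    """Crude token estimate: one token per word (assumption)."""
    return len(message.split())

chat = [
    "I love science fiction movies",
    "Especially ones about space travel",
    "What should I watch tonight",
]

print(trim_history(chat, max_tokens=10, count_tokens=count_words))
```

With a budget of 10, the first message is dropped, so the model would no longer see that the user loves science fiction. A larger budget keeps more of the conversation available.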

Comparison of Key technical features of ChatGPT models

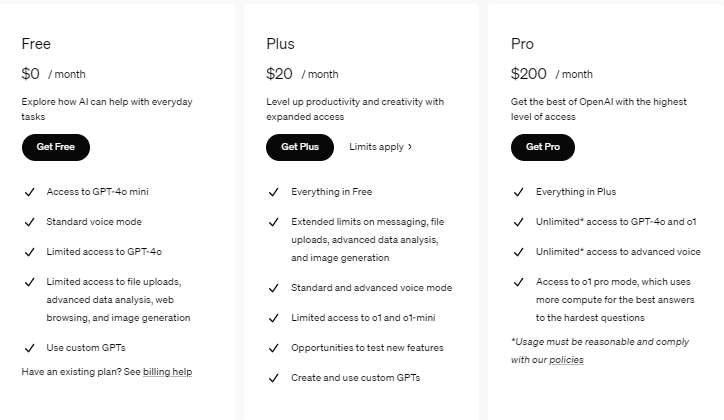

Pricing Mechanism of ChatGPT, https://openai.com/api/pricing/

Which Model Should You Choose Based on Your Budget?

1. If You're on a Tight Budget (MVP or Small-Scale App):

- Choose GPT-3: It offers solid performance for basic use cases like simple content generation, question-answering and basic chatbots. It’s cost-effective and can handle small-scale applications with ease.

2. If You Need Better Performance and More Complex Conversations:

- Choose GPT-3.5: It provides improved performance over GPT-3, especially for applications that involve ongoing conversations, complex customer support queries and larger datasets. It’s a sweet spot between price and performance.

3. If You Need Advanced Features, Multimodal Capabilities, or Large-Scale Enterprise Applications:

- Choose GPT-4: Although GPT-4 is the most expensive, it offers advanced capabilities such as multimodal processing (text and images), better reasoning and longer context retention. If your app needs complex problem-solving or works with a lot of data (such as long documents, images or multilingual content), GPT-4 is worth the higher investment.
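
The three recommendations above can be written down as a simple decision rule. This is only a sketch of the guidance in this article; the function and parameter names are hypothetical, and a real decision would also weigh current prices and model availability.

```python
def choose_model(needs_multimodal=False,
                 needs_advanced_reasoning=False,
                 needs_complex_conversations=False):
    """Map an app's needs to a model family, following the guidance above."""
    if needs_multimodal or needs_advanced_reasoning:
        return "GPT-4"
    if needs_complex_conversations:
        return "GPT-3.5"
    return "GPT-3"  # tight budget, simple tasks

print(choose_model())
print(choose_model(needs_complex_conversations=True))
print(choose_model(needs_multimodal=True))
```

The checks run from the most demanding requirement to the least, so an app that needs both images and long conversations ends up on GPT-4.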

Final Thoughts:

When choosing a ChatGPT model for your app, the token limits, batch processing capabilities and the level of complexity your app requires will dictate the costs and performance you can expect.

GPT-3 is the best choice for basic apps with minimal budget and simple tasks.

GPT-3.5 offers a good balance between cost and advanced conversational abilities.

GPT-4 is for high-demand applications that require advanced capabilities, but it comes with a higher price tag.

Always consider your app’s specific needs, user interaction volume and expected workload when evaluating the most cost-effective model for your app.
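
To turn interaction volume and workload into a number, you can estimate a monthly bill from the expected token usage. The sketch assumes pricing is charged per 1,000 tokens, and the price used below is a made-up placeholder, not a real rate; check the pricing page linked earlier for actual figures.

```python
def monthly_cost(requests_per_day, tokens_per_request,
                 price_per_1k_tokens, days=30):
    """Estimate a monthly bill assuming a flat price per 1,000 tokens."""
    total_tokens = requests_per_day * tokens_per_request * days
    return total_tokens / 1000 * price_per_1k_tokens

# Placeholder numbers for illustration only.
estimate = monthly_cost(
    requests_per_day=1000,
    tokens_per_request=500,
    price_per_1k_tokens=0.002,  # hypothetical price, not a real rate
)
print(f"Estimated monthly cost: ${estimate:.2f}")
```

Running the same estimate with each model's actual price shows quickly whether a more capable model fits the budget at your expected volume.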

Conclusion

The journey began with GPT-1 in 2018, which introduced 117 million parameters and pioneered pre-trained transformers for text generation. GPT-2, launched in 2019, scaled up to 1.5 billion parameters, improving coherence and contextual relevance. GPT-3, with 175 billion parameters, set a new benchmark in 2020, enabling complex tasks like coding and detailed content generation. GPT-3.5 (2022) enhanced conversational abilities and task-specific fine-tuning, while GPT-4 (2023) added multimodal capabilities, processing both text and images, and extended context retention for advanced applications.

ChatGPT models cater to diverse needs:

GPT-3: Ideal for basic tasks and budget-friendly solutions.

GPT-3.5: Balances cost and performance for conversational and customer support apps.

GPT-4: Best for enterprise-level, multimodal tasks and applications requiring advanced reasoning.

Selecting a model depends on your budget, complexity and performance needs, making ChatGPT a versatile tool for various domains, from chatbots to enterprise-level AI applications.
