Exploring the Effect of Reinforcement Learning on Video Understanding: Insights from SEED-Bench-R1

Mike Young

Mike Young

This is a Plain English Papers summary of a research paper called Exploring the Effect of Reinforcement Learning on Video Understanding: Insights from SEED-Bench-R1. If you like these kinds of analysis, you should subscribe to the AImodels.fyi newsletter or follow me on Twitter.

Overview

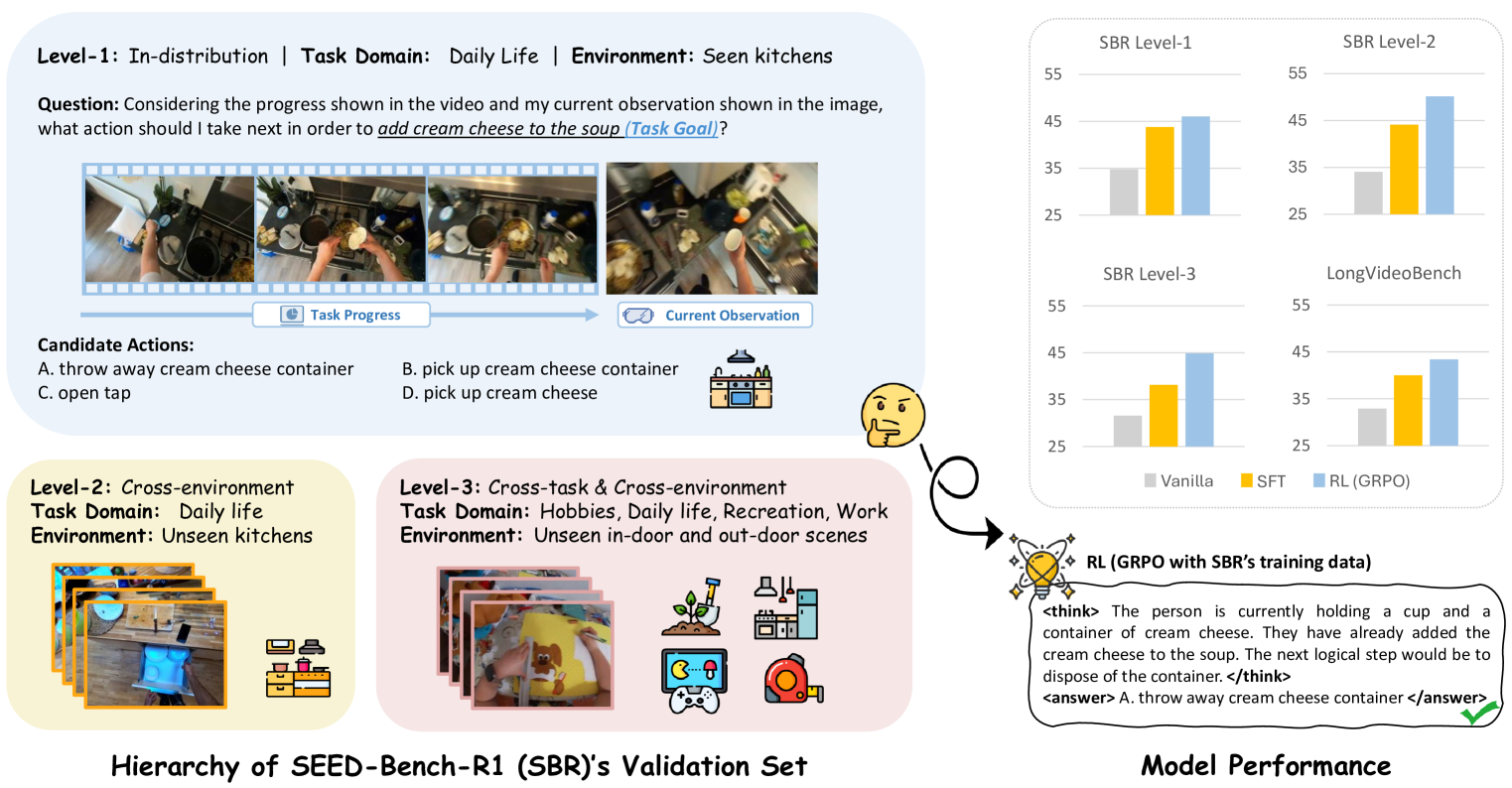

- SEED-Bench-R1 evaluates how reinforcement learning affects video understanding in MLLMs

- The study shows most RLHF-trained models underperform in video tasks compared to SFT-only versions

- Analysis reveals RL training creates misalignment between user intent and model outputs for videos

- Claude 3 Opus shows unique improvement after RL, suggesting different training approaches matter

- Video reasoning requires specific attention to temporal aspects often overlooked in RL optimization

Plain English Explanation

When we train AI systems to understand videos, we typically use a technique called reinforcement learning from human feedback (RLHF) to make them better. But this research reveals something surprising: this technique often makes video understanding worse, not better.

The researchers created a new benchmark called SEED-Bench-R1 that tests how well AI models understand videos. They tested many popular AI systems like GPT-4, Claude, and Gemini. What they discovered was unexpected - most models actually got worse at understanding videos after going through reinforcement learning training.

This happens because reinforcement learning tends to focus on making responses sound good to humans rather than ensuring the AI truly understands the video content. It's like training someone to give confident-sounding answers even when they're unsure, instead of teaching them to watch more carefully.

One exception was Claude 3 Opus, which improved after reinforcement learning. This suggests that how the reinforcement learning is implemented matters a lot, and some approaches might preserve or enhance video understanding abilities.

Key Findings

- Most large multimodal language models (MLLMs) show decreased performance on video understanding tasks after RLHF training

- GPT-4o exhibited a 9% drop in video task performance compared to its SFT-only counterpart, with similar patterns in other models

- The temporal aspects of video understanding are particularly affected by RLHF, with most models showing degradation in reasoning about event sequences

- Claude 3 Opus uniquely improved after RL training (+5.9%), suggesting its training approach better preserves video understanding capabilities

- Current RLHF implementations create a misalignment between user intent (accurate video analysis) and model outputs (confident but potentially incorrect answers)

Technical Explanation

The researchers developed SEED-Bench-R1, a comprehensive benchmark with 1,152 multiple-choice questions across various video understanding tasks. This benchmark was designed specifically to evaluate how reinforcement learning affects video understanding capabilities in multimodal language models.

They evaluated models in three categories: SFT-only models (trained with supervised fine-tuning), RLHF models (trained with reinforcement learning from human feedback), and post-RLHF models like Claude 3. The benchmark covers both spatial and temporal understanding of videos, testing abilities like relationship recognition, action recognition, and procedure understanding.

The experimental results show a clear pattern: almost all models suffer performance degradation on video tasks after reinforcement learning. For example, GPT-4o drops from 74.4% to 65.4% accuracy after RLHF. Similarly, Gemini Pro's performance decreases from 66.3% to 63.0%. The researchers found that temporal understanding tasks (those requiring reasoning about sequences of events) suffered more than spatial understanding tasks.

Claude 3 Opus was the notable exception, showing a 5.9% improvement after RL training. This suggests that Anthropic's constitutional AI approach or specific implementation details in their training methodology better maintains video understanding capabilities.

Through detailed error analysis, the researchers uncovered common failure patterns in RLHF models: they often focus on superficial video elements rather than crucial temporal information, exhibit overconfidence in incorrect answers, and produce lengthy but inaccurate explanations that sound convincing to humans.

Critical Analysis

The study reveals a significant limitation in current RLHF approaches but doesn't fully explore alternative training methodologies that might preserve video understanding. While identifying the problem is valuable, the paper could benefit from proposing specific solutions or modifications to RLHF techniques.

The benchmark itself has some limitations. With 1,152 questions, it's substantial but still may not capture the full range of video understanding capabilities. Additionally, the multiple-choice format, while enabling quantitative evaluation, may not fully represent real-world video understanding scenarios where open-ended responses are often required.

The exceptional performance of Claude 3 Opus deserves deeper investigation. The paper notes this difference but doesn't thoroughly explore what specific aspects of Anthropic's training approach might be responsible for preserving video understanding capabilities. Understanding these differences could provide valuable insights for improving RLHF training across all models.

There's also a question about the generalizability of these findings. The study focuses on multiple-choice questions, but would the same degradation pattern appear in open-ended video description tasks or more interactive video-based dialogue scenarios? This remains unexplored.

Finally, the paper doesn't deeply investigate the trade-offs involved. While RLHF may hurt video understanding, it likely improves other aspects of model performance that users value. Understanding this balance would help developers make informed decisions about model training approaches.

Conclusion

This research reveals a critical challenge in developing AI systems that truly understand video content. The common practice of using reinforcement learning to improve AI models might actually be counterproductive for video understanding, creating systems that sound confident but miss critical temporal information.

The findings suggest that developers of multimodal AI systems need to reconsider how they implement reinforcement learning when video understanding is important. Special attention must be paid to preserving temporal reasoning capabilities throughout the training process.

The exceptional performance of Claude 3 Opus offers hope that it's possible to improve both general capabilities and video understanding simultaneously with the right approach. Future research should investigate what specific aspects of Anthropic's training methodology enable this preservation of video understanding abilities.

As video content continues to dominate online media, ensuring AI systems can truly understand videos rather than just generate plausible-sounding responses about them becomes increasingly important. This study provides valuable guidance for addressing this challenge and developing more genuinely capable video understanding systems.

If you enjoyed this summary, consider subscribing to the AImodels.fyi newsletter or following me on Twitter for more AI and machine learning content.

Subscribe to my newsletter

Read articles from Mike Young directly inside your inbox. Subscribe to the newsletter, and don't miss out.

Written by