Event Time vs. Other Timestamps

Community Contribution

Table of contents

- Key Takeaways

- Timestamp Types

- Event Time vs. Other Timestamps

- Event Timestamp in Time Series Data

- Event-Time Processing

- Choosing the Right Timestamp

- FAQ

- What is the main advantage of using event time in analytics?

- How does processing time differ from event time?

- Why do organizations standardize timestamps to UTC?

- Can event time handle late-arriving data?

- Which timestamp type suits real-time alerting best?

- What challenges arise with out-of-order events?

- How should teams choose the right timestamp type?

The main difference between event time and other timestamps lies in which moment they record. Event time records when an event actually occurred, while other timestamps, such as processing or ingestion time, capture when a system receives or handles the event data. In financial trading, even small timestamp misalignments can lead to costly errors. For example, a trade that is sequenced a millisecond out of place because of an incorrect event time can cause significant financial loss. Accurate timestamp selection supports reliable analytics and timely alerts.

Consistent timestamp formats, like TIMESTAMPTZ in time-series data, support precise event tracking across industries.

| Timestamp Type | Description | Typical Use Case |
| --- | --- | --- |
| TIMESTAMP (without time zone) | Records datetime without time zone | Local event times |
| TIMESTAMP WITH TIME ZONE | Stores datetime with time zone info | Scheduling, global transactions |
| TIMESTAMP WITH LOCAL TIME ZONE | Normalizes to database time zone | Relative event tracking |

Key Takeaways

Event time records when an event actually happens, ensuring accurate event order and reliable analytics.

Processing time marks when a system processes an event, offering low latency but less accuracy for late or out-of-order data.

Ingestion time captures when data enters the system, balancing speed and accuracy but may cause timing mismatches.

Storage time shows when data is saved, useful for compliance but not for precise event timing or real-time analysis.

Event time supports handling out-of-order and late events using watermarks, improving data completeness and correctness.

Choose event time for applications needing accuracy and compliance, like financial trading and healthcare monitoring.

Select processing time for scenarios requiring fast responses, such as system monitoring and real-time alerts.

Standardizing timestamps to UTC and using timezone-aware formats prevent errors and ensure consistent data across systems.

Timestamp Types

Event Time

Event time refers to the moment when an event actually occurs in the real world. This timestamp is embedded within the event itself, often by the data producer, such as a sensor or application. For example, a GPS sensor records the exact time a location change happens, ensuring the data stream reflects the true sequence of events. Systems like Apache Flink and Kafka Streams rely on event time to maintain the original timeline, which is essential for accurate analytics and historical data processing. Event time enables precise time-bound operations, allowing the stream to handle out-of-order events based on when they truly happened.

Sub-second precision and synchronized clocks play a crucial role in event time accuracy. Even a one-millisecond difference between two events can misrepresent their order, leading to errors in distributed systems. Most systems store event time in UTC to avoid time-zone offset issues, ensuring consistency across global data streams.

Processing Time

Processing time marks when a stream processing application handles an event. This timestamp depends on the system clock of the processing node, not the actual occurrence of the event. For instance, an analytics application may process GPS data minutes or hours after the event time, especially if there are delays in the data stream. Processing time is the simplest time concept in stream processing, but it can distort the timeline when reprocessing old data or handling late-arriving events. Stock trading algorithms and online games often use processing time to react quickly to incoming data streams, prioritizing responsiveness over historical accuracy.

Processing time offers immediate feedback for real-time analytics.

It may cause discrepancies if the data stream experiences delays or out-of-order events.

Systems using processing time must account for clock drift and offset, which can affect the reliability of event ordering.

Ingestion Time

Ingestion time records when an event enters the processing system, such as when a Kafka broker appends the event to a topic partition. The system generates this timestamp at the moment of ingestion, so it reflects when the data stream receives the event rather than when the event occurred. Communication-Based Train Control (CBTC) systems and IoT sensors often rely on ingestion time to ensure rapid data flow and operational safety. Ingestion time approximates event time when the delay between occurrence and ingestion is minimal, but it introduces discrepancies when network latency or buffering delays the data stream.

| Timestamp Type | Definition | Example System |
| --- | --- | --- |
| Event Time | Time when the event actually occurred, set by the producer | Financial trading platforms |
| Processing Time | Time when the stream processing application handles the event | Online gaming servers |
| Ingestion Time | Time when the event enters the processing system or broker | IoT sensor networks |

Ingestion time provides a practical solution when event time is unreliable or unavailable. However, for applications requiring strict temporal integrity, such as fraud detection or patient monitoring, event time remains the preferred choice.
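The relationship between these timestamps is easier to see when one record carries all of them. The following is a minimal sketch, assuming a hypothetical `StampedEvent` record and made-up sample values; it is not the data model of any particular platform.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class StampedEvent:
    """Hypothetical record carrying the three timestamps discussed above."""

    payload: str
    event_time: datetime       # set by the producer when the event occurred
    ingestion_time: datetime   # set by the broker when the event was appended
    processing_time: datetime  # set by the processor when it handled the event

    def ingestion_lag(self) -> timedelta:
        """Delay between occurrence and arrival at the broker."""
        return self.ingestion_time - self.event_time

    def processing_lag(self) -> timedelta:
        """Delay between occurrence and handling by the processor."""
        return self.processing_time - self.event_time


# Illustrative values only: a GPS reading ingested 2 s late, processed 5 min late.
occurred = datetime(2024, 3, 1, 12, 0, 0, tzinfo=timezone.utc)
reading = StampedEvent(
    payload="gps:47.61,-122.33",
    event_time=occurred,
    ingestion_time=occurred + timedelta(seconds=2),
    processing_time=occurred + timedelta(minutes=5),
)

print(reading.ingestion_lag())   # 0:00:02
print(reading.processing_lag())  # 0:05:00
```

When the ingestion lag stays small, ingestion time is a reasonable stand-in for event time; the larger or more variable it becomes, the more the two timelines diverge.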

Storage Time

Storage time refers to the moment when a system writes an event or record to persistent storage, such as a database, file system, or cloud storage service. This timestamp captures when the data becomes durable and available for future retrieval. Many organizations rely on storage time to track data retention, compliance, and backup operations.

In modern data architectures, storage time often differs from event time, processing time, and ingestion time. For example, a log management system may receive thousands of log entries per second. The system might buffer these logs in memory before writing them to disk. As a result, the storage time may lag behind the actual occurrence of the event or even the time the system ingested the data.

Note: Storage time can help organizations meet regulatory requirements for data retention and auditing. However, it does not reflect when the event actually happened.

Real-World Examples

Cloud Storage Services: Platforms like Amazon S3 or Google Cloud Storage assign a storage time when a file or object is successfully written. This timestamp helps users track when data became available for access or backup.

Database Systems: Relational databases such as PostgreSQL or MySQL may record a storage time for each row inserted or updated. This information supports auditing and rollback operations.

Backup and Archival Solutions: Backup software often tags files with a storage time to indicate when the backup occurred. This practice ensures organizations can restore data to a specific point in time.

Key Characteristics

Storage time provides a reliable record of data durability.

It may not match the actual event time, especially in systems with buffering or batch processing.

Storage time is critical for compliance, legal discovery, and disaster recovery planning.

Limitations

Storage time does not guarantee the order of events. If a system writes records out of sequence, the storage time may misrepresent the true sequence of events.

Analysts should avoid using storage time for time-sensitive analytics, such as fraud detection or real-time monitoring. These use cases require more accurate timestamps, like event time or ingestion time.

Organizations should choose the appropriate timestamp based on their specific needs. Storage time works well for tracking data availability and retention. However, it cannot replace event time for applications that demand precise temporal accuracy.

Event Time vs. Other Timestamps

Determinism

Determinism in stream processing refers to the ability of a system to produce the same results every time it processes the same set of events. Event time provides a deterministic approach because it uses the actual occurrence time embedded in each event. The system can therefore place each event at its correct position in the timeline, even when events arrive late or out of sequence. Watermark mechanisms support this by signaling when the system can assume that no earlier events are still outstanding, so a window can be finalized. In contrast, processing time depends on the system clock and the moment the stream processor handles each event. This reliance on system timing introduces non-determinism, as factors like network delays and system load can change the order and timing of event processing. As a result, processing time can lead to inconsistent outcomes, especially when the same data is replayed or reprocessed.

| Aspect | Event Time | Processing Time |
| --- | --- | --- |
| Basis of Time | Timestamp embedded in the event, reflecting actual occurrence time | System clock at the moment of processing |
| Determinism | Deterministic: events processed in order of actual occurrence | Non-deterministic: order and timing can vary due to system load or delays |
| Handling Out-of-Order Events | Designed to handle out-of-order events deterministically using watermarks | Does not handle out-of-order events deterministically |
| Time Progression | Depends on event timestamps and watermark mechanisms | Depends on wall clock and processing timing |
| Impact of Load/Delays | Maintains correct sequence despite delays, may add latency waiting for late events | Processing time can be delayed, causing inconsistencies under heavy load |

This comparison shows that event time ensures deterministic processing, while processing time introduces variability and unpredictability in stream analytics.
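A small replay experiment makes the difference concrete. The sketch below assumes events are simple `(event_ts, arrival_ts)` pairs in seconds and counts them per one-minute window; the function names and sample values are illustrative, not taken from any framework.

```python
from collections import Counter

WINDOW_SECONDS = 60


def window_start(ts: int) -> int:
    """Start of the one-minute window that contains ts."""
    return (ts // WINDOW_SECONDS) * WINDOW_SECONDS


def count_by_event_time(events):
    """Count events per window using the timestamp embedded in the event."""
    return dict(Counter(window_start(event_ts) for event_ts, _ in events))


def count_by_arrival_time(events):
    """Count events per window using the moment the processor saw the event."""
    return dict(Counter(window_start(arrival_ts) for _, arrival_ts in events))


# The same three events processed twice. In the second run the middle event
# is delayed and arrives after the minute boundary.
run_a = [(10, 12), (50, 55), (70, 75)]
run_b = [(10, 12), (50, 65), (70, 75)]

print(count_by_event_time(run_a))    # {0: 2, 60: 1}
print(count_by_event_time(run_b))    # {0: 2, 60: 1}
print(count_by_arrival_time(run_a))  # {0: 2, 60: 1}
print(count_by_arrival_time(run_b))  # {0: 1, 60: 2}
```

Both runs agree when windows are keyed by event time, while the arrival-based counts shift as soon as one event is delayed.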

Accuracy

Accuracy in stream analytics depends on how well the system reflects the true sequence and timing of events. Event time achieves high accuracy by using the actual timestamps from the data source. This approach allows the system to handle out-of-order events and late arrivals effectively. For example, in financial trading, event time ensures that trades are analyzed in the exact order they occurred, even if network delays cause some trades to arrive late. Processing time, on the other hand, assigns timestamps based on when the system receives or processes each event. This method can misplace late events, leading to inaccurate results and distorted analytics.

| Aspect | Event Time (Source-Aligned) | Processing Time |
| --- | --- | --- |
| Definition | Uses the actual timestamp when the event occurred, embedded in the data. | Uses the time when the system processes the event. |
| Accuracy | High accuracy by reflecting real-world event order; handles out-of-order and late-arriving data effectively. | Lower accuracy due to assigning events based on processing time, which can misplace late events. |
| Handling Late Data | Supports watermarking and re-windowing to correct for late or straggler events, preserving data completeness. | Does not handle late data well; late events may be ignored or misassigned, distorting results. |
| Latency | Potentially higher latency as system waits for late events to ensure completeness. | Very low latency, enabling rapid decisions and alerts. |
| Complexity | More complex implementation requiring robust timestamp management and infrastructure for event ordering. | Simpler to implement and maintain, with less overhead in event management. |
| Use Cases | Critical for compliance, auditing, historical accuracy, financial transactions, healthcare, and immutable data. | Suitable for real-time monitoring, alerting, user behavior analytics, and fast-response scenarios. |
| Business Impact | Improves analytic reliability by aligning with actual event occurrence times. | Prioritizes immediacy over absolute precision, useful for operational agility and rapid insights. |

Event time improves analytics by aligning processing with the true order of events. This alignment is essential for applications that require reliable and complete data, such as compliance reporting and emergency management dashboards. Processing time may suffice for less critical scenarios, but it often sacrifices accuracy for speed.

Latency

Latency measures the delay between when an event occurs and when the system processes or reacts to it. Event time processing can introduce higher latency because the system may wait for late or out-of-order events to arrive before finalizing results. This waiting period ensures data completeness and accuracy, which is vital for applications like fraud detection or financial auditing. However, this approach may not suit scenarios where immediate response is more important than perfect accuracy.

Processing time offers very low latency. The system processes each event as soon as it arrives, enabling rapid decisions and real-time alerting. This speed benefits use cases such as system monitoring, where quick detection and alerting of anomalies take priority over strict event ordering. However, the trade-off is that late or out-of-order events may be ignored or misassigned, potentially leading to incomplete or misleading analytics.

Tip: Choose event time for applications that demand accuracy and completeness, even if it means accepting higher latency. Select processing time for scenarios where low latency and immediate alerting are more important than perfect event order.

Out-of-Order Events

Out-of-order events challenge many real-time data systems. These events arrive at the processing system in a different sequence than their actual occurrence. Network delays, distributed architectures, and buffering often cause this disorder. For example, a sensor network may transmit temperature readings from multiple devices. Some readings reach the server immediately, while others experience delays due to connectivity issues.

Event time provides a robust solution for handling out-of-order events. Systems that rely on event time use the timestamp embedded in each event to reconstruct the original sequence. This approach ensures that analytics and reporting reflect the true order of events, not the order in which the system received them. Watermarks play a critical role in this process. They signal when the system can treat a time range as complete, so results are finalized only after late-arriving events have had a chance to be included.

Processing time and ingestion time struggle with out-of-order events. These methods process data as it arrives, which can lead to inaccurate results. For instance, a security monitoring system may miss a critical alert if it processes events based on arrival time rather than event time. In contrast, event time enables the system to wait for late data and maintain temporal integrity.

Note: Out-of-order events can distort analytics and decision-making. Event time helps organizations maintain accurate records and reliable insights, even in complex distributed environments.

| Handling Method | Out-of-Order Events Support | Typical Scenario |
| --- | --- | --- |
| Event Time | Excellent | Sensor networks, financial trades |
| Processing Time | Poor | Real-time dashboards |
| Ingestion Time | Moderate | IoT data pipelines |
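One common way to restore event-time order is a small reorder buffer. The sketch below assumes events are `(event_time_ms, payload)` tuples and that disorder is bounded by a hypothetical `max_delay_ms`; events delayed by more than that bound would still be emitted out of order, which is the gap that watermarks and allowed lateness are meant to manage.

```python
import heapq
from typing import Iterable, Iterator, List, Optional, Tuple

Event = Tuple[int, str]  # (event_time_ms, payload)


def reorder(events: Iterable[Event], max_delay_ms: int) -> Iterator[Event]:
    """Emit events in event-time order, tolerating up to max_delay_ms of disorder."""
    buffer: List[Event] = []        # min-heap ordered by event time
    max_seen: Optional[int] = None  # highest event time observed so far
    for event in events:
        heapq.heappush(buffer, event)
        max_seen = event[0] if max_seen is None else max(max_seen, event[0])
        # Events at or before (max_seen - max_delay_ms) are assumed complete.
        while buffer and buffer[0][0] <= max_seen - max_delay_ms:
            yield heapq.heappop(buffer)
    # End of input: flush whatever is still buffered, in event-time order.
    while buffer:
        yield heapq.heappop(buffer)


# Illustrative sensor readings listed in arrival order.
arrivals = [(1000, "a"), (3000, "c"), (2000, "b"), (6000, "d"), (5000, "e")]
print(list(reorder(arrivals, max_delay_ms=2000)))
# [(1000, 'a'), (2000, 'b'), (3000, 'c'), (5000, 'e'), (6000, 'd')]
```

A larger `max_delay_ms` tolerates more disorder at the cost of holding events longer before emitting them.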

Use Cases

Organizations choose event time for applications that demand precise sequencing and historical accuracy. Financial trading platforms rely on event time to ensure that every transaction reflects its true execution moment. This accuracy prevents costly errors and supports regulatory compliance. Healthcare systems use event time to track patient vitals and medication administration, maintaining a reliable timeline for critical decisions.

Security analytics platforms benefit from event time by reconstructing attack timelines. Analysts can identify the exact sequence of events during a breach, even if some alerts arrive late. Manufacturing operations use event time to monitor equipment performance and detect anomalies. Accurate event sequencing helps engineers pinpoint the root cause of failures.

System monitoring tools often prioritize processing time for rapid alerting. These tools detect anomalies and trigger responses as soon as events arrive. While this approach sacrifices some accuracy, it delivers immediate feedback for operational agility.

Tip: Select event time for use cases that require historical accuracy, compliance, and detailed analysis. Choose processing time for scenarios where speed and immediate response matter most.

| Use Case | Preferred Timestamp | Reason for Choice |
| --- | --- | --- |
| Financial Trading | Event Time | Ensures regulatory compliance and accuracy |
| Healthcare Monitoring | Event Time | Maintains reliable patient records |
| Security Analytics | Event Time | Reconstructs attack timelines |
| System Monitoring | Processing Time | Delivers rapid alerts |
| IoT Device Management | Ingestion Time | Balances speed and data flow |

Organizations must evaluate their requirements before selecting a timestamp strategy. Event time supports applications where accuracy and completeness outweigh latency. Processing time suits environments that demand instant feedback and operational speed.

Event Timestamp in Time Series Data

Temporal Integrity

Time series data relies on accurate event timestamp assignment to preserve temporal integrity. Analysts use timestamps to reconstruct the true sequence of events, which is essential for meaningful time series analytics. Regularly sampled time series data provides continuous measurements, while event-driven data often arrives at irregular intervals. This irregularity complicates analysis, making precise timestamp handling critical. Timing uncertainty or jitter can distort the measurement of similarity between event sequences. Analysts must select appropriate time intervals for analysis, as poor choices can result in empty event sequences or undefined costs in recurrence studies. Missing events and sampling gaps introduce bias, which can affect quantitative results. Specialized approaches help maintain temporal integrity, especially when comparing event-driven series with continuous measurements. Well-defined event timestamps ensure that time series data remains reliable and supports accurate analytical outcomes.

Data Consistency

Maintaining data consistency in time series data requires careful management of event timestamps, both in how records are written and in how time values are represented.

On the write path, document-oriented time series stores such as MongoDB time series collections benefit from a few habits. A consistent field order in documents improves insert performance. Batch writes with tuned parameters reduce network overhead and increase throughput. Using the metaField as the shard key provides sufficient cardinality and balances data distribution. Bucket granularity should match the data ingestion rate, which improves storage efficiency and query performance. Increasing the number of concurrent clients writing data can raise ingestion throughput.

On the representation side, storing event timestamps in UTC avoids ambiguity caused by time zone changes or Daylight Saving Time. Standard formats like ISO 8601 ensure clarity and prevent confusion. Associating time zones with metadata enables accurate conversions and comparisons. System-provided time zone conversion functions handle complex rules and reduce errors. Timestamp conversions should occur only when necessary to minimize complexity. Using correct data types for timestamps prevents logical errors during conversions. Understanding the relationship between time zones and offsets is vital, as the offset for a given zone can change over time. Rigorous testing verifies the correctness of conversions and transformations, ensuring consistent and accurate time series data.
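As a small illustration of the UTC and ISO 8601 advice, the sketch below normalizes ISO 8601 strings to UTC with Python's standard library. The helper name `to_utc` and the sample values are assumptions for this example; rejecting offset-free input is one possible policy, not the only one.

```python
from datetime import datetime, timezone


def to_utc(iso_text: str) -> datetime:
    """Parse an ISO 8601 timestamp and normalize it to UTC.

    Timestamps without an explicit offset are rejected rather than guessed,
    because their meaning depends on an unknown local time zone.
    """
    parsed = datetime.fromisoformat(iso_text)
    if parsed.tzinfo is None:
        raise ValueError(f"timestamp has no UTC offset: {iso_text!r}")
    return parsed.astimezone(timezone.utc)


# Two spellings of the same instant compare equal once normalized.
local_form = to_utc("2024-01-15T09:00:00-08:00")
utc_form = to_utc("2024-01-15T17:00:00+00:00")

print(local_form == utc_form)  # True
print(local_form.isoformat())  # 2024-01-15T17:00:00+00:00
```

Normalizing at the boundary means every downstream comparison works on values in a single reference frame.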

Time-Zone Handling

Organizations face challenges when storing and analyzing event timestamps across different time zones. Standardizing all timestamps to UTC upon ingestion ensures consistency and simplifies integration. Storing explicit time zone offsets alongside local timestamps allows accurate conversion back to local event times. Centralized timestamp handling logic and clear documentation promote uniform understanding and governance. Modern cloud-native data infrastructure and data lakehouse architectures enforce timestamp standardization and schema governance. Specialized date-time libraries manage complex calculations, including daylight saving changes and leap seconds. Rigorous testing, including simulation-based and automated regression tests, prevents deployment issues in distributed environments. Demographic surveillance research highlights the need to store temporal data with explicit precision and uncertainty, avoiding assumptions about time accuracy. Validating temporal integrity and referential integrity ensures timestamps are well-formed and logically consistent. Modifying timestamps by shifting, splitting, or changing granularity requires calculations that respect varying precision levels. Adopting standards like ISO 8601 provides uniform representation of time values, supporting reliable time series analytics.

Event-Time Processing

Event-time processing enables accurate and reliable analytics in modern data stream environments. By anchoring computations to the original event time, platforms can handle late events and out-of-order arrivals without sacrificing data quality. This approach is essential for any event streaming platform that must maintain correct event order, even when network delays or system failures introduce event-time skew.

Windowing

Windowing divides a continuous data stream into manageable segments for time-bound processing. Each window groups events based on specific criteria, allowing the platform to perform aggregations, counts, or other analytics within defined intervals.

Event Time Windows

Event time windows use the actual occurrence time of each event to assign it to the correct window. This method ensures that analytics reflect the true sequence of events, even if some data arrives late or out of order. Tumbling, sliding, and session windows are common strategies. Tumbling windows create fixed, non-overlapping intervals, while sliding windows overlap and provide continuous updates. Session windows group events based on periods of activity, capturing dynamic user behavior. Event-time based processing with these windows supports high watermark accuracy and robust time-bound processing.
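The three window types differ mainly in how an event timestamp maps to window boundaries. The following sketch assumes integer timestamps in seconds and half-open `[start, end)` windows aligned to multiples of the window size or slide; the function names are illustrative and do not correspond to a specific framework's API.

```python
from typing import List, Tuple

Window = Tuple[int, int]  # tumbling and sliding windows are half-open: [start, end)


def tumbling_window(ts: int, size: int) -> Window:
    """The single fixed, non-overlapping window that contains ts."""
    start = ts - ts % size
    return (start, start + size)


def sliding_windows(ts: int, size: int, slide: int) -> List[Window]:
    """Every overlapping window of length size (one per slide) that contains ts."""
    windows = []
    start = ts - ts % slide  # latest window start that is <= ts
    while start > ts - size:
        windows.append((start, start + size))
        start -= slide
    return sorted(windows)


def session_windows(timestamps: List[int], gap: int) -> List[Window]:
    """Group events into sessions; a pause longer than gap starts a new session.

    Each session is reported as (first_event_time, last_event_time).
    """
    sessions: List[Window] = []
    for ts in sorted(timestamps):
        if sessions and ts - sessions[-1][1] <= gap:
            sessions[-1] = (sessions[-1][0], ts)  # extend the current session
        else:
            sessions.append((ts, ts))             # start a new session
    return sessions


print(tumbling_window(125, size=60))                  # (120, 180)
print(sliding_windows(125, size=60, slide=30))        # [(90, 150), (120, 180)]
print(session_windows([0, 10, 20, 100, 105], gap=30)) # [(0, 20), (100, 105)]
```

Because assignment depends only on the event timestamp, a late event still lands in the window where it belongs.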

Processing Time Windows

Processing time windows assign events to windows based on when the platform processes them. This approach is simpler but less accurate, as it cannot correct for event-time skew or late arrivals. While suitable for scenarios where immediate feedback is more important than precision, it may lead to misleading analytics in environments with frequent late events.

Late Arrivals

Late events occur when data arrives after the expected window has closed. These late arrivals can disrupt the chronological order of the data stream, leading to inaccurate reports and unreliable predictive models. To address this, event-time processing frameworks implement buffer periods and grace intervals, allowing the system to include late events before finalizing results. Platforms like Apache Flink and Kafka Streams offer configurable grace periods and allowed lateness settings, balancing latency and accuracy. Monitoring pipeline performance and automating retry logic further enhance the system's ability to handle late arrivals.

Watermarks

Watermarks play a critical role in event-time processing. They act as markers that indicate the progress of event time within the data stream. Watermark generation helps the platform decide when it can safely finalize a window, on the assumption that no more events with earlier timestamps will arrive. For example, once the watermark reaches 2:05 PM, the system can finalize windows ending at or before that time; any event with an earlier timestamp that arrives afterwards is treated as a late event. Watermark strategy and watermark accuracy directly impact how well the system manages out-of-order events and event-time skew. Apache Flink uses advanced watermark generation to track event time progress, while Kafka Streams applies grace periods to manage late data. These techniques ensure that time-bound processing remains both timely and accurate, even in complex, real-world data stream scenarios.

Tip: Choosing the right watermark strategy and configuring allowed lateness are crucial for balancing real-time responsiveness with data completeness in any event streaming platform.
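To make the interaction between watermarks and allowed lateness concrete, here is a minimal sketch of a tumbling-window counter. It assumes a bounded out-of-orderness watermark (highest event time seen minus a fixed bound) and integer timestamps in seconds; the class `TumblingCounter` and its parameters are hypothetical, and state cleanup is omitted for brevity. It is not the implementation used by Flink or Kafka Streams.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple


class TumblingCounter:
    """Counts events per tumbling window, driven by a simple watermark."""

    def __init__(self, size: int, max_out_of_orderness: int, allowed_lateness: int = 0):
        self.size = size
        self.max_out_of_orderness = max_out_of_orderness
        self.allowed_lateness = allowed_lateness
        self.watermark = float("-inf")
        self.counts: Dict[int, int] = defaultdict(int)
        self.fired: Set[int] = set()   # window starts already emitted once
        self.dropped: List[int] = []   # events that arrived too late

    def on_event(self, event_time: int) -> List[Tuple[int, int]]:
        """Process one event; return the (window_start, count) results it triggers."""
        start = event_time - event_time % self.size
        end = start + self.size
        emitted: List[Tuple[int, int]] = []

        if end + self.allowed_lateness <= self.watermark:
            self.dropped.append(event_time)  # past the allowed lateness
        else:
            self.counts[start] += 1
            if start in self.fired:
                # Late but within allowed lateness: emit an updated result.
                emitted.append((start, self.counts[start]))

        # Watermark: no event older than this is expected any more.
        self.watermark = max(self.watermark, event_time - self.max_out_of_orderness)

        # Fire every window whose end the watermark has now passed.
        for s in sorted(self.counts):
            if s not in self.fired and s + self.size <= self.watermark:
                self.fired.add(s)
                emitted.append((s, self.counts[s]))
        return emitted


counter = TumblingCounter(size=60, max_out_of_orderness=10, allowed_lateness=30)
for ts in [5, 50, 65, 40, 75, 55, 130, 20]:
    print(ts, counter.on_event(ts))
# 75 fires window 0 with count 3; 55 is late and updates it to 4;
# 130 fires window 60 with count 2; 20 is too late and is dropped.
```

Raising `max_out_of_orderness` delays results but makes late events rarer, while `allowed_lateness` keeps results prompt and corrects them afterwards.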

Choosing the Right Timestamp

Reliability

Reliability stands as a critical factor when selecting a timestamp type for any event processing application. Distributed systems often struggle with clock synchronization. Each node may record a slightly different time, leading to inconsistencies. For example, if two nodes receive updates at nearly the same moment, their local clocks might assign timestamps that do not reflect the true order of events. This discrepancy can cause data loss or incorrect event ordering, especially when systems use last-write-wins conflict resolution.

Timestamps from unsynchronized clocks can create anomalies. Logical event order may not match the recorded timestamp order. Perfect global time synchronization remains difficult, so small discrepancies are common. To improve reliability, some organizations use centralized timestamp generation or logical clocks. These methods reduce the risk of errors caused by clock skew. Consensus algorithms in distributed datastores also help maintain consistency, offering stronger guarantees than relying on physical timestamps alone.

Note: Making users aware of timestamp limitations and enforcing consistency checks can help maintain reliable event flow in distributed environments.
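Logical clocks, mentioned above as an alternative to physical timestamps, can be sketched in a few lines. The example below is a minimal Lamport clock with illustrative class and method names; it orders causally related events without relying on synchronized wall clocks.

```python
class LamportClock:
    """Minimal Lamport logical clock: a counter that respects causality."""

    def __init__(self) -> None:
        self.time = 0

    def tick(self) -> int:
        """Advance the clock for a local event or before sending a message."""
        self.time += 1
        return self.time

    def receive(self, remote_time: int) -> int:
        """Merge the sender's timestamp so the receive is ordered after the send."""
        self.time = max(self.time, remote_time) + 1
        return self.time


node_a, node_b = LamportClock(), LamportClock()

node_a.tick()
node_a.tick()
sent = node_a.tick()             # node A sends a message stamped 3
received = node_b.receive(sent)  # node B's counter was still at 0

print(sent, received)  # 3 4
```

The receive event always carries a higher logical timestamp than the send, regardless of how far apart the two nodes' physical clocks have drifted.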

Latency Needs

Latency requirements often drive the choice between event time and processing time. Event time enables accurate analytics by accounting for out-of-order and late-arriving data. However, this approach introduces latency because the system waits for straggler events to ensure correctness. In contrast, processing time processes events as they arrive, offering lower latency and operational simplicity. This method suits applications that prioritize speed over perfect accuracy.

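The following table compares latency and throughput for two execution models, pure real-time streaming and micro-batch processing. This choice is separate from the timestamp type, but it sets the baseline latency on top of which any event-time waiting is added:

| Dimension | Pure Real-Time Streaming | Micro-Batch |
| --- | --- | --- |
| Latency | Very low (10–500 ms) | Moderate (1–30 seconds) |
| Throughput | Moderate | High |
| Ideal Use | Time-critical decisions | High-volume analytics |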

Event-driven architectures support real-time ingestion, reducing load on source systems and supporting use cases that require fresh data, such as real-time analytics and fraud detection. Poll-based ETL pipelines, on the other hand, introduce delays and are unsuitable for applications with strict latency needs.

Tip: Choose event time for mission-critical applications where analytic reliability outweighs minimal latency. Select processing time for scenarios where immediate feedback is essential.

Correctness

Correctness ensures that analytics workflows produce accurate and trustworthy results. Proper handling of time zones and timestamp standardization is essential. Without consistent time zone management, timestamps from different locations can become misaligned, causing errors. This issue becomes especially important in event-driven systems where precise sequencing is required. Using UTC and centralized timestamp standards helps maintain accuracy.

Selecting the appropriate timestamp data type also affects correctness. Using a less precise type, such as DATE, can cause loss of time information. This loss leads to inaccurate records and flawed analyses. DATETIME or TIMESTAMP types preserve both date and time, enabling precise analytics.
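The loss of information is easy to demonstrate. In this short sketch, two made-up events on the same day are clearly ordered as full timestamps but become indistinguishable once truncated to a date.

```python
from datetime import datetime, timezone

# Illustrative values: two events on the same calendar day.
first = datetime(2024, 3, 1, 9, 15, tzinfo=timezone.utc)
second = datetime(2024, 3, 1, 17, 45, tzinfo=timezone.utc)

print(first < second)                 # True: full timestamps preserve order
print(second - first)                 # 8:30:00
print(first.date() == second.date())  # True: as dates, the order is lost
```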

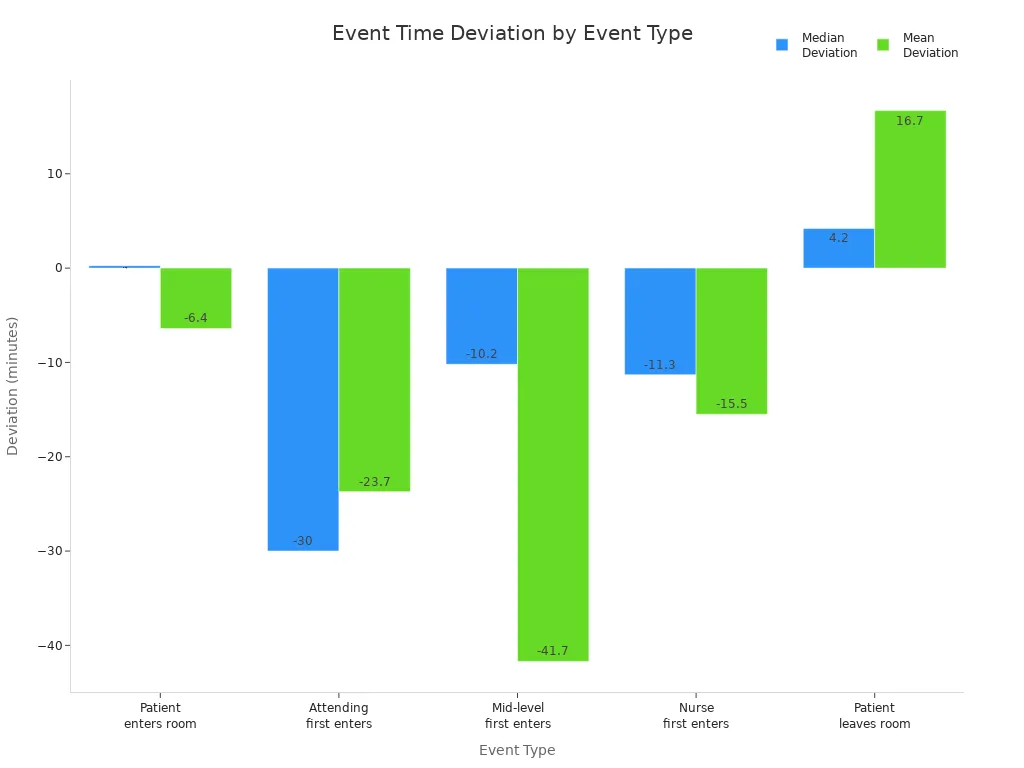

A study on emergency department tracking systems highlights how event time correctness can vary by event type. Premature or delayed recordings impact accuracy. The chart below shows median and mean deviations for different event types:

Median values often provide more reliable insights than means, which can be skewed by outliers. Organizations should validate timestamp handling and ensure that their application uses the correct data type and time zone standards to maintain analytic integrity.

Decision Guide

Selecting the right timestamp type for any data-driven application requires a structured approach. Teams can follow these steps to ensure accuracy, consistency, and future-proofing:

1. Capture the User’s Timezone Name: Store the full timezone name, such as 'America/Los_Angeles', instead of just an offset. This method accounts for daylight saving changes and regional differences.

2. Accept Local Event Times: Allow users or devices to submit event times in their local timezone. This practice improves usability and reduces confusion during data entry.

3. Convert to UTC for Storage: Standardize all event times by converting them to Coordinated Universal Time (UTC) before storage. Use a timestamp type that supports time zone awareness, such as TIMESTAMP WITH TIME ZONE. This approach ensures consistency across distributed systems.

4. Retrieve and Display in Local Time: When presenting data, convert UTC timestamps back to the user’s local timezone. This step maintains clarity and relevance for end users.

5. Set Database Timezone to UTC: Configure the database to use UTC as the default timezone. This setting prevents errors caused by implicit timezone conversions and supports reliable analytics.

Tip: Always enforce UTC offset zero on stored timestamps. This practice eliminates ambiguity and supports accurate event sequencing.
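The capture, convert, and display steps can be sketched with Python's standard `zoneinfo` module (Python 3.9+, which needs time zone data from the operating system or the `tzdata` package). The helper names `to_storage` and `to_display` are assumptions for this example, and ambiguous or nonexistent local times around daylight saving transitions are not handled.

```python
from datetime import datetime, timezone
from zoneinfo import ZoneInfo


def to_storage(local_naive: datetime, tz_name: str) -> datetime:
    """Interpret a naive wall-clock time in the named zone and convert it to UTC."""
    return local_naive.replace(tzinfo=ZoneInfo(tz_name)).astimezone(timezone.utc)


def to_display(stored_utc: datetime, tz_name: str) -> datetime:
    """Convert a stored UTC timestamp back to the user's zone for presentation."""
    return stored_utc.astimezone(ZoneInfo(tz_name))


tz_name = "America/Los_Angeles"          # captured once, stored with the user
submitted = datetime(2024, 7, 15, 9, 0)  # local time as the user entered it

stored = to_storage(submitted, tz_name)
print(stored.isoformat())   # 2024-07-15T16:00:00+00:00

shown = to_display(stored, tz_name)
print(shown.isoformat())    # 2024-07-15T09:00:00-07:00
```

Keeping the zone name rather than the `-07:00` offset matters because the same user submits times at `-08:00` in winter.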

The following table summarizes common timestamp types and their recommended use cases:

| Timestamp Type | Description | Use Case | Timezone Handling | Precision |
| --- | --- | --- | --- | --- |
| DATE | Calendar date only (YYYY-MM-DD) | Milestones, birthdays, anniversaries | No timezone | Day-level |
| TIME | Time of day only (HH:MM:SS) | Daily schedules, recurring events | No timezone | Seconds |
| DATETIME | Date and time without timezone (YYYY-MM-DD HH:MM:SS) | Civil time, logs without cross-region needs | No timezone | Microseconds |
| TIMESTAMP | Absolute point in time with timezone awareness | Precise event timing, global coordination | Timezone-aware, absolute | Microseconds |

A well-defined timestamp strategy supports reliable analytics, compliance, and seamless user experiences. Teams should review these steps at the start of every new project to avoid costly migrations or data inconsistencies later.

Event time differs from other timestamp types by recording when an event actually occurred, while the others record when a system received, processed, or stored it. Accurate timestamp selection improves data quality and system reliability, as seen in industries like healthcare and finance where compliance demands precise tracking. Poor timestamp management can cause outages, misaligned alerts, and analytic errors.

Quick Decision Checklist:

Identify event type and required precision

Standardize time zones and formats

Align timestamp choice with compliance needs

Monitor for anomalies and update processes regularly

FAQ

What is the main advantage of using event time in analytics?

Event time provides accurate sequencing of events. Analysts can trust the timeline, even when data arrives late or out of order. This accuracy supports compliance and reliable reporting.

How does processing time differ from event time?

Processing time records when a system handles an event. Event time captures when the event actually occurred. Processing time may distort the true order if delays happen.

Why do organizations standardize timestamps to UTC?

UTC eliminates confusion from time zones and daylight saving changes. Teams achieve consistency across global systems. This practice simplifies data integration and analysis.

Can event time handle late-arriving data?

Yes. Event time frameworks use watermarks and grace periods to include late events. This approach maintains data completeness and prevents loss of important information.

Which timestamp type suits real-time alerting best?

Processing time works well for real-time alerting. Systems react instantly to incoming data. This method prioritizes speed over historical accuracy.

What challenges arise with out-of-order events?

Out-of-order events can disrupt analytics. Event time helps restore the correct sequence. Systems using only processing or ingestion time may report misleading results.

How should teams choose the right timestamp type?

Teams should assess accuracy needs, latency requirements, and compliance standards. A decision checklist helps select the best timestamp type for each use case.
