Latest Developments in Incremental Computation You Should Know

Community Contribution


Incremental computation is helping technology run faster and more efficiently. Developers are seeing it spread alongside AI, edge devices, and new quantum methods. These changes make systems smoother and easier to use in practice, and businesses get answers faster and can adapt quickly in tough markets.

Incremental computation changes how teams fix problems in today’s digital world.

Key Takeaways

Incremental computation recomputes only the parts of a result affected by changed inputs. This makes systems faster and saves resources.

New algorithms make incremental methods faster and more accurate. They do this in AI, image processing, and data analysis by handling changes efficiently.

Edge computing and AI use incremental computation to process data quickly, close to where it is produced. This cuts delays and keeps data more private.

Quantum computing research uses incremental methods to work with large, changing data sets. This can speed up learning and reduce memory use.

Businesses and developers should keep learning about incremental computation. They should use it to stay ahead and deal with fast-changing technology.

Incremental Computation Trends

Algorithms

Researchers are developing new algorithms that make incremental computation faster and more efficient. In 2024, researchers published an algorithm for the incremental computation of the set of period sets, a problem relevant to stringology, code design, and bioinformatics. It builds the period sets for longer words from those for shorter words. This saves memory and supports analyzing data as it changes. The algorithm also verifies that each period set it produces is valid, which makes its results more reliable.
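The paper's algorithm works on the set of all period sets and is not reproduced here. As a smaller illustration of the same extend-instead-of-rebuild idea, the Python sketch below keeps the period set of a single word current as characters are appended, using the classic KMP border (failure) array: each append costs amortized O(1), and the periods are the word length minus each border length. The class name `IncrementalPeriods` is a hypothetical choice for this example.

```python
class IncrementalPeriods:
    """Maintain the KMP border array of a word as characters are appended.

    A period p of a word of length n corresponds to a border of length n - p,
    so the period set can be read off the border chain at any time.
    """

    def __init__(self) -> None:
        self.word: list[str] = []
        self.fail: list[int] = []  # fail[i] = longest proper border of word[:i + 1]

    def append(self, ch: str) -> None:
        if not self.word:
            self.word.append(ch)
            self.fail.append(0)
            return
        k = self.fail[-1]
        # Fall back along shorter borders until one can be extended by ch.
        while k > 0 and self.word[k] != ch:
            k = self.fail[k - 1]
        if self.word[k] == ch:
            k += 1
        self.word.append(ch)
        self.fail.append(k)

    def periods(self) -> set[int]:
        """Period set of the current word: n - b for every border length b, including 0."""
        n = len(self.word)
        if n == 0:
            return set()
        result = set()
        b = self.fail[-1]
        while True:
            result.add(n - b)
            if b == 0:
                break
            b = self.fail[b - 1]
        return result
```

Because the border array is only ever extended, the period set of every prefix is available along the way without rescanning the word.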

Another new method combines local neighborhood rough sets with composite entropy for feature selection. It uses matrix operations and parallel computing to handle large, changing data sets, and it updates quickly when the data changes, which improves both speed and accuracy. In image processing, a new algorithm computes contours in component trees faster by incrementally counting contour pixel edges instead of rebuilding nodes. It outperforms earlier methods.
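The component-tree algorithm itself is more involved than can be shown here. The core counting idea can be sketched under a simplifying assumption: a region is a set of pixels with 4-connectivity, and its contour length is the number of pixel edges it shares with pixels outside the region. Adding a pixel with k neighbors already in the region changes that count by 4 - 2k, so no contour has to be retraced. The class and method names are hypothetical.

```python
# 4-connected neighbor offsets
OFFSETS = ((1, 0), (-1, 0), (0, 1), (0, -1))


class IncrementalContour:
    """Track the number of contour (boundary) pixel edges of a growing or shrinking region."""

    def __init__(self) -> None:
        self.pixels: set[tuple[int, int]] = set()
        self.edge_count = 0

    def _neighbors_inside(self, x: int, y: int) -> int:
        return sum((x + dx, y + dy) in self.pixels for dx, dy in OFFSETS)

    def add(self, x: int, y: int) -> None:
        if (x, y) in self.pixels:
            return
        k = self._neighbors_inside(x, y)
        self.pixels.add((x, y))
        # 4 new edges, minus the k edges this pixel shares with the region,
        # minus the k edges those neighbors no longer expose.
        self.edge_count += 4 - 2 * k

    def remove(self, x: int, y: int) -> None:
        if (x, y) not in self.pixels:
            return
        self.pixels.remove((x, y))
        k = self._neighbors_inside(x, y)
        # Exact reverse of add().
        self.edge_count -= 4 - 2 * k
```

Each update costs four set lookups regardless of region size, which is the property that makes edge counting cheaper than rebuilding a contour.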

The table below shows these new algorithms:

| Algorithm Name/Focus | Domain/Application | Key Innovation | Benefits |
| --- | --- | --- | --- |
| Incremental computation of the set of period sets | Stringology, code design, bioinformatics | Builds period sets incrementally, reduces space complexity | Enables dynamic analysis, improves verification with constructive certification |
| Incremental feature selection with local neighborhood rough sets and composite entropy | Feature selection in dynamic data | Matrix operations, parallel computing | Efficient for large-scale data, adapts to changes, improves accuracy |
| Incremental component tree contour computation | Image processing, component tree analysis | Counts contour pixel edges incrementally | Faster runtime, detailed time complexity analysis, practical applications |

These algorithms point to a broader shift in technology for 2025: incremental computation helps systems run faster and better, and measuring incrementality and verifying solutions keeps them robust and trustworthy.

Space Complexity

Space complexity is very important in incremental computation. As data grows, saving memory matters more, and new studies aim to use less memory without losing performance. The period set algorithm builds sets step by step, which uses much less memory than earlier approaches.

Constructive certification is also important. The algorithm constructs a binary string whose period set matches the given one. It starts with the largest period and builds suffixes that satisfy the constraints. Along the way it checks for errors, such as suffixes of the wrong size, so it accepts only valid period sets. This keeps results correct and reliable, and the procedure runs in linear time. The steps for constructive certification are:

Constructs a binary string for the period set.

Builds suffixes from the largest period down.

Checks for errors in suffix sizes.

Accepts only valid period sets.

Runs in linear time.
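The constructive half of the certification (building the string from the largest period down) is specific to the paper and is not reproduced here. The checking half is simple to sketch: given a candidate binary string and a claimed period set, compute the string's actual period set in linear time and compare. The sketch assumes the Z-function as the linear-time tool, a standard technique that may differ from the paper's; the function names are hypothetical. A shift p is a period exactly when the suffix starting at p matches the prefix of the same length, that is, when z[p] == n - p.

```python
def z_array(s: str) -> list[int]:
    """z[i] = length of the longest common prefix of s and s[i:] (linear time)."""
    n = len(s)
    z = [0] * n
    if n:
        z[0] = n
    left = right = 0  # [left, right) is the rightmost window known to match a prefix
    for i in range(1, n):
        if i < right:
            z[i] = min(right - i, z[i - left])
        while i + z[i] < n and s[z[i]] == s[i + z[i]]:
            z[i] += 1
        if i + z[i] > right:
            left, right = i, i + z[i]
    return z


def period_set(s: str) -> set[int]:
    """All periods p in 1..n of s; n itself is always a period of a nonempty string."""
    n = len(s)
    if n == 0:
        return set()
    z = z_array(s)
    return {p for p in range(1, n) if z[p] == n - p} | {n}


def certifies(candidate: str, claimed: set[int]) -> bool:
    """True if the candidate string realizes exactly the claimed period set."""
    return set(claimed) == period_set(candidate)
```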

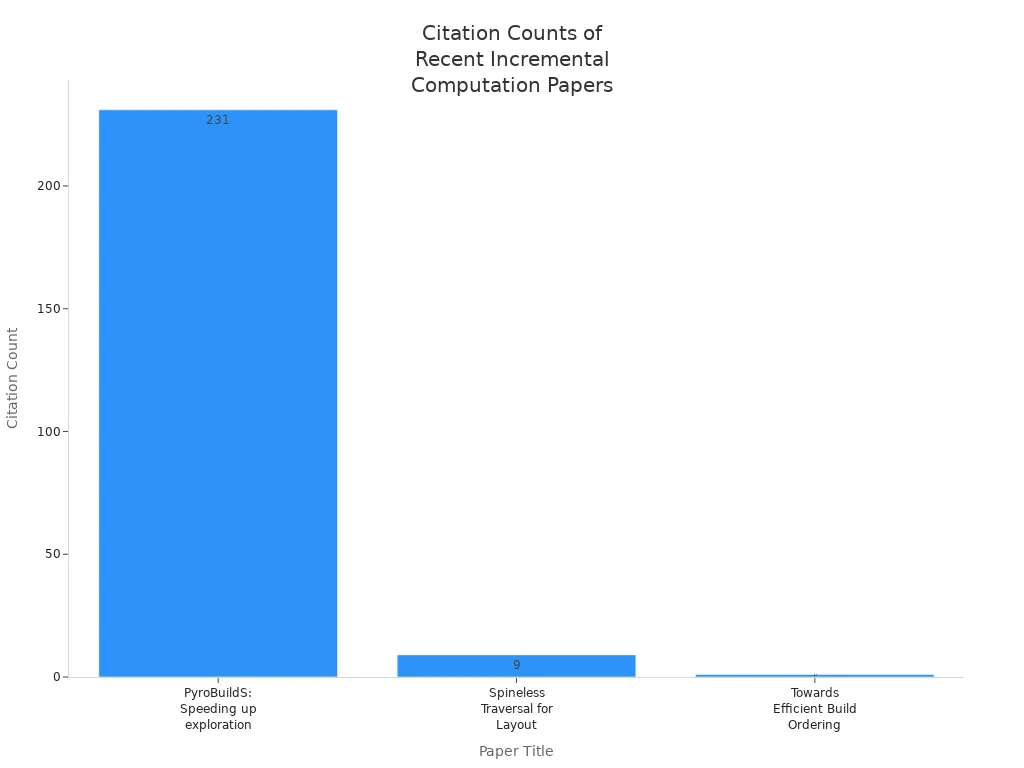

The most cited papers show how much interest there is in incremental computation. The table below lists important papers and their citation numbers:

| Paper Title | Publication Year | Citation Count |
| --- | --- | --- |
| PyroBuildS: Speeding up the exploration of large configuration spaces with incremental build | 2026 | 231 |
| Spineless Traversal for Layout Invalidation | 2025 | 9 |
| AUTOINC: Incrementality for Free | 2024 | N/A |
| Towards Efficient Build Ordering for Incremental Builds with Multiple Configurations | 2024 | 1 |

These papers show growing research interest in incremental computation. Most new ideas are in data and analytics, where technology trends push for lower costs, faster answers, and more frequent updates. Real-time OLAP, cloud data warehouses, and stream processing now use incremental methods to save money, reduce latency, and refresh data more often.
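Real-time OLAP engines and stream processors typically keep aggregates current by applying each change as a delta instead of rescanning the table. Below is a minimal Python sketch of maintaining `SELECT key, SUM(amount), COUNT(*) ... GROUP BY key` this way. It is not any particular engine's API, the class name is hypothetical, and it assumes every delete refers to a row that was previously inserted.

```python
from collections import defaultdict


class GroupBySumView:
    """Materialized SUM/COUNT per key, maintained from row-level inserts and deletes."""

    def __init__(self) -> None:
        self.sums = defaultdict(int)    # key -> running SUM(amount)
        self.counts = defaultdict(int)  # key -> running COUNT(*)

    def insert(self, key: str, amount: int) -> None:
        self.sums[key] += amount
        self.counts[key] += 1

    def delete(self, key: str, amount: int) -> None:
        # Assumes (key, amount) was inserted earlier.
        self.sums[key] -= amount
        self.counts[key] -= 1
        if self.counts[key] == 0:
            # An empty group disappears from a GROUP BY result.
            del self.sums[key]
            del self.counts[key]

    def snapshot(self) -> dict[str, tuple[int, int]]:
        return {key: (self.sums[key], self.counts[key]) for key in self.counts}
```

Each insert or delete touches one group in constant time, however large the underlying table has grown.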

Note: Incremental computation is most popular in data and analytics. Quantum and edge computing use other models. Data-driven technology is leading the way for analytics and operations.

Technology Trends

AI Integration

AI is changing technology in 2025, and incremental computation is now central to it. Teams use it to update models quickly when new data arrives, so AI systems can learn and adapt in real time. This is needed for recommendation engines and smart robots. AI systems train faster and respond better, and developers get quick feedback and better predictions. Incremental computation also lets AI work with large data sets without starting over each time, which helps AI grow in many fields.
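One common way to update a model without retraining from scratch is online stochastic gradient descent: each new example nudges the parameters once and is then discarded. The sketch below fits a one-feature linear model y ≈ w * x + b this way. The class name and learning rate are illustrative assumptions; real systems would add feature scaling, regularization, and drift handling.

```python
class OnlineLinearModel:
    """One-feature linear model y ~ w * x + b, updated one example at a time."""

    def __init__(self, learning_rate: float = 0.1) -> None:
        self.w = 0.0
        self.b = 0.0
        self.learning_rate = learning_rate

    def predict(self, x: float) -> float:
        return self.w * x + self.b

    def update(self, x: float, y: float) -> None:
        # One gradient step on the squared error of this example only;
        # earlier examples are never revisited or stored.
        error = y - self.predict(x)
        self.w += self.learning_rate * error * x
        self.b += self.learning_rate * error
```

In a streaming setting, `update` is called as each labeled example arrives, and `predict` can be used at any moment with the latest parameters.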

Edge Computing

Edge computing is now a major part of technology. Incremental computation helps edge devices process data close to where it is produced, updating results as new data arrives. This cuts wait times and supports fast decisions. The table below shows the main benefits of using incremental computation at the edge:

| Benefit | Explanation |
| --- | --- |
| Reduced Latency | Processing data near the source means less waiting. This is important for applications like self-driving cars. |
| Enhanced Data Privacy & Security | Processing data locally keeps it safer. It does not travel far and can stay within strict data rules. |
| Real-Time Processing | Edge devices can analyze data and act right away. This is key for robots and factories. |
| Lower Bandwidth Usage | Local processing means less data sent to the cloud. This saves network capacity and money. |
| Improved Reliability & Resilience | Edge devices keep working even if the network goes down, which keeps systems running and safe. |
| Scalability & Flexibility | You can add edge devices gradually to fit different locations and needs. |
| Cost Efficiency | Local processing costs less. It suits IoT and sensor deployments. |

Edge devices can learn from new data and store it locally. This keeps data private and lets devices react faster, which suits IoT well.
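A device that learns from local data often needs only running statistics, not the raw history. Welford's online algorithm updates a mean and variance in O(1) time and memory per reading, so a device could send just a small summary upstream. The sketch assumes a single numeric sensor stream; the class and method names are hypothetical.

```python
import math


class RunningStats:
    """Welford's online algorithm: running mean and variance without storing readings."""

    def __init__(self) -> None:
        self.n = 0
        self.mean = 0.0
        self._m2 = 0.0  # sum of squared deviations from the current mean

    def add(self, reading: float) -> None:
        self.n += 1
        delta = reading - self.mean
        self.mean += delta / self.n
        # Uses both the old deviation (delta) and the updated mean for numerical stability.
        self._m2 += delta * (reading - self.mean)

    @property
    def variance(self) -> float:
        """Sample variance; NaN until at least two readings have arrived."""
        return self._m2 / (self.n - 1) if self.n > 1 else math.nan

    def summary(self) -> dict[str, float]:
        """Compact payload a device could send upstream instead of raw readings."""
        return {"n": self.n, "mean": self.mean, "variance": self.variance}
```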

Quantum Influence

Quantum computing teams use incremental computation to improve their work. The Quantum Incremental Clustering Algorithm System (QICAS) adapts classical clustering for quantum computers. QICAS uses quantum properties such as superposition and entanglement to handle large, changing data sets, with the aim of making learning faster and more accurate. Platforms such as the Amazon Braket SV1 simulator and the Rigetti Aspen-9 quantum processor support this work. Teams also experiment with hybrid quantum-classical systems and quantum compression, which use less memory and help systems scale. Quantum attention methods aim to run faster and use less power.

Quantum teams combine quantum and classical computers in hybrid systems.

Quantum attention makes things faster and saves energy.

Superposition and entanglement let quantum algorithms represent many states at once.

These new ideas help machine learning and data work in quantum computers.
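QICAS depends on quantum hardware and is not reproduced here. For contrast, the classical idea in its name, incremental clustering, can be sketched in a few lines: each new point updates only its nearest centroid with a running-mean step, so earlier points never need to be revisited. This is sequential (MacQueen-style) k-means in plain Python, not QICAS; the seed centroids and names are illustrative assumptions.

```python
import math


class OnlineKMeans:
    """Sequential k-means: each new point moves only its nearest centroid."""

    def __init__(self, initial_centroids: list[tuple[float, ...]]) -> None:
        self.centroids = [list(c) for c in initial_centroids]
        self.counts = [1] * len(self.centroids)  # each seed counts as one observed point

    def nearest(self, point: tuple[float, ...]) -> int:
        return min(range(len(self.centroids)),
                   key=lambda i: math.dist(self.centroids[i], point))

    def add(self, point: tuple[float, ...]) -> int:
        """Assign the point to a cluster and update that centroid; returns the cluster index."""
        i = self.nearest(point)
        self.counts[i] += 1
        step = 1.0 / self.counts[i]  # running-mean step size
        centroid = self.centroids[i]
        for d in range(len(centroid)):
            centroid[d] += step * (point[d] - centroid[d])
        return i
```

With this step size, each centroid stays equal to the mean of its seed and every point assigned to it so far.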

Incremental computation keeps making AI, edge, and quantum systems smarter and faster.

Real-World Applications

Retail Media Predictions

Retail media networks use incremental computation to help brands and advertisers. Instacart uses it to give brands real-time campaign insights, so brands can see how ads are performing right away and adjust their plans quickly. This makes retail media networks more transparent, and they bring together different platforms to give brands useful data.

In 2025, retail media is expected to adopt omnichannel incrementality models. These models rely on clean, well-organized data for reliable updates and help measure the true effect of ad campaigns. Retail media players compare sales in stores with and without ads, which works like a natural experiment. Brands can then measure ad returns and incremental ROAS (return on ad spend) without artificial test setups, so they make better choices and spend more wisely across channels.
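The store-level comparison can be sketched as simple arithmetic: treat stores without ads as a control group, attribute the difference in average sales per store to the ads, and divide that incremental revenue by ad spend to get incremental ROAS. The numbers and function name are hypothetical, and a production incrementality model would typically also adjust for factors such as store size and seasonality.

```python
def incremental_roas(test_sales: list[float], control_sales: list[float], ad_spend: float) -> float:
    """Estimate incremental ROAS from test (ads) and control (no ads) stores.

    Assumes the two store groups are comparable, so the control group's average
    sales per store approximate what test stores would have sold without ads.
    """
    test_avg = sum(test_sales) / len(test_sales)
    control_avg = sum(control_sales) / len(control_sales)
    lift_per_store = test_avg - control_avg
    incremental_revenue = lift_per_store * len(test_sales)
    return incremental_revenue / ad_spend
```

With test stores selling 120, 130, and 110, control stores selling 100 each, and an ad spend of 30, the function returns 2.0: each unit of spend produced two units of incremental revenue.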

Note: Incremental computation lets retail media networks give real-time, accurate insights. This helps brands get better results from their ads and spend money wisely.

Data Engineering

Data engineering teams use incremental computation to keep data pipelines fast. In genomics, scientists update only the parts they need, which saves time and money and speeds up genetic diagnosis. Teams identify which data needs updating and rerun only those steps instead of the whole pipeline, which saves resources.
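The "check what needs updating" step is often implemented by fingerprinting inputs and rerunning only the units whose fingerprints changed, much as build tools do. Below is a minimal Python sketch, assuming the pipeline can be split into independent units (for example, one per sample) and that inputs are JSON-serializable; `IncrementalRunner` and its methods are hypothetical.

```python
import hashlib
import json
from typing import Any, Callable


def fingerprint(data: Any) -> str:
    """Stable SHA-256 of JSON-serializable input (keys sorted so dict order does not matter)."""
    return hashlib.sha256(json.dumps(data, sort_keys=True).encode("utf-8")).hexdigest()


class IncrementalRunner:
    """Rerun an analysis only for units whose input fingerprint changed."""

    def __init__(self, analyze: Callable[[Any], Any]) -> None:
        self.analyze = analyze
        self.cache: dict[str, tuple[str, Any]] = {}  # unit -> (fingerprint, result)
        self.executed: list[str] = []  # units actually recomputed, for inspection

    def run(self, units: dict[str, Any]) -> dict[str, Any]:
        results = {}
        for name, data in units.items():
            fp = fingerprint(data)
            cached = self.cache.get(name)
            if cached is not None and cached[0] == fp:
                results[name] = cached[1]  # unchanged input: reuse the stored result
            else:
                result = self.analyze(data)
                self.cache[name] = (fp, result)
                self.executed.append(name)
                results[name] = result
        return results
```

Units with dependencies between them would also need their downstream units invalidated; this sketch leaves that out.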

Uber’s data team uses incremental ETL with Apache Hudi. When driver tips arrive late, only the affected records are updated, which keeps all data correct without reprocessing months of work. Uber Eats menu changes use the same method, making updates faster and easier. Incremental computation keeps data fresh, lowers wait times, and helps data lakes scale to handle more work.
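Apache Hudi supports record-level upserts and incremental queries that return only records changed after a given commit. The toy Python model below imitates that behavior to show why a late tip touches only one record. It is not Hudi's API, the names are hypothetical, and unlike a real table it scans every row to find changes, where a real system would consult commit metadata instead.

```python
from dataclasses import dataclass


@dataclass
class Record:
    key: str
    value: dict
    commit: int  # commit number that last wrote this record


class ToyUpsertTable:
    """Keyed table with upserts and 'changes since commit N' reads (illustrative only)."""

    def __init__(self) -> None:
        self.rows: dict[str, Record] = {}
        self.last_commit = 0

    def upsert(self, batch: dict[str, dict]) -> int:
        """Insert new keys or overwrite existing ones; returns the new commit number."""
        self.last_commit += 1
        for key, value in batch.items():
            self.rows[key] = Record(key, value, self.last_commit)
        return self.last_commit

    def changes_since(self, checkpoint: int) -> list[Record]:
        """Records written after `checkpoint`, sorted by key (full scan in this toy)."""
        changed = [r for r in self.rows.values() if r.commit > checkpoint]
        return sorted(changed, key=lambda r: r.key)
```

A downstream job that remembers the last commit it processed can call `changes_since` with that number and receive only the trips whose tips changed.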

Data engineering uses for incremental computation:

Running continuous queries over data streams

Reusing earlier results with tools like DBSP

Using newer, more expressive query languages, not only traditional ones
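DBSP represents data and its changes as Z-sets, collections whose rows carry integer weights (+1 for an insert, -1 for a delete), and derives incremental versions of query operators. For a join, the change in the output follows from the input changes alone: Δ(A ⋈ B) = ΔA ⋈ B + A ⋈ ΔB + ΔA ⋈ ΔB, where A and B are the old inputs. The Python sketch below checks that rule on a tiny example. It is a from-scratch illustration with hypothetical helper names, not the DBSP library's API, and it assumes every row is a (key, value) pair.

```python
from collections import Counter

ZSet = dict  # maps a row (tuple) to a nonzero integer weight


def zadd(a: ZSet, b: ZSet) -> ZSet:
    """Sum of two Z-sets; rows whose weights cancel to zero are dropped."""
    total = Counter(a)
    for row, weight in b.items():
        total[row] += weight
    return {row: w for row, w in total.items() if w != 0}


def join(a: ZSet, b: ZSet) -> ZSet:
    """Equi-join of (key, value) rows on key; output weights are products of input weights."""
    out = Counter()
    for (ka, va), wa in a.items():
        for (kb, vb), wb in b.items():
            if ka == kb:
                out[(ka, va, vb)] += wa * wb
    return {row: w for row, w in out.items() if w != 0}


def join_delta(a_old: ZSet, b_old: ZSet, da: ZSet, db: ZSet) -> ZSet:
    """Change in join(a, b) from input changes: da ⋈ b_old + a_old ⋈ db + da ⋈ db."""
    return zadd(zadd(join(da, b_old), join(a_old, db)), join(da, db))
```

Here the delta joins only touch changed rows against the stored inputs; a real engine would index those inputs by key instead of looping over them.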

These approaches help companies save money and improve their data. Incremental computation lets teams make small changes and react quickly to new opportunities.

Autonomous Agents

Autonomous agents use incremental computation to make better choices. They add new skills in steps, which lowers risk. Feedback helps them learn and get better over time.

Multiple agents work together using coordinated communication and control, which helps them make decisions and handle complex tasks as a team. Agents must keep learning in changing environments, relying on continual learning and error correction to stay robust.

| Approach | Description | Incremental Computation Role |
| --- | --- | --- |
| Agentic Pretraining | Agents learn which tool to use at each step. | Helps agents gain knowledge and make better choices. |
| Agentic Supervised Fine-tuning | Agents improve decisions with specialized data sets. | Lets agents learn step by step and act smarter. |
| Agentic Reinforcement Learning | Agents improve through trial and error, learning from feedback. | Fits incremental computation's model of continuous improvement. |
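Reinforcement learning agents commonly keep value estimates with an incremental update, Q ← Q + (r - Q) / n, which equals the average of all rewards seen so far without storing them. The epsilon-greedy bandit sketch below uses that rule; the class name and parameters are illustrative assumptions, not a specific agent framework.

```python
import random


class IncrementalBandit:
    """Epsilon-greedy multi-armed bandit with incremental sample-average value estimates."""

    def __init__(self, n_actions: int, epsilon: float = 0.1, seed: int = 0) -> None:
        self.q = [0.0] * n_actions  # estimated value of each action
        self.n = [0] * n_actions    # number of rewards observed for each action
        self.epsilon = epsilon
        self.rng = random.Random(seed)

    def choose(self) -> int:
        if self.rng.random() < self.epsilon:
            return self.rng.randrange(len(self.q))  # explore
        return max(range(len(self.q)), key=self.q.__getitem__)  # exploit

    def learn(self, action: int, reward: float) -> None:
        # Q <- Q + (reward - Q) / n equals the mean of all rewards for this action,
        # but needs no stored reward history.
        self.n[action] += 1
        self.q[action] += (reward - self.q[action]) / self.n[action]
```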

Incremental computation is also important for blockchains and machine learning. Some methods let clients verify results efficiently even as systems grow. These approaches help agents work safely and adapt in real-world settings.

Tip: Incremental computation helps agents learn and work together in real time. This makes them better in changing situations.

Performance & Usability

Benchmarks

Performance benchmarks show that incremental computation can be much faster than full recomputation. For example, the Incremental CodeQL project showed that updates finish in seconds, even for large code changes, while full re-analysis takes much longer. This speed helps teams act quickly when data or code changes. There are downsides, however: initial setup can take up to an hour, and memory use can be very high, sometimes tens of gigabytes. Teams need to weigh fast updates against high upfront resource use.

Benchmarks in different areas show how helpful incremental methods are:

| Application Domain | Benchmark Results | Key Insights |
| --- | --- | --- |
| Computational Chemistry | Error margin below 1 kcal/mol; big savings in CPU and memory | Parallelization works well up to 50 CPUs |
| Static Program Analysis | Speedups from 1.48x to 5.13x over non-incremental methods | Only changed code needs re-analysis |
| Natural Language Processing | Up to 97.89% accuracy with stable memory use | Learns new data quickly and keeps vocabulary relevant |

Teams get faster results and use resources better, but must plan for more memory use at the start.
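The "only changed code needs re-analysis" idea can be illustrated at file granularity: given which files import which, a change invalidates the edited files plus everything that transitively depends on them. Real incremental analyzers such as Incremental CodeQL track much finer-grained dependencies; this Python sketch and its dependency graph are hypothetical and only show the invalidation step.

```python
from collections import deque


def files_to_reanalyze(imports: dict[str, list[str]], changed: list[str]) -> set[str]:
    """Return the changed files plus every file that transitively depends on them.

    `imports` maps each file to the files it imports.
    """
    # Invert the graph: for each file, which files import it?
    dependents: dict[str, set[str]] = {}
    for src, deps in imports.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(src)

    dirty = set(changed)
    queue = deque(changed)
    while queue:  # breadth-first walk over reverse dependencies
        current = queue.popleft()
        for user in dependents.get(current, ()):
            if user not in dirty:
                dirty.add(user)
                queue.append(user)
    return dirty
```

Everything outside the returned set keeps its previous analysis results, which is where the speedups come from.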

Developer Experience

Developer experience is improving as incremental tools become easier to use. Teams test early versions and ask for feedback through surveys, workshops, and online forums. Analytics show where users struggle. These steps make tools simpler and smoother to use.

User-centered design helps new users learn tools.

Training and support now use real advice from experts.

Product updates match what users and the industry need.

Workshops and updates help users learn new things.

Focusing on usability makes people happier at work.

Academic research also helps make better tools. Prototypes and feedback help teams build good interfaces. This way of always improving builds strong support and makes users more satisfied.

Future Directions

Challenges

Incremental computation still has major problems to solve. Many developers find it hard to reason in terms of small changes. They prefer writing code over full inputs, which slows things down and wastes resources. Designing APIs that are simple yet expressive is hard. Databases do not handle incremental view maintenance well, because they were built for one-time queries, not for continuous updates. Hand-written incremental code is error-prone and harder to debug; for example, a counter of "unread messages" can drift when updates arrive quickly. Many databases still lack incremental engines and keep repeating the same data work. Both practical and conceptual problems remain.

Key challenges in incremental computation:

Developers tend to write code over full inputs, which is inefficient.

APIs must be easy and useful at the same time.

Databases are not designed for continuous updates.

Custom code can cause more mistakes and work.

Not many databases use incremental systems yet.
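One common way a hand-written "unread messages" counter breaks is duplicate or out-of-order delivery when updates arrive quickly. The sketch below contrasts a counter that adjusts a number on each event with a count derived from sets of message IDs, which tolerates replays. The event model and class names are hypothetical assumptions for illustration.

```python
class NaiveUnreadCounter:
    """Hand-maintained counter: correct only if every event arrives exactly once, in order."""

    def __init__(self) -> None:
        self.count = 0

    def on_new_message(self, msg_id: str) -> None:
        self.count += 1

    def on_read(self, msg_id: str) -> None:
        self.count -= 1


class UnreadView:
    """Unread count derived from message IDs, so duplicate or early events are harmless."""

    def __init__(self) -> None:
        self.unread: set[str] = set()
        self.read: set[str] = set()

    def on_new_message(self, msg_id: str) -> None:
        if msg_id not in self.read:  # a "read" may arrive before its message
            self.unread.add(msg_id)

    def on_read(self, msg_id: str) -> None:
        self.read.add(msg_id)
        self.unread.discard(msg_id)  # idempotent: repeating it changes nothing

    @property
    def count(self) -> int:
        return len(self.unread)
```

The derived version trades memory for robustness: the set of read IDs grows without bound unless old IDs are pruned.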

Predictions

Experts expect incremental computation to keep improving over the next five years, especially alongside AI in Retrieval-Augmented Generation (RAG). RAG is expected to help agents remember and reason step by step, and reflection-driven reasoning should let agents give smarter answers. RAG did not change much in 2025, but it has become more important for handling messy data and agent memory. The focus is shifting toward how workflows and memory work together, not just single tools.

Large companies are preparing by building a culture of continuous learning and experimentation. They use AI to personalize how people learn, and teams are building broader skills and working across roles. Planning now means balancing today's needs with what comes next. Leaders also look at how AI, quantum computing, robotics, and other technologies will work together. This convergence will create new opportunities and will require sound governance and investment.

The next big step for incremental computation will come from steady improvements, deeper integration with AI, and new ideas across many fields.

| Future Focus Area | Expected Impact |
| --- | --- |
| Agentic RAG and workflows | Smarter, step-by-step thinking in AI systems |
| Cross-technology synergy | Faster new ideas and business chances |
| Continuous learning | Teams keep up with changing technology |

Incremental computation is a big part of technology in 2025. It helps developers and businesses get updates faster. Systems become smarter and use resources better. Leaders say you should do a few things to keep up:

Go to events and talk to people online.

Use digital tools and make choices with data.

| Resource Allocation | Focus Area | Description |
| --- | --- | --- |
| 70% | Incremental Innovations | Make small changes often to stay ahead. |
| 20% | Adjacent Innovations | Try new markets or use your skills in new ways. |
| 10% | Transformative Innovations | Spend on big projects that could change everything. |

Knowing what’s new helps teams change when new technology comes out.

FAQ

What is incremental computation?

Incremental computation only updates the parts that change. This way, it saves both time and resources. Developers use it to keep systems running fast.

Why do businesses care about incremental computation?

Businesses want answers quickly and to spend less money. Incremental computation helps teams update things faster. This means better choices and happier customers.

Does incremental computation work with AI and edge devices?

Yes, it does. AI models and edge devices use it to handle new data fast. This helps them update in real time and get smarter.

What challenges do developers face with incremental computation?

Developers have trouble with hard-to-use APIs and old systems. They need to learn new tools and ways of working. Teams must get training and help to use incremental computation well.
